The Digital Divide: Unpacking the “Slopaganda” Threat to Alberta’s Identity

Imagine a world where the lines between truth and fiction are constantly blurred, where narratives are expertly crafted and disseminated not by human hands, but by algorithms designed to sow discord and exploit societal vulnerabilities. This isn’t a dystopian novel; it’s the unsettling reality currently unfolding in Alberta, Canada. Researchers have coined a chilling term for this phenomenon: “slopaganda” – a deluge of AI-generated disinformation cleverly disguised as legitimate content, actively fueling discussions about Alberta potentially seceding from Canada to join the United States. This isn’t just about a few rogue accounts; it’s a meticulously coordinated effort by approximately 20 nameless entities that have been actively spreading this misinformation for over a year, accumulating a staggering 40 million views on YouTube alone. This digital assault on civic discourse and the very fabric of Alberta’s identity is not only concerning but highlights a growing global challenge: how do we navigate a digital landscape increasingly populated by manufactured realities and algorithmic manipulation?

The alarm was first raised by the Media Ecosystem Observatory (MEO), a research collaboration between McGill University and the University of Toronto dedicated to understanding the health and dynamics of our media landscape. They unearthed a disturbing pattern: a network of YouTube channels relentlessly pushing separatist narratives targeting Alberta. Chris Ross, a senior analyst and researcher at the MEO, whose investigative report is titled “Slopaganda: The Inauthentic YouTube Network Selling Secession to Albertans,” pulls no punches when describing the nature of this content. He starkly calls the videos “lies,” emphasizing the profound deception embedded within them. Ross describes the sophisticated coordination behind these channels, highlighting how they interlink and reinforce each other’s fabricated narratives. He points to their use of “fake polls,” which are presented as concrete evidence of public sentiment but are in fact fabricated. Even more egregiously, these channels declare political deals as faits accomplis, creating a false sense of inevitability around issues that are nowhere near being properly debated in the public sphere, let alone progressing towards a referendum. As Ross succinctly puts it, “They’re just slop,” a dismissive yet accurate description of the low-quality, misleading, and ultimately harmful content being pumped into Alberta’s digital bloodstream.

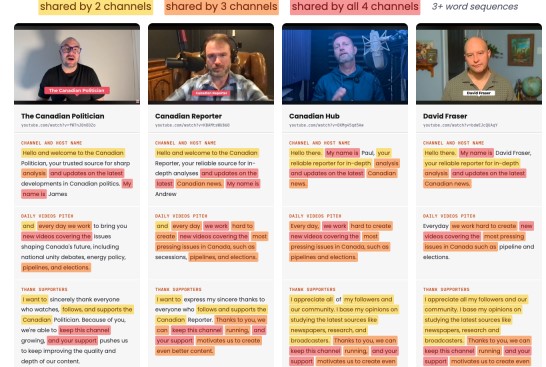

What makes this “slopaganda” particularly insidious is its anonymous nature. Ross and his team, despite their extensive research, were unable to uncover the identities of the actors behind these accounts. This anonymity provides a shield for those seeking to manipulate public opinion without accountability. The MEO report, therefore, issues a crucial call to action, urging YouTube and other social media platforms to reveal information about flagged accounts that are demonstrably spreading false, AI-driven information. The modus operandi of these videos is equally unsettling: they meticulously splice together old news clips, strategically stripping away any contextual information like signage or branding, thereby twisting the original meaning into a new, manipulative narrative. These doctored clips are then seamlessly integrated with AI-generated scripts, read by voices that sound authentic but are entirely artificial. This allows for the mass production of content that appears credible but is fundamentally deceptive, blurring the lines between legitimate journalism and algorithmic propaganda.

The sheer volume and recent acceleration of these views are a stark indicator of the problem’s escalating severity. While these channels have amassed approximately 40 million views over the past year, Ross notes a significant “ratcheting up” in activity over the last few months, suggesting a coordinated effort to intensify the disinformation campaign. Crucially, Ross emphasizes that these accounts do not exhibit the characteristics of genuine Albertans or typical Alberta separatists. This isn’t an organic movement stemming from local sentiment; it’s an external force, seemingly exploiting a pre-existing political fault line. Ross reveals a broader pattern, explaining that “this slopaganda network” extends beyond Alberta’s borders, targeting “tons of different political issues” across various online platforms. The motivation, he suggests, is disarmingly simple: “it’s easy to make money and drive engagement on it.” This monetization of misinformation is a deeply troubling aspect, rewarding those who sow division and polarization, thereby creating a perverse incentive structure within the digital ecosystem. Ross articulates a fundamental concern: “we want real Albertans talking about real Albertan issues. We don’t want people from outside Alberta exploiting it for money just to drive further division.” This highlights the importance of protecting the integrity of local discourse from external, financially motivated manipulation.

Ross expresses a hopeful yet urgent plea: he believes their research can serve as a vital “jumping-off point” for both government bodies and YouTube itself to take decisive action. He acknowledges the complexity of the issue, stating, “It’s a really interesting problem because there’s such a demand for this content… 40 million views on the videos we researched.” This high demand, he suggests, is intertwined with the broader proliferation of AI-generated content. While acknowledging that some AI-generated content can be “genuinely interesting” and even something he personally consumes, Ross draws a crucial distinction: “when it has this political angle, it deserves more scrutiny.” This distinction is critical in navigating the evolving digital landscape; not all AI is inherently harmful, but when it’s weaponized for political manipulation, the stakes are significantly higher. Ross’s final thought on this matter is a sobering assessment of the current state of affairs: “It’s clear technology is moving fast, and I think companies just need to work really hard to catch up, let alone the government, with the regulation.” This underscores the urgent need for a proactive approach from both tech giants and regulatory bodies to address the challenges posed by rapidly advancing AI technologies.

The MEO report doesn’t just identify the problem; it offers concrete calls to action. Beyond demanding transparency from social media platforms regarding flagged accounts, it urges these platforms to tell researchers whether these accounts use paid promotion, which would shed light on the financial backing behind these campaigns. Another crucial request is for audience geographic analytics, which would allow researchers to track who is watching these videos and from where, potentially revealing which audiences the “slopaganda” is reaching and targeting. Finally, the report advocates for extending “community notes,” a fact-checking feature currently present on some platforms, to YouTube, thereby empowering users to contribute to the debunking of false narratives. In response to these revelations, CityNews has reportedly contacted YouTube for comment, signaling a potential first step towards accountability. The MEO’s report, available on their website, urges everyone, from social media companies to government agencies and individual citizens, to recognize the threat of algorithmic disinformation and work collaboratively to safeguard the integrity of our public discourse. The fight against “slopaganda” is not just about protecting Alberta; it’s about preserving a functional democracy in the age of artificial intelligence.