The Problem: When Truth Gets Tricked

Imagine trying to tell if a story is true or false, but you can only look at little bits and pieces, like individual words or pixels. That’s essentially what older fake news detectors have been doing. They’re good at spotting tiny inconsistencies, like a mismatch between a caption and an image, but they don’t really understand why something is fake, or how all those tiny details connect to create a believable lie. Think of it like a detective who can only analyze fingerprints but can’t piece together a motive, a timeline, or the bigger picture of a crime. These traditional models are also often too rigid: they apply a one-size-fits-all test for telling real from fake, so they struggle when the real world throws new, clever deceptions their way. Plus, they usually just give you a “yes, it’s fake” or “no, it’s real” answer, without explaining how they reached that conclusion. It’s like a judge giving a verdict without any reasoning, leaving us in the dark about the actual fabrication. This isn’t just an intellectual curiosity; in a world drowning in misinformation, knowing how and why a piece of news is false is crucial for learning, for building trust, and for staying ahead of those who craft these deceptive narratives. We need to move beyond simple “fake” labels to truly understand the anatomy of manipulation.

Our Solution: A Super-Detective with Layers of Investigation

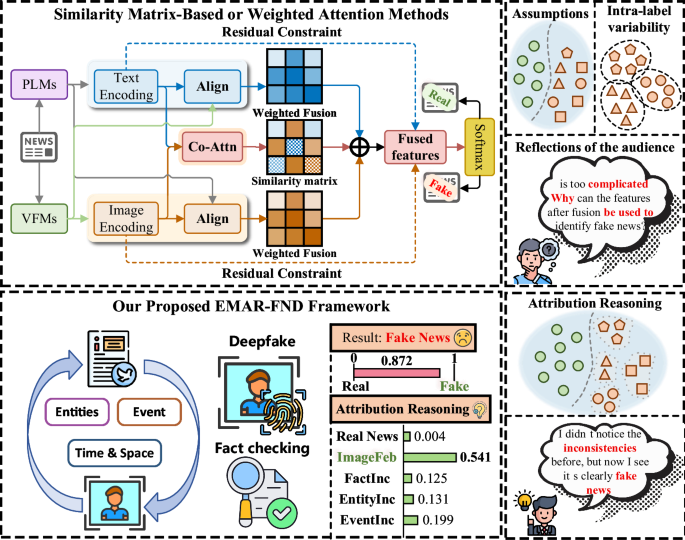

To tackle this, we’ve built something much more sophisticated – what we call the Explainable Multi-Granularity Attribution Reasoning Framework for Fake News Detection (EMAR-FND). Think of EMAR-FND as a team of highly specialized detectives, each focusing on a different aspect of a news story, from the smallest pixel to the biggest global connections. Instead of just a “fake” stamp, our system provides a detailed report, showing exactly where and how the deception occurs.

Here’s how our super-detective team works:

- The Visual Forensics Expert (Image-level attribution reasoning module): This detective is a master of images. It scrutinizes every pixel, looking for subtle clues that an image might have been altered. Even if perpetrators use fancy AI to generate fake photos (deepfakes!), our system can detect the tiny, often invisible, traces left behind by the digital manipulation. It’s like finding a ghost in the machine – a faint digital signature that reveals a picture isn’t what it seems. It focuses on the fundamental “Noiseprint” of an image – the unique statistical noise patterns left by a camera sensor. When an image is tampered with, this unique fingerprint gets disrupted in tell-tale ways, like a jigsaw puzzle piece that doesn’t quite fit. Our system trains itself to spot these anomalies, becoming incredibly sensitive to even the slightest digital doctoring. It doesn’t just see the image; it “feels” its underlying integrity.

- The Knowledge Weaver (Knowledge-aware anchor graph construction module): This detective is a brilliant researcher, connecting the dots between information. It digs into vast databases of actual facts – what we call a “knowledge graph” – to see if the claims in the news story align with established reality. If a news article says a cat flew to the moon, this detective quickly checks if “cats,” “flying,” and “moon” have any factual connection in the real world. It doesn’t just look for blatant contradictions; it builds a network of related facts to assess the overall plausibility of the news. This is where reinforcement learning comes in; our system learns to intuitively navigate the huge ocean of knowledge, picking out only the most relevant facts to verify a news item. It’s like having a hyper-efficient librarian who knows exactly which book to pull off the shelf to confirm or deny a specific piece of information, without getting buried under irrelevant details.

- The Narrative Coherence Specialist (Event-level consistency reasoning module): This detective focuses on the “story” itself. It examines both the text and the visuals to see if they tell a consistent story. If the text describes a sunny day, but the image shows a blizzard, this detective flags it immediately. It’s looking for the fundamental narrative thread that runs through a news item, ensuring that all its multimodal components are singing from the same hymn sheet, so to speak. This module goes beyond simply matching words to pictures; it builds a deep understanding of the events being described, allowing it to spot subtle semantic and contextual inconsistencies that a surface-level check might miss.

- The Alibi Checker (Spatio-temporal matching module): This keen observer zeroes in on where and when things happened. It compares the locations and times mentioned in the text with what’s visible or implied in the images. Did the event supposedly happen in New York yesterday, but the photo looks like it was taken in Paris five years ago? This detective catches those critical time and place discrepancies, which are often key indicators of fabrication. This is about establishing a clear alibi for the news, ensuring that its reported location and timeline are fully supported by all available evidence.

- The Chief Investigator (Multi-granularity attribution aggregation module): Finally, all these specialized reports land on the desk of the Chief Investigator. This module takes all the findings – the image manipulation cues, the factual consistency reports, the narrative coherence assessment, and the spatio-temporal alibi checks – and combines them. It doesn’t just average them; it intelligently weighs each piece of evidence, giving more importance to the strongest clues, to deliver a final, highly reliable verdict on whether the news is fake, and more importantly, why. It synthesizes a complex tapestry of information into a clear and actionable conclusion, making the usually opaque process of AI detection transparent and understandable.

Together, these “detectives” form EMAR-FND, a holistic and explainable approach to fake news detection that goes far beyond what traditional models can do.
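To make the Chief Investigator's "weigh, don't average" idea concrete, here is a minimal sketch. It assumes each module hands over a fake-probability plus an evidence-strength score, and that the weights come from a softmax over those strengths. The function name `aggregate`, the module keys, and the numbers are all hypothetical; the actual aggregation module may well be a learned network rather than this fixed rule.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D sequence."""
    x = np.asarray(x, dtype=float)
    e = np.exp(x - x.max())
    return e / e.sum()

def aggregate(module_scores, evidence_strength):
    """Combine per-module fake-probabilities into one verdict.

    Assumption: weights are a softmax over evidence strengths, so the
    strongest clue dominates instead of every module counting equally.
    Returns the verdict and the per-module weights (the "why").
    """
    names = list(module_scores)
    scores = np.array([module_scores[n] for n in names], dtype=float)
    weights = softmax([evidence_strength[n] for n in names])
    verdict = float(weights @ scores)
    report = {n: float(w) for n, w in zip(names, weights)}
    return verdict, report

# Hypothetical module outputs for one news item.
scores = {"image": 0.92, "knowledge": 0.40, "event": 0.55, "spatio_temporal": 0.30}
strength = {"image": 2.5, "knowledge": 0.3, "event": 0.8, "spatio_temporal": 0.1}
verdict, report = aggregate(scores, strength)
print(f"fake probability: {verdict:.2f}")
print("most influential module:", max(report, key=report.get))
```

Returning the weights alongside the verdict is what makes the result explainable: the report shows which detective's evidence carried the decision.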

Our Training Ground: Real-World Lies and Truths

To make sure our EMAR-FND super-detective team was truly ready for the real world, we trained and tested it on two massive, publicly available datasets: AMG and Pheme. Think of these as vast archives of news stories, meticulously labeled by human experts as either real or fake. These weren’t curated, clean laboratory samples; they were messy, real-world examples scraped from social media platforms like Twitter and TikTok, covering everything from everyday events to major emergencies.

- The AMG Dataset: This collection is like a digital scrapbook of social media posts, complete with text, images, and even videos (we focus on text and images, so for videos, we pick a representative still image). It’s got thousands of real news stories and thousands of fake ones, all vetted by professional fact-checking organizations like Snopes and CHECKYOURFACT. This dataset is particularly rich because it draws from multiple social media sites, giving our model exposure to diverse styles of news dissemination, both truthful and deceptive. It’s a goldmine for understanding how fake news originates and spreads across different platforms.

- The Pheme Dataset: This dataset is a classic in the field of rumor analysis, focusing specifically on how news, true or false, spreads during breaking events. Each item comes from Twitter and relates to one of five breaking news stories. It’s packed with examples of real and fake news, often showing how quick, unverified information can take root during chaotic times. If a news item was purely text and lacked an image, we created a placeholder (a black image) so that our multimodal system always had both text and visual input, even when no real visual existed. This challenges our model to infer meaning even when visual cues are minimal or generic.
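The black-image placeholder for text-only items is simple enough to sketch in a few lines. The helper name `image_or_placeholder` and the 224x224 RGB size are assumptions for illustration; the size actually used is not stated here.

```python
import numpy as np

def image_or_placeholder(image, size=(224, 224)):
    """Return the given image array, or an all-black RGB image if none exists.

    Assumption: images are uint8 arrays of shape (height, width, 3).
    """
    if image is None:
        return np.zeros((size[0], size[1], 3), dtype=np.uint8)
    return image

# A text-only post still yields a valid visual input.
visual_input = image_or_placeholder(None)
print(visual_input.shape, int(visual_input.max()))
```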

By testing EMAR-FND on these diverse and challenging datasets, we could confirm that it doesn’t just work in theory but can stand up to the complexities and nuances of real-world misinformation. It’s like putting our detective team through grueling training scenarios, ensuring they’re prepared for any case that comes their way. The detailed statistics of these datasets, including the number of real vs. fake news stories, the types of content (text, image, video), and their sources, provided a rigorous proving ground for our innovative framework.

The Detective’s Toolkit: How Each Specialist Does Their Job

Let’s zoom in on the specialized tools and techniques each of our EMAR-FND detectives uses to sniff out deception:

1. The Visual Forensics Expert (Image Manipulation Detection):

This isn’t your grandma’s photo editor. Modern deepfakes and AI-generated images are incredibly convincing. Our system uses a trick called “Noiseprint” analysis. Think of every camera as having a unique “fingerprint” in the noise it leaves in an image. When an image is tampered with, this subtle fingerprint gets messed up. Our system specifically looks for these disruptions.

- Noiseprint Extraction: We use an extractor called DnCNN, a denoising convolutional neural network (think of it as a super-sensitive sensor), to pull out this unique noise pattern from an image. It’s like revealing the hidden texture underneath the surface.

- Contrastive Learning: To train this extractor, we show it many image patches. It learns to recognize if two patches came from the same unaltered source (they should have similar noise patterns) or if they’re different (implying one might be forged). This is like teaching it to differentiate between authentic signatures and forged ones by highlighting subtle differences.

- Robustness is Key: Images in real news are often resized, compressed, or brightened. Our system is trained to handle these common alterations while still spotting the actual manipulation. We also combine high-level (what the image shows) and low-level (the pixel data) features to get a complete picture.

- Cross-Modal Calibration: The image features are then checked against the text features. If the image shows a blue car but the text talks about a red car, this cross-calibration helps flag a potential issue, filtering out “noisy” or irrelevant data in one modality based on the other.

- Anomaly and Confidence Maps: The system generates two maps: an “anomaly map” showing where it suspects tampering, and a “confidence map” indicating how sure it is at each location. These maps are then condensed into a single “completeness score” that summarizes how intact the image appears.
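Two of the steps above lend themselves to a short sketch: the contrastive objective used to train the noise extractor, and the pooling of the two maps into one score. Both functions below are assumptions for illustration. `contrastive_pair_loss` is the classic margin-based pairwise loss, which may differ from the exact objective used here, and `completeness_score` is one plausible pooling rule (a confidence-weighted average), not a confirmed formula.

```python
import numpy as np

def contrastive_pair_loss(emb_a, emb_b, same_source, margin=1.0):
    """Classic pairwise contrastive loss on two patch embeddings.

    Patches from the same unaltered source are pulled together;
    patches from different sources are pushed at least `margin` apart.
    """
    d = float(np.linalg.norm(np.asarray(emb_a, float) - np.asarray(emb_b, float)))
    return d ** 2 if same_source else max(0.0, margin - d) ** 2

def completeness_score(anomaly_map, confidence_map, eps=1e-8):
    """Confidence-weighted average of (1 - anomaly), in [0, 1].

    Assumption: both maps hold per-pixel values in [0, 1]; pixels the
    model is unsure about contribute less to the final score.
    """
    a = np.clip(np.asarray(anomaly_map, dtype=float), 0.0, 1.0)
    c = np.clip(np.asarray(confidence_map, dtype=float), 0.0, 1.0)
    return float(((1.0 - a) * c).sum() / (c.sum() + eps))

# Hypothetical 4x4 maps: one 2x2 corner looks tampered, full confidence everywhere.
anomaly = np.zeros((4, 4))
anomaly[:2, :2] = 1.0
confidence = np.ones((4, 4))
print(round(completeness_score(anomaly, confidence), 2))  # 12 of 16 pixels clean -> 0.75
```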

2. The Knowledge Weaver (Adaptive Knowledge-Aware Anchor Graph):

This detective is all about context and fact-checking, but smartly. Simply throwing all available knowledge at a news story creates too much clutter. So, it learns to pick just the right facts.

- Reinforcement Learning for Smart Knowledge Selection: Imagine a game where the goal is to find relevant facts. Our system, using “reinforcement learning,” plays this game. Starting with the news item, it explores a vast “knowledge graph” (a network of facts and relationships). It gets a “reward” for finding facts closely related to the news, helping it build a focused “anchor graph” of relevant external knowledge. It’s like sending a highly trained search dog into a forest – it doesn’t just run around aimlessly; it follows the scent of relevance.

- Markov Decision Process: This is the underlying “game” structure. The system moves from state to state (absorbing new facts), making decisions (actions) to grow its anchor graph, and getting rewarded for good choices.

- Refining the Anchor Graph: Even with smart selection, some irrelevant noise can sneak in. We then refine this graph by looking at the semantic similarity between news sentences and the facts. If a fact is too far-fetched or unrelated to the sentence, it gets pruned. We apply techniques like weighted cosine similarity and linear programming to rigorously prune the graph, ensuring only the most semantically relevant and high-confidence connections remain. This is crucial for avoiding the “garbage in, garbage out” problem.

- Top-K Edge Selection: For each “node” (a piece of information) in our graph, we identify its k most important connections, effectively filtering out less relevant ones. This ensures our knowledge graph is sparse, high-confidence, and truly impactful.
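The refinement and Top-K steps can be sketched as follows. This is a simplified illustration, not the full procedure: it uses plain (unweighted) cosine similarity with a fixed threshold, and it leaves out both the linear-programming step and the reinforcement-learning selection that come before it. The names `prune_anchor_graph`, `k`, and `threshold`, and the toy embeddings, are hypothetical.

```python
import numpy as np

def cosine_sim(A, B, eps=1e-8):
    """Pairwise cosine similarity between rows of A and rows of B."""
    A = A / (np.linalg.norm(A, axis=1, keepdims=True) + eps)
    B = B / (np.linalg.norm(B, axis=1, keepdims=True) + eps)
    return A @ B.T

def prune_anchor_graph(sent_emb, fact_emb, k=2, threshold=0.3):
    """Keep, for each sentence node, at most k fact edges above the threshold.

    Returns a list of (sentence_index, fact_index, similarity) tuples,
    sorted from most to least similar within each sentence.
    """
    sim = cosine_sim(np.asarray(sent_emb, float), np.asarray(fact_emb, float))
    edges = []
    for i, row in enumerate(sim):
        top = np.argsort(row)[::-1][:k]  # indices of the k most similar facts
        edges += [(i, int(j), float(row[j])) for j in top if row[j] >= threshold]
    return edges

# Toy 2-D embeddings: one sentence, three candidate facts.
sentences = [[1.0, 0.0]]
facts = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
for s, f, w in prune_anchor_graph(sentences, facts):
    print(f"sentence {s} -> fact {f} (similarity {w:.2f})")
```

The orthogonal fact (index 1) is dropped, which is the "garbage in, garbage out" protection in miniature.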

3. The Narrative Coherence Specialist (Bidirectional Heterogeneous Graph for Event Consistency):

This detective analyzes how consistently events are portrayed across text and visuals.

- Multimodal Event Representation: We extract key events from both text (using advanced language models like BERT) and images (identifying objects with visual models like ResNet-50). All of these elements (words, objects, and higher-level conceptual events) become nodes in a network called a “heterogeneous graph.”

- Connecting the Dots (Intra- and Inter-modality): Within this graph, we build connections not just between similar types of information (e.g., word to word) but also between different types (e.g., a word describing an object to the object itself in an image). This allows our system to spot inconsistencies across modalities – like the “sunny text, blizzard image” example.

- Graph Attention Network (GAT): To filter out irrelevant connections and focus on the most meaningful ones, we use a GAT. It’s like having a spotlight that highlights the most important relationships between all the elements in the news. This allows the system to adaptively select connections, prioritizing those that carry significant semantic weight for event consistency.

- Event-Level Prediction: The refined, coherent parts of the event graph are then “pooled” and fed into a classifier to determine if the events described are consistent or if there are significant clashes. This provides a prediction on the event’s overall veracity.
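For readers curious what the GAT "spotlight" looks like in code, here is a minimal single-head graph attention layer in NumPy, followed by mean pooling. It follows the standard GAT formulation; the random weights, the tiny three-node graph, and the choice of mean pooling are assumptions for illustration. The real module works on a heterogeneous graph with trained parameters.

```python
import numpy as np

def leaky_relu(x, slope=0.2):
    return np.where(x > 0, x, slope * x)

def gat_layer(H, adj, W, a):
    """Single-head graph attention.

    H: (n, d_in) node features, adj: (n, n) 0/1 adjacency,
    W: (d_in, d_out) projection, a: (2 * d_out,) attention vector.
    Returns updated node features and the attention matrix.
    """
    adj = np.asarray(adj, dtype=float) + np.eye(len(adj))  # add self-loops
    Z = H @ W
    d = Z.shape[1]
    # e[i, j] = LeakyReLU(a^T [z_i || z_j]) splits into a source and a target term.
    e = leaky_relu((Z @ a[:d])[:, None] + (Z @ a[d:])[None, :])
    e = np.where(adj > 0, e, -np.inf)          # attend only along existing edges
    e = e - e.max(axis=1, keepdims=True)       # stabilise the softmax
    alpha = np.exp(e)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)
    return alpha @ Z, alpha

# Toy graph: word node 0 - event node 1 - object node 2 (a chain).
rng = np.random.default_rng(0)
H = rng.normal(size=(3, 4))
W = rng.normal(size=(4, 2))
a = rng.normal(size=4)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]])

node_out, attention = gat_layer(H, adj, W, a)
graph_vector = node_out.mean(axis=0)  # mean pooling before a classifier
print(attention.round(2))
```

Each row of `attention` sums to one, and entries for unconnected node pairs are exactly zero, so the matrix can be read directly as "which connections mattered".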

4. The Alibi Checker (Spatio-Temporal Matching Network):

This detective ensures the “where” and “when” of a story hold up.

- Integrating Spatio-Temporal Clues: We take the geographical and temporal information extracted from the news (e.g., “yesterday in London”) and compare it with visual evidence that might imply location or time.

- Multi-Head Attention: This mechanism allows the system to focus on how the textual information about place and time aligns (or misaligns) with the visual cues. It’s like having multiple pairs of eyes, each looking for different types of spatio-temporal matches.

- Projection and Concatenation: The geographical/temporal insights from text and visuals are mapped into a common “feature space” and then combined. This creates a rich representation that explicitly captures spatio-temporal consistency (or lack thereof). This combined feature then contributes to the overall fake news prediction, specifically highlighting temporal or spatial discrepancies as potential flags.
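The attention, projection, and concatenation steps can be sketched in NumPy as below. This is a stripped-down illustration: the learned per-head query/key/value projections of a full multi-head attention layer are omitted, features are mean-pooled before projection, and all shapes, names, and random weights are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_cross_attention(Q, K, V, num_heads):
    """Scaled dot-product attention split across heads.

    Q: (n_q, d) textual place/time features (queries);
    K, V: (n_k, d) visual features (keys and values).
    """
    n_q, d = Q.shape
    assert d % num_heads == 0, "feature size must divide evenly across heads"
    dh = d // num_heads

    def split(X):
        # (n, d) -> (heads, n, dh)
        return X.reshape(X.shape[0], num_heads, dh).transpose(1, 0, 2)

    q, k, v = split(Q), split(K), split(V)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(dh)  # (heads, n_q, n_k)
    out = softmax(scores) @ v                         # (heads, n_q, dh)
    return out.transpose(1, 0, 2).reshape(n_q, d)     # merge heads again

def spatio_temporal_feature(text_feat, visual_feat, W_t, W_v, num_heads=2):
    """Project both views into a shared space and concatenate them."""
    attended = multi_head_cross_attention(text_feat, visual_feat, visual_feat, num_heads)
    t = text_feat.mean(axis=0) @ W_t   # pooled textual where/when clues
    v = attended.mean(axis=0) @ W_v    # visual evidence aligned to those clues
    return np.concatenate([t, v])

rng = np.random.default_rng(0)
text_feat = rng.normal(size=(3, 4))    # e.g. three place/time mentions
visual_feat = rng.normal(size=(5, 4))  # e.g. five image regions
W_t = rng.normal(size=(4, 6))
W_v = rng.normal(size=(4, 6))
feature = spatio_temporal_feature(text_feat, visual_feat, W_t, W_v)
print(feature.shape)
```

The concatenated vector keeps the textual and visual halves side by side, so a downstream classifier can learn what a mismatch between them looks like.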

The Big Picture: Why This Matters for All of Us

Ultimately, all these specialized investigative efforts lead to one goal: to create a system that doesn’t just tell you something is fake, but shows you how and why. Traditional fake news detection has been a bit like a black box: you put news in, and either a “real” or “fake” label comes out, without much explanation. This leaves us feeling distrustful and unable to learn. What if the model was wrong? How could we even challenge it?

Our EMAR-FND framework changes this. By meticulously breaking down the detection process into four fine-grained, interconnected reasoning modules – from scrutinizing tiny visual alterations to cross-referencing vast knowledge bases, checking for narrative consistency, and verifying spatial-temporal details – we gain an unprecedented level of interpretability. When EMAR-FND identifies a piece of news as fake, it can point to the specific visual forgery, the factual inconsistency, the event-level contradiction, or the spatio-temporal mismatch that led to its conclusion. This isn’t just about catching more fake news; it’s about empowering users with the knowledge to understand how they are being deceived. In an age where misinformation spreads faster than truth, providing such granular explanations is vital. It allows us to build trust in AI systems, educate individuals on the tactics of fabrication, and foster a more discerning information environment. This is not just a technological advancement; it’s a step towards restoring confidence in the information we consume daily.

The Power of Explanation: Building Trust and Understanding

The real magic of EMAR-FND lies in its ability to explain itself. Imagine a doctor who not only tells you your diagnosis but also shows you the MRI scans, explains the blood test results, and points to the specific symptoms that led to their conclusion. That’s what EMAR-FND aims to do for fake news. When it flags a news item as fake, it doesn’t just give a thumbs down; it can say, “This image shows signs of digital alteration here,” or “This claim contradicts established facts about this event,” or “The timeline in the text doesn’t match the visual evidence.”

This level of detail is transformative. For individuals, it helps them develop critical thinking skills and become more resilient against manipulation. They learn to identify the tell-tale signs of fake news themselves, rather than blindly trusting an algorithm. For content platforms and journalists, it provides powerful tools for fact-checking and for understanding the specific vulnerabilities of news stories to fabrication. It moves us beyond a simple binary judgment to a nuanced understanding of deception. By making the inner workings of our detection system transparent, we build trust, making our fight against misinformation not just more effective, but also more human-centric. This is crucial because, at its heart, fake news is about eroding trust and confusing human understanding. Our goal is to counter that by bringing clarity and reason to the forefront.