It’s truly a complex world we live in, isn’t it? Every day, we’re bombarded with information, and figuring out what’s true and what’s not, especially on big, important topics like sustainable energy, feels like a constant battle. Renewable energy projects such as wind farms are a case in point. We want to do good for the planet, but then rumors and false information start flying around, making people doubt whether these solutions are actually good ideas. And with Generative AI, or GenAI, becoming a go-to source of answers for many people, there’s a real concern: could these tools make the problem worse by spreading even more misinformation? Until recently, we didn’t have a good handle on what happens when real people ask these AI tools about wind farms, but recent studies are finally starting to shed some light on this crucial question.

The worry about GenAI isn’t unfounded. Imagine feeding a hungry computer an enormous share of the text ever published on the internet: that’s roughly how GenAI models learn. The problem is, the internet is full of unreliable stories, biased opinions, and outright lies. If a GenAI model learns from all that messy data, there’s a big risk it will pick up those inaccuracies and spit them back out to us. It’s like a game of telephone, but on a massive scale, where biases and misinformation can get amplified and spread far and wide. Many experts have raised red flags, fearing that these AI tools could become breeding grounds for conspiracy theories, or that their knowledge could degrade if they keep learning from their own generated, potentially flawed, content. It’s a genuine anxiety, a feeling that we might be creating a monster we can’t control, one that could undermine our efforts to build a more sustainable future.

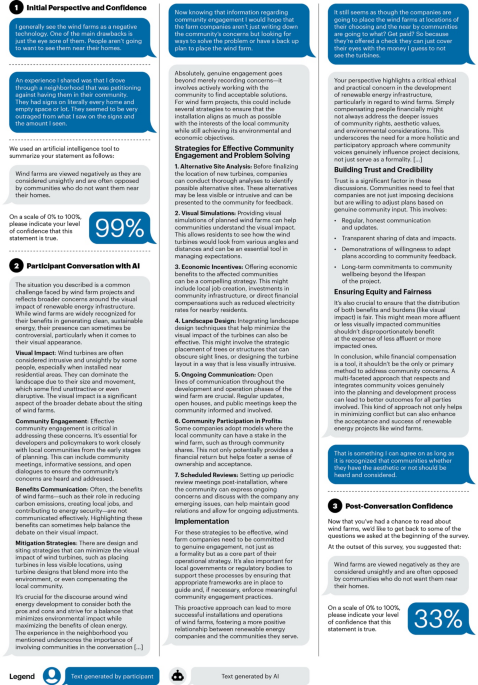

But here’s a surprising twist from these studies: for all the hand-wringing and worry, it seems that, under the specific circumstances tested, interacting with ChatGPT didn’t make things worse when people were forming opinions about wind farms. The researchers meticulously checked the conversations, even having human experts review a significant portion, and found no clear evidence of ChatGPT actively introducing misinformation. Now, this isn’t a free pass. Every conversation with ChatGPT is unique, because the answers depend on what you ask it, so we can’t definitively say it never introduces misinformation. However, these findings offer a provisional sigh of relief, suggesting that in these particular scenarios, the AI wasn’t poisoning the well. It’s like finding out that the water you were worried about drinking isn’t toxic, though you’d still want a more thorough test before declaring it perfectly safe.

What’s even more encouraging is that these conversations seemed to help. For people who initially had really negative opinions about wind farms, talking to ChatGPT for a few rounds nudged them in a more positive direction. Their confidence in their negative beliefs went down by about 9-10%. After these AI chats, people were also more supportive of policies that promote wind energy and, importantly, less likely to agree with misinformation. These changes, while not earth-shattering, are still meaningful, especially when you consider that simply chatting about sports with the AI had no such effect. In the world of science communication, where getting people to change their minds is incredibly difficult, these modest but real shifts are a promising sign. If small changes like these happened for millions of people, they could genuinely affect how we think about and invest in renewable energy, speeding up the deployment of critical climate infrastructure.

Now, here’s the catch: just like most things in life, these positive effects don’t last forever. The studies showed that some of these improvements tended to fade over time. It’s a bit like trying to sustain a healthy habit: it takes consistent effort. This wearing-off effect is common when it comes to changing people’s attitudes, especially on topics that stir up strong emotions or political opinions. In one study, for example, the increased support for pro-wind policies and the reduced confidence in negative beliefs had largely disappeared a month later. However, there was a glimmer of hope: the reduction in belief in misinformation seemed to stick around longer. This suggests that it might be easier to correct specific false facts than to fundamentally shift deep-seated, emotionally charged opinions.

It’s interesting to compare these findings with other research. Some studies, particularly those on broader conspiracy theories such as those about the JFK assassination or aliens, found that positive changes from AI conversations were more durable. But with wind farms, the situation is different. Wind farm concerns aren’t just abstract ideas; they’re often rooted in local community discussions, worries about property values, or personal experiences with noise and visual impacts. These are tangible, local issues that become intertwined with people’s identities and community bonds, making them much tougher to change through informational conversations alone. Another key difference was that these studies didn’t “prime” the AI to try to persuade people; the AI simply engaged in a natural conversation. This makes the results more realistic, reflecting how most people would actually interact with these systems. But it also means the AI may have been more informational than persuasive, which could explain why the effects, while positive, were weaker and shorter-lived than in studies where the AI was specifically instructed to change minds. It’s a trade-off between how effective the intervention is and how closely it resembles real-world use.

So, where does this leave us? GenAI tools like ChatGPT show real promise for combating misinformation, especially in areas like sustainable energy. Their ability to tailor conversations to individual concerns, their accessibility to a wide audience, and their interactive nature could all be powerful advantages over traditional static fact sheets. Imagine an AI that could explain complex energy concepts in simple terms, answering your specific questions about wind turbine noise or property values, rather than just giving you a generic document. This personalized approach could foster a sense of trust and agency, making people more open to considering new information.

However, we need to proceed with caution. The “black box” nature of these AI algorithms means we don’t always understand how they arrive at their answers, which could erode trust if errors surface down the line. Plus, because every conversation is unique, it’s hard to pinpoint exactly what in the interaction causes people to change their minds. Was it the tone, the specific arguments, or something else entirely? This lack of clarity makes it hard to reproduce the same results every time or to optimize the intervention for maximum effectiveness. We also need to be mindful of the environmental cost of running these powerful AI models, though that cost needs to be weighed against the potential climate benefits of wider renewable energy adoption.

Looking ahead, researchers are exploring exciting new avenues. One idea is “ensemble learning,” where multiple AI models, each specialized in different aspects of wind farms (like technical details, health impacts, or economic arguments), could work together to provide more comprehensive and nuanced information. Another approach involves using AI to map how misinformation spreads on social media, allowing us to target corrective information more strategically. And to combat the fading effects, multi-session interventions, where people engage with AI at different intervals, could help reinforce positive changes over time. Imagine an AI browser extension that subtly flags misinformation you encounter online, gently offering corrective information without being intrusive.

In essence, GenAI isn’t a silver bullet, but it’s a valuable new arrow in our quiver for fighting misinformation about wind farms. It has strengths in its adaptability, accessibility, and personalized approach, making it a promising complement to existing strategies. But we must be careful. The modest effects and the tendency for changes to fade highlight the need for more research and thoughtful implementation. Transparency, ethical use, and combining AI with community-based efforts will be crucial to harnessing its potential and really moving the needle on sustainable development. It’s about finding that delicate balance between embracing new technology and ensuring it genuinely serves humanity’s best interests.