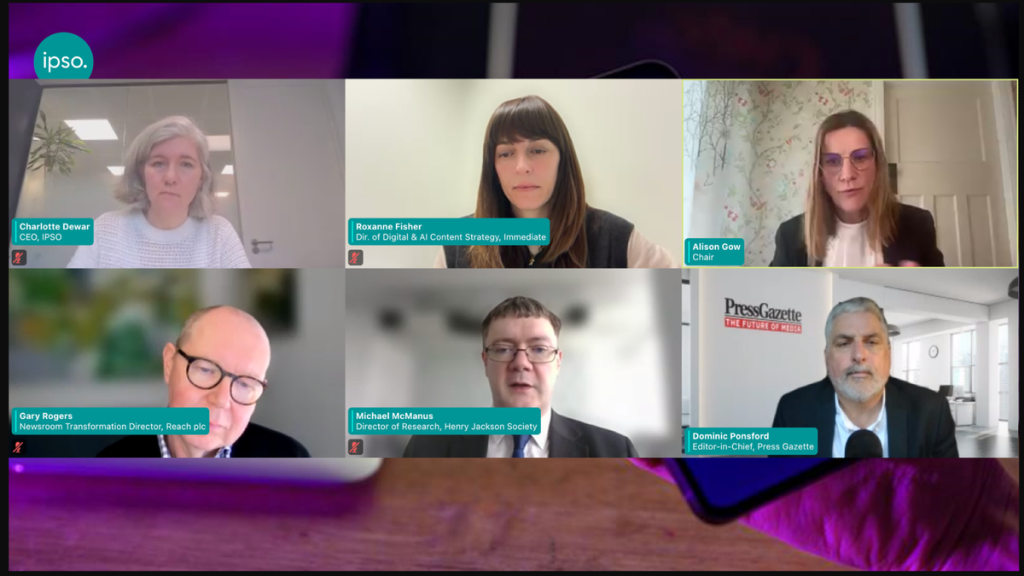

It seems like we’re in the middle of a digital arms race, but instead of traditional weapons, the battleground is information itself. Artificial intelligence (AI), a technology once celebrated for its potential to streamline tasks and enhance efficiency, is now being weaponized to spread misinformation and deceit at an unprecedented scale. This isn’t just a theoretical problem; it’s a very real threat that’s already tricking even seasoned journalists and eroding public trust in news. Independent press regulator IPSO recently brought together a group of leading editors and strategists in the UK to discuss this very issue, highlighting the urgent need for robust strategies to protect journalistic integrity and the public’s understanding of truth. Their insights painted a stark picture of the challenges ahead and offered a glimpse into how news organizations are fighting back.

Michael McManus, research director at an influential think tank focused on foreign affairs, kicked off the discussion with a sobering assessment: AI has become a primary tool for malicious actors. He explained that nation-states, extremist groups, and even individuals driven by ideology are now able to pollute our information ecosystem with astonishing speed and sophistication. “The leaps are geometric,” he warned, emphasizing how AI can churn out convincing fake narratives, craft hyper-personalized phishing attacks that are hard to distinguish from legitimate communications, and create deepfakes so realistic that they blur the lines between what’s real and what’s fabricated. McManus pointed to recent Russian operations where AI-driven social engineering scams, using highly tailored and credible-sounding messages, targeted high-level officials. He stressed that as deepfake technology continues to improve, journalists will soon face an “event horizon”: a critical point where it becomes almost impossible to visually or audibly differentiate genuine content from manipulated versions. McManus drew a crucial distinction: “Disinformation is when somebody deliberately puts something in the ecosystem which they know to be untrue, but misinformation is when people in good faith share it believing it to be true.” The danger lies not only in malicious intent but also in the unwitting spread of falsehoods by well-meaning individuals, including journalists, if they lack robust verification tools and training. To combat this, McManus advocated for a new standard: “verify, then trust.” He highlighted initiatives like BBC Verify and Finland’s deepfake detection curriculum in schools as successful models for building “herd immunity” among both journalists and the general public, equipping them to critically assess information. Ultimately, he insisted, AI tools are only as effective as the humans who program them, and constant human oversight is essential to guard against both technical flaws and subtle corporate biases.

Adding another layer to this deception, the discussion shifted to how AI is being used to manipulate the public relations (PR) sector and, by extension, newsrooms. Press Gazette’s “Reality Wars” series has extensively documented a disturbing trend: seemingly legitimate expert sources, complete with quotes, that turn out to be entirely AI-generated. This has led to embarrassing gaffes for major publications like The Telegraph, Wired, and Business Insider, which have unknowingly published content from fake freelancers. Dominic Ponsford, Editor-in-Chief of Press Gazette, explained how some PR agencies have “weaponised and industrialised” the process of securing SEO mentions for brands. These agencies exploit journalists’ traditional trust in press releases, particularly for “softer” lifestyle stories, to push fabricated narratives. The result? Thousands of articles in mainstream media featuring entirely made-up sources. Ponsford urged journalists to adopt a deeply skeptical approach: treat unsolicited emails as potentially AI-generated until proven otherwise, verify sources through harder-to-forge channels like LinkedIn or direct phone calls, and use AI-detection tools like Pangram and Identify-AI. He delivered a blunt warning: “Don’t take them as an expert just because they’ve appeared in lots of other publications, because we found [fake] people that have appeared in maybe 50 different places. They’ve been just as fake in every other article as the next one that gets written.” This highlights the urgent need for a fundamental shift in journalistic practice, moving away from inherent trust towards rigorous, independent verification.

Gary Rogers, Newsroom Transformation Director at Reach, a major UK publisher, openly acknowledged that his organization has also fallen victim to these deceptive tactics. He confirmed that Reach took the Press Gazette investigation “very seriously” and promptly removed any affected stories. Rogers emphasized the severe threat to the essential trust between PR professionals and journalists when fabricated stories are published. In response, Reach has significantly tightened its vetting process for PR agencies. More crucially, they are rolling out an “advisory research assistant” tool to all their journalists. This AI-powered tool is designed to flag suspicious emails, verify the legitimacy of senders, and search for an internet footprint that goes beyond mere circular references, which often characterize fake personas. “It’s not a yes or no; it’s a ‘here are some red flags, you need to go check,’” Rogers explained, underscoring that the AI assists human judgment rather than replacing it. A cornerstone of Reach’s strategy is comprehensive training and fostering a culture of constant verification. They employ a “buy and build” approach to AI, integrating off-the-shelf solutions while also developing in-house tools like “Guten” for content repurposing. Each new tool undergoes a strict vetting process, with particular attention paid to data handling and the potential exposure of sensitive information, ensuring robust governance around privacy and source protection. While recognizing AI’s potential to streamline workflows and automate repetitive tasks, Rogers offered a pragmatic warning: it’s not a “miracle engine.” “They will cause you problems. They could save you effort. You have to get the balance right,” he cautioned, highlighting the constant negotiation between efficiency and potential risks.

Similarly, Roxanne Fisher, Director of Digital & AI Content Strategy at Immediate Media, a prominent magazine publisher, shared her organization’s journey, which is firmly rooted in transparency, inclusivity, and responsible experimentation. From the outset, Immediate Media has been open about its use of AI, establishing clear “guardrails”: rules for what AI can and cannot be used for. Fisher stressed that the company is “very careful to avoid AI for AI’s sake,” insisting that all AI applications, whether for content creators or readers, must address a genuine user need. To address concerns about data security and reliance on external AI models, Immediate Media developed “First Draft,” an internal tool exclusively trained on the company’s vast archives of proprietary content. This tool aids in research and content repurposing, crucially providing full citations and references for every output, ensuring accountability and traceability. “We talk about being here for assisted, not generated AI content,” Fisher clarified, emphasizing that all AI-generated outputs are subject to rigorous human oversight and editorial judgment. Training is a significant focus, with “immersion days” featuring external experts who discuss ethical considerations and build confidence among staff in using AI effectively and responsibly. Fisher concluded that Immediate Media wants to be an active participant in shaping the future of AI in media: “We’d much rather be part of the conversation and the problem-solving of how we use [AI] in the best way possible to enhance what we do, not obliterate it.”

In essence, the conversations at IPSO painted a vivid picture of a media industry grappling with a double-edged sword. AI offers immense potential for efficiency and innovation, but it also presents a formidable challenge in the form of weaponized disinformation. The “geometric” leaps in AI’s capabilities mean that journalists and the public collectively face an “event horizon” where discerning truth from fiction becomes incredibly difficult. However, the responses from organizations like Reach and Immediate Media demonstrate that through a combination of enhanced technological tools, rigorous internal training, skepticism towards unsolicited content, and an unwavering commitment to human oversight, the industry can begin to build “herd immunity” against these new threats. The core message is clear: AI isn’t simply a tool to be adopted; it’s a force that demands careful navigation, ethical consideration, and a proactive, human-led approach to protect the integrity of information in an increasingly complex digital world. This is not just about protecting newsrooms; it’s about safeguarding the very foundation of an informed society.