Imagine trying to tell what’s true and what’s false in the swirling ocean of news, especially when it’s in a language like Urdu, which has its own unique complexities. That’s precisely the challenge a dedicated team of researchers tackled, creating a massive collection of Urdu news articles to help computers learn the difference between genuine information and deceptive stories. It’s like building a super-smart detective for fake news in Urdu.

This monumental effort involved gathering 14,178 news articles, sorted into fifteen categories. Think of it as a meticulously organized library, but instead of books, it’s packed with news ranging from the thrill of sports to the gravitas of politics, the advancements in technology to the glitz of showbiz, and everything in between. The team cast a wide net, collecting news published from 2017 through 2023 to capture a diverse snapshot of information. To do this, they used a precise set of search terms: not just “Pakistan Urdu news,” but also specific provinces like Punjab and Sindh, political parties like PTI and N-League, and broader topics like “Pakistan and India Urdu news” and “elections Urdu news.” This wasn’t just about quantity; it was about quality and variety, capturing the many ways fake news can spread across different topics and narratives. They also included both cross-domain and multi-domain news to broaden the linguistic range, and they analyzed the common acronyms found in Urdu news. Their goal was not only to create a robust dataset but also to test how well the latest AI models could handle this intricate task. All the articles came from trusted Pakistani Urdu news websites covering both local and international events, giving the dataset a foundation of reputable sources.

Think of the journey to create this dataset as a meticulous treasure hunt for truth and deception. It began with the recognition that reliable resources for detecting fake news in Urdu are scarce. So, armed with a comprehensive list of keywords related to Pakistan and its news, the team started sifting through countless articles. They tapped into tools like PolitiFact’s APIs, which are like special doorways that let programs access news information directly. PolitiFact is a valuable resource because it includes both real and fake news, often with expert verification. They also used the widely recognized News API, a user-friendly way to get authentic news from diverse global sources, carefully filtering for content relevant to Pakistan. And they drew on FactCheck, a nonprofit that specializes in verifying news and debunking false claims made by public figures. Beyond these big players, they scoured popular Urdu news platforms like Urdu Point, Roznama, BBC, and Pak India News, mindful that social media often acts as a breeding ground for both legitimate and misleading stories. It was a painstaking process of compiling, comparing, and cross-referencing to make sure they had a rich mix of both verified and fabricated news.

However, this wasn’t a walk in the park. The researchers faced numerous hurdles in their quest to gather this data. One significant challenge was finding truly trustworthy sources that specifically dealt with fake news. Many news websites, surprisingly, didn’t offer the convenient APIs that would allow for easy data collection. This meant that pulling information from these sites often yielded just raw HTML code, not the actual, digestible content. Some websites even restricted data scraping, forcing the team to fall back on more manual methods like opening page sources and extracting content from the underlying HTML. Another major roadblock was the scarcity of fact-checked news specifically related to Pakistan. While many international fact-checking websites existed, they often lacked a search function for Urdu news, and very few offered structured, user-friendly APIs for sourcing information. Even on the international fact-checking platforms they did find, the number of Pakistan-specific fact-checks was small. For instance, PolitiFact, a well-known and respected fact-checking source, had conducted only about 170 fact-checks directly related to Pakistan. This highlighted a significant gap in the fact-checking landscape, making the researchers’ work even more crucial.
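To make “extracting content from the underlying HTML” a little more concrete, here is a minimal sketch using only Python’s standard library. The class name `ParagraphExtractor`, the helper `extract_article_text`, and the assumption that article text sits inside `<p>` tags are illustrative choices, not details of the researchers’ actual pipeline; real news sites would likely need site-specific rules.

```python
from html.parser import HTMLParser


class ParagraphExtractor(HTMLParser):
    """Collect the text found inside <p> tags, ignoring <script>/<style>."""

    def __init__(self):
        super().__init__()
        self._in_p = False      # are we currently inside a <p>?
        self._skip = 0          # depth of <script>/<style> nesting
        self._buf = []          # text pieces of the current paragraph
        self.paragraphs = []    # finished, whitespace-normalized paragraphs

    def _flush(self):
        # Join the buffered pieces and collapse runs of whitespace.
        text = " ".join("".join(self._buf).split())
        if text:
            self.paragraphs.append(text)
        self._buf = []
        self._in_p = False

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1
        elif tag == "p":
            self._flush()       # tolerate pages that omit </p>
            self._in_p = True
        elif tag == "br" and self._in_p:
            self._buf.append(" ")

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip = max(0, self._skip - 1)
        elif tag == "p":
            self._flush()

    def handle_data(self, data):
        if self._in_p and not self._skip:
            self._buf.append(data)


def extract_article_text(html: str) -> str:
    """Return the paragraph text of an HTML page, one paragraph per line."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    parser._flush()             # keep a trailing, unclosed paragraph
    return "\n".join(parser.paragraphs)


# Hypothetical page source, standing in for a saved news page.
sample_html = """<html><head><style>p{color:red}</style></head><body>
<div class="nav">Home | Sports</div>
<p>First <b>bold</b> paragraph.</p>
<script>var x = 1;</script>
<p>  Second   paragraph  </p>
</body></html>"""

print(extract_article_text(sample_html))
```

Navigation text, scripts, and styles are dropped because they never appear inside a `<p>` element; that heuristic is what turns raw page source into something closer to readable article text.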

As they began to analyze the collected data, a clear picture emerged: an imbalance. In fake news detection, where each article is labeled either true or false, the dataset was noticeably skewed, with significantly more accurate news than misleading news. This isn’t surprising given the limited availability of reliable, fact-checked Pakistani news websites. Verified fake stories are the needle in the haystack, and for Urdu the haystack of fact-checked material is itself smaller than you’d hope. Before any of this data could be fed into smart computer models, it needed a good “spring cleaning.” This involved several crucial steps. First, they got rid of duplicate articles, because who needs to read the same story twice? Second, they purified the data, making sure the linguistic content was sound. Third, they added important “metadata”: the article’s URL, title, the date it was reviewed, the publisher’s name, and author information, all crucial details for proper analysis. Fourth, they tackled the raw, untamed text that came from various sources. This “pre-processing” meant scrubbing out internet addresses such as URLs, stripping punctuation, and then breaking the text down into individual words or “tokens.” They also removed common filler words, known as “stop-words,” and performed “stemming,” which trims words down to a shared root form (think of “running” and “runs” both becoming “run”). Finally, each news report was carefully labeled: articles judged false received the “fake” label, while anything rated “accurate,” “partially accurate,” or “half accurate” was grouped under the “real” category. This meticulous preparation left the data clean and ready for the serious work of distinguishing truth from deception.
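The cleaning steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the stop-word set and suffix list are tiny, hypothetical stand-ins (the source does not say which Urdu stop-word list or stemmer was used), and the function names are made up for this example.

```python
import re
import string

# Matches http(s) links and bare www. addresses.
URL_RE = re.compile(r"https?://\S+|www\.\S+")

# ASCII punctuation plus a few common Urdu marks (full stop, comma,
# question mark, semicolon); each one is replaced by a space.
PUNCT_TABLE = str.maketrans({ch: " " for ch in string.punctuation + "۔،؟؛"})

# ASSUMPTION: toy resources for illustration only. A real pipeline would
# use a complete Urdu stop-word list and a proper Urdu stemmer.
STOP_WORDS = {"کا", "کی", "کے", "میں", "ہے", "اور", "سے", "کو", "نے", "پر"}
SUFFIXES = ("وں", "یں")

# Verdicts as described in the text: only "fake" stays fake.
LABEL_MAP = {
    "fake": "fake",
    "accurate": "real",
    "partially accurate": "real",
    "half accurate": "real",
}


def deduplicate(articles):
    """Keep the first copy of each article, comparing whitespace-normalized text."""
    seen, unique = set(), []
    for article in articles:
        key = " ".join(article["text"].split())
        if key not in seen:
            seen.add(key)
            unique.append(article)
    return unique


def stem(token):
    """Strip one known suffix, keeping at least two characters of stem."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 2:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Remove URLs and punctuation, tokenize, drop stop-words, then stem."""
    text = URL_RE.sub(" ", text)
    text = text.translate(PUNCT_TABLE)
    tokens = [t for t in text.split() if t not in STOP_WORDS]
    return [stem(t) for t in tokens]


def to_binary_label(verdict):
    """Collapse a fact-check verdict into the two-class fake/real scheme."""
    return LABEL_MAP[verdict.strip().lower()]


articles = [
    {"text": "Breaking news: visit https://example.com now!", "verdict": "Half Accurate"},
    {"text": "Breaking news:  visit https://example.com now!", "verdict": "Half Accurate"},
]
unique = deduplicate(articles)  # the second entry differs only in spacing
print(len(unique))
print(preprocess(unique[0]["text"]))
print(to_binary_label(unique[0]["verdict"]))
```

Punctuation is handled with an explicit character table rather than a catch-all pattern so that Urdu letters and any diacritics pass through untouched.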

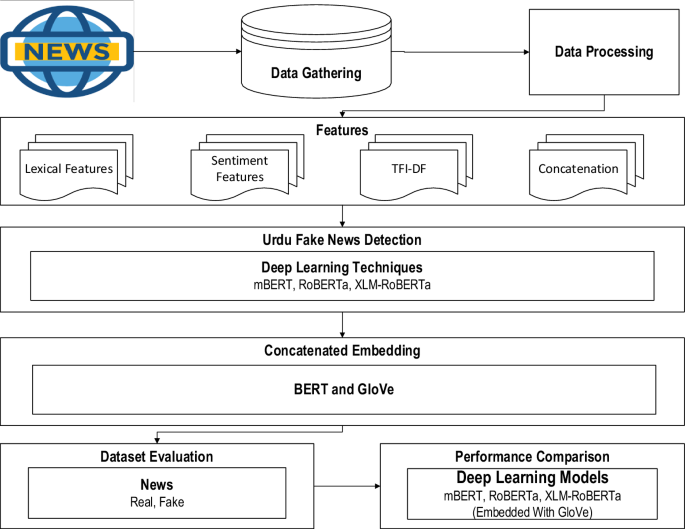

With the data polished and ready, the researchers moved on to the exciting part: making sense of it all and building smart detection systems. They employed some of the most advanced techniques in artificial intelligence, especially “deep learning” models. To make these models effective, they focused on extracting crucial “features” from the news articles. This included “lexical features” such as common words, proper nouns (named entities), word length, and the number of syllables in a word. They also considered “sentiment features,” which capture whether the text expresses positive, negative, or neutral emotion. And then there were “TF-IDF elements,” which score a term by how often it appears in an article, discounted by how many articles in the collection contain it, so that words frequent in one story but rare elsewhere count as most important. These traditional features were then combined with more cutting-edge deep learning methods that used “word embeddings.” Imagine teaching a computer to understand the meaning of words by representing them as numbers. “BERT embeddings” are powerful because they represent words in context, so “bank” in “river bank” gets a different representation from “bank” in “bank account.” “GloVe embeddings,” on the other hand, assign each word a single vector based on how often it appears near other words, capturing semantic similarities. By combining these, the models could grasp both the nuanced meaning of words and their broader relationships. The researchers then fed this rich data into deep learning models like mBERT, RoBERTa, and XLM-RoBERTa, fine-tuning them with specific settings to learn and identify patterns of fake news. This blend of meticulous data preparation and advanced AI techniques pushes the boundaries of fake news detection in Urdu, striving to make our digital information landscape a little more trustworthy.
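To see how a TF-IDF score behaves, here is a small, self-contained sketch. It uses one common textbook variant (term frequency times the natural log of the number of documents divided by the number of documents containing the term); the exact weighting used in the study is not stated in the text, and libraries often apply smoothed versions, so treat the formula and the function name `tf_idf` as assumptions.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Compute TF-IDF weights for a list of tokenized documents.

    tf(t, d)  = count of t in d / number of tokens in d
    idf(t)    = ln(N / number of documents containing t)
    """
    n_docs = len(docs)

    # Document frequency: in how many documents does each term occur?
    doc_freq = Counter()
    for doc in docs:
        doc_freq.update(set(doc))

    weights = []
    for doc in docs:
        counts = Counter(doc)
        total = len(doc)
        weights.append(
            {
                term: (count / total) * math.log(n_docs / doc_freq[term])
                for term, count in counts.items()
            }
        )
    return weights


# Hypothetical toy corpus of already-tokenized "articles".
docs = [
    ["election", "results", "announced"],
    ["election", "rumour", "spreads"],
    ["cricket", "results", "announced"],
]

for doc_weights in tf_idf(docs):
    # Show the highest-weighted term of each document.
    print(max(doc_weights, key=doc_weights.get))
```

A term that appears in every document gets a weight of zero under this variant, which is the sense in which TF-IDF highlights words that are distinctive for one article rather than merely frequent.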