Imagine you’re trying to figure out if a news story is true or false. It’s not always easy, especially when you have to consider both the words AND the pictures. This study is like a detective agency, setting up an elaborate experiment to catch fake news in action. Their goal? To build a super-smart system that can sniff out deception in Amharic news articles that come with both text and images.

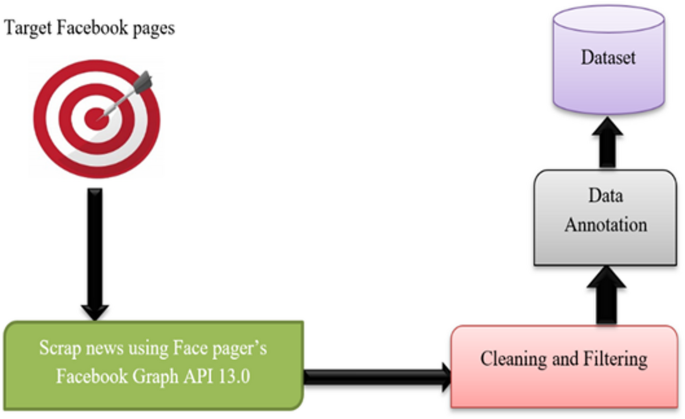

Think of it like this: they started by gathering a massive pile of real and fake news stories – over 23,000 of them! These weren’t just any stories; they came from various sources and were carefully chosen to represent the kind of news you’d find online. To label each story as real or fake, a team of linguistic experts and image analysts meticulously checked every single one. The language experts looked for tricky wording, misleading claims, and sensationalism, while the image experts checked each picture for signs of manipulation and for whether it actually matched the text. This wasn’t a solo effort; multiple experts worked together to ensure accuracy, and any disagreements were discussed until everyone agreed. This meticulous process gave them a high-quality “training set” of news articles – a kind of textbook for their fake news detector. They even used special tools to collect this data from Facebook pages, being careful to address ethical concerns like privacy and potential biases in the information they collected.

Once they had all this raw news data, it was time to clean it up. Imagine getting a dusty old book and wanting to read it; you’d wipe off the dust, right? That’s what “data preprocessing” is all about: making the data neat and tidy for the computer to understand. Since they were dealing with both text and images, they had to clean them differently. For the text, they removed anything that wasn’t Amharic – special characters, symbols, emojis, and even punctuation were shown the door because, for this particular study, they were considered “noise.” They also had to deal with a quirk of Amharic writing, where the same sound can be written with several different characters. They standardized these spellings so that the computer wouldn’t treat two spellings of one word as two different words. After all that cleaning, they split the text into individual words (a step called tokenization) and converted those words into a numerical format the computer could process. For the images, they resized everything to a standard size (like making all photos the same dimensions for an album), converted them to grayscale (removing color to simplify things), and used a technique called “histogram equalization” to make brightness and contrast consistent across all images. Finally, they zapped away any “noise” in the images, like digital artifacts or blurry spots, to ensure clarity.
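To make the cleaning steps a little more concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the study's actual pipeline: the list of same-sound character series, the helper names (`clean_text`, `build_vocab`, `encode`, `to_grayscale`, `equalize_histogram`), and the grayscale weights are all choices made for this example. Resizing and denoising are left out because they normally need an image library.

```python
import re

# --- Text side -------------------------------------------------------
# Assumed folding of same-sound characters (not taken from the study).
# Each pair is (first code point of a variant series, first code point
# of the series it is folded into); every series has seven vowel orders.
HOMOPHONE_SERIES = [
    (0x1210, 0x1200),  # ሐ-series -> ሀ-series
    (0x1280, 0x1200),  # ኀ-series -> ሀ-series
    (0x1220, 0x1230),  # ሠ-series -> ሰ-series
    (0x12D0, 0x12A0),  # ዐ-series -> አ-series
    (0x1340, 0x1338),  # ፀ-series -> ጸ-series
]
FOLD_TABLE = {
    variant + order: canonical + order
    for variant, canonical in HOMOPHONE_SERIES
    for order in range(7)
}
# Everything that is not an Ethiopic letter or whitespace counts as noise
# here: Latin text, digits, emojis, and punctuation.
NOT_ETHIOPIC_LETTER = re.compile(r"[^\u1200-\u135A\s]")


def clean_text(text):
    """Strip noise, fold same-sound characters, split into words."""
    text = NOT_ETHIOPIC_LETTER.sub(" ", text)
    return text.translate(FOLD_TABLE).split()


def build_vocab(token_lists):
    """Give every distinct word an integer id (0 = padding, 1 = unknown)."""
    vocab = {"<pad>": 0, "<unk>": 1}
    for tokens in token_lists:
        for token in tokens:
            vocab.setdefault(token, len(vocab))
    return vocab


def encode(tokens, vocab):
    """Turn a list of words into the list of ids a model can consume."""
    return [vocab.get(token, vocab["<unk>"]) for token in tokens]


# --- Image side ------------------------------------------------------
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) pixels to 0-255 gray values."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for r, g, b in row]
            for row in rgb_image]


def equalize_histogram(gray_image, levels=256):
    """Spread the gray values of one image over the full 0..levels-1 range."""
    pixels = [p for row in gray_image for p in row]
    histogram = [0] * levels
    for p in pixels:
        histogram[p] += 1
    cdf, running = [], 0
    for count in histogram:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)
    if cdf_min == len(pixels):  # a flat image has nothing to spread out
        return [row[:] for row in gray_image]
    scale = (levels - 1) / (len(pixels) - cdf_min)
    return [[round((cdf[p] - cdf_min) * scale) for p in row]
            for row in gray_image]
```

With this sketch, `clean_text("ሠላም!")` and `clean_text("ሰላም")` produce the same single token, which is the whole point of standardizing spellings: one word, one id.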

Now, for the really clever part: how do you combine the information from both the text and the images to make a single, powerful decision about whether a news story is fake? This is where “multimodal fusion” comes in. Think of it like a jury where each juror has a different area of expertise. One juror focuses on the written evidence, another on the visual evidence. This study used a strategy called “late fusion.” This means they first trained separate “detectives” – one specifically for text and one specifically for images – to make their own independent judgments. Only after these individual judgments were made did they combine the results to arrive at a final verdict. This approach is like getting separate opinions from different experts and then weighing those opinions together, rather than mixing all the raw evidence together from the start (an approach known as “early fusion”).
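Here is a minimal sketch of what "weighing those opinions together" could look like. The description above does not say how the two judgments were actually combined, so the weighted average, the function name `late_fusion`, the 50/50 default weight, and the 0.5 threshold are all assumptions made for illustration.

```python
def late_fusion(p_fake_text, p_fake_image, text_weight=0.5, threshold=0.5):
    """Combine two independent "probability of fake" scores into one verdict.

    Assumed rule: a weighted average of the two scores, compared with a
    threshold. Each score comes from a model that saw only its own modality.
    """
    if not 0.0 <= text_weight <= 1.0:
        raise ValueError("text_weight must be between 0 and 1")
    combined = text_weight * p_fake_text + (1.0 - text_weight) * p_fake_image
    label = "fake" if combined >= threshold else "real"
    return combined, label


# The text detective is fairly sure the story is fake, the image detective
# leans towards real; with equal weights the text opinion wins here.
score, verdict = late_fusion(p_fake_text=0.9, p_fake_image=0.3)
```

Raising or lowering `text_weight` is the code equivalent of trusting one juror more than the other.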

To build these “detectives,” they used powerful artificial intelligence techniques called “deep learning.” For the text side, they explored a few methods. One was Convolutional Neural Networks (CNNs), often used for image recognition but also surprisingly good at spotting patterns in text, almost like finding specific “clues” in short runs of words. Another was Bidirectional Long Short-Term Memory (BiLSTM) networks, which read a sentence in both directions, front to back and back to front, so they can work out how the beginning relates to the end. They even added an “attention mechanism” to the BiLSTM, which is like giving the model the ability to highlight the most important words in a sentence, helping it focus on what truly matters. Finally, they tried a hybrid CNN-BiLSTM model, which is like combining the best of both worlds: CNNs to pick up quick patterns in the text, and BiLSTMs to understand the deeper meaning and context.
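The "highlighting" idea behind attention fits in a few lines of plain Python. This is a sketch of one simple form of attention (dot-product scoring followed by a softmax); the description above does not say which form the study used, and the names `attention_pool` and `context_vector` are made up for this example. In a real model the per-word vectors would come out of the BiLSTM and the context vector would be learned during training.

```python
import math


def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() from overflowing
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, context_vector):
    """Summarize a sentence as a weighted average of its word vectors.

    hidden_states: one vector per word (stand-ins for BiLSTM outputs).
    context_vector: what the model is "looking for" (learned in practice).
    """
    scores = [sum(h * c for h, c in zip(state, context_vector))
              for state in hidden_states]
    weights = softmax(scores)
    size = len(hidden_states[0])
    summary = [sum(w * state[d] for w, state in zip(weights, hidden_states))
               for d in range(size)]
    return weights, summary


# Three toy word vectors; the second one lines up with the context vector,
# so it receives the largest attention weight.
word_vectors = [[0.1, 0.0], [2.0, 0.5], [0.0, 0.3]]
weights, sentence_vector = attention_pool(word_vectors, context_vector=[1.0, 0.0])
```

The weights are the highlighter: words with larger weights contribute more to the sentence vector that the classifier finally sees.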

For the image side, they primarily relied on Convolutional Neural Networks (CNNs). Imagine a CNN as a skilled art appraiser who knows exactly what to look for in a painting. The CNN slides small “filters” across the image, one little patch at a time, to detect features like edges, corners, and textures. It does this in layers, with each layer building on the one before it to pick out more complex patterns. It’s like the appraiser looking at the brushstrokes, then the composition, then the overall style. After identifying these features, it “flattens” them into a single list of numbers and then uses these learned clues to make a judgment: is this image part of a real news story or a fake one? This entire experimental setup, with its careful data collection, meticulous cleaning, and sophisticated deep learning models, is designed to create a robust system capable of fighting the tide of fake news.
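For the curious, the filter-then-flatten routine of a CNN can be sketched in plain Python. This toy version applies a single hand-written edge filter to a tiny grayscale image; a real CNN learns many filters from the training data and stacks several layers. The function names and the example filter are illustrative assumptions, not details from the study.

```python
def conv2d(image, kernel):
    """Slide a small filter over a grayscale image (no padding, stride 1)."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(k_rows) for dj in range(k_cols))
             for j in range(out_cols)]
            for i in range(out_rows)]


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, value) for value in row] for row in feature_map]


def flatten(feature_map):
    """Unroll a 2D feature map into one list for the final classifier."""
    return [value for row in feature_map for value in row]


def extract_features(image, kernel):
    return flatten(relu(conv2d(image, kernel)))


# A 4x4 image that is dark on the left and bright on the right, and a
# filter that responds where brightness increases from left to right.
image = [[0, 0, 1, 1] for _ in range(4)]
vertical_edge_filter = [[-1, 1],
                        [-1, 1]]
features = extract_features(image, vertical_edge_filter)
# features == [0, 2, 0, 0, 2, 0, 0, 2, 0]: the filter fires only along the edge
```

The non-zero values line up with the column where dark meets bright, which is exactly the kind of "clue" the later layers combine into bigger patterns.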