The Echo Chamber of Deception: Unmasking the Stories Behind the “Fake News”

In our hyper-connected world, where information spreads almost instantly, separating fact from fiction has become an increasingly complex and critical task. The snippets above are more than a list of debunked claims; they are windows into the human tendency to believe, to react, and sometimes to be misled, especially when powerful narratives are at play. These fabricated stories, often born of political fervor or opportunistic manipulation, do more than spread misinformation: they chip away at trust, confuse public discourse, and can even influence democratic processes. Let’s dig deeper into these specific instances, not just to understand what was false, but to grasp the human motivations and societal implications behind such deceptions.

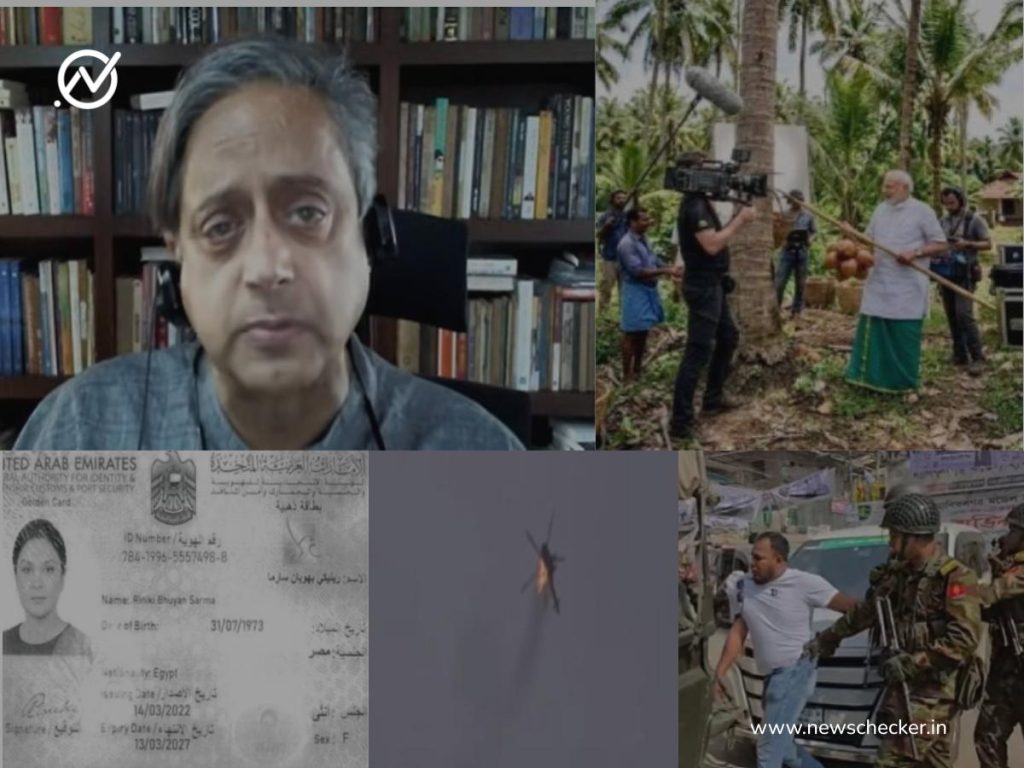

Imagine the charged atmosphere leading up to an election in a vibrant, populous state like West Bengal. Political tensions are high, and every piece of information, real or imagined, can sway opinions. In this environment, two videos began circulating and quickly gained traction. The clips, which appeared to show military personnel in action, were presented as evidence of an “army crackdown” in West Bengal. The implicit message was clear: the situation is escalating, with hints of unrest or an authoritarian response, and the framing was designed to sow fear or distrust among the populace. For a citizen preparing to vote, such images could be deeply unsettling, shaping their perception of the political landscape and of safety within their community. However, a simple act of verification revealed that neither video had anything to do with West Bengal or India. Both showed unrelated incidents in Bangladesh, repurposed and mislabeled to create a false narrative. This wasn’t merely a mistake; it was deliberate misdirection that played on anxieties and used unrelated visuals to craft a potent, albeit untrue, political message during a sensitive electoral period. The human element here is crucial: the fear, the desire for information (even if unverified), and the potential for a small, deceptive act to have a disproportionate impact on public sentiment.

Moving from anxieties about public safety to the intrigue of political scandal, we encounter allegations against Riniki Bhuyan Sarma, the wife of Assam’s Chief Minister. Congress leader Pawan Khera publicly accused her of possessing multiple passports, specifically highlighting a UAE passport. To substantiate this serious claim, documents were presented, one of which purported to be official proof that she held the foreign passport. Such an allegation, if true, could have significant implications for the Chief Minister’s reputation and could raise questions about national loyalty. In politics, narratives of impropriety can stick even when unsubstantiated, and these accusations quickly gained traction. However, fact-checkers found that the image presented as definitive proof was edited: a manipulated piece of evidence made to look authentic but lacking any genuine basis. This isn’t just about a politician making a mistake; it’s about the weaponization of visual information. In an age of sophisticated photo and video editing software, the line between reality and fabrication can become very blurry. The incident underscores the importance of scrutinizing claims, especially those accompanied by seemingly concrete “evidence,” because the desire to expose wrongdoing can lead people to accept forged proof. It reminds us that even seasoned politicians can fall prey to deceptive narratives, or actively participate in creating them.

Then there’s the curious case of the doctored video featuring prominent Indian politician Shashi Tharoor. The viral clip purported to show him sharply criticizing Pakistan’s alleged role in mediating a ceasefire between the US and Iran. In it, he appeared to lambast the Indian government and label the development a “massive strategic failure” for India. Tharoor is known for articulate, often nuanced, and globally informed perspectives. A statement this stark from him on such a sensitive international issue would capture headlines and spark debate, and could cast the Indian government’s diplomacy in a negative light. However, the entire premise rested on manipulation. The video was found to be edited, which in cases like this can mean that segments were taken out of context, spliced together to create a new narrative, or digitally altered to misrepresent what the speaker actually said. Whether such a clip is a simple splice (a “shallowfake”) or an AI-altered “deepfake,” the result is the same: words the speaker never delivered in that form. The human impact is multifaceted: such a clip could damage a politician’s credibility, sow confusion about India’s foreign policy, and even inflame public opinion against a neighboring country. It highlights the vulnerability of public figures to digital manipulation and the ease with which their carefully chosen words can be distorted for political gain or malicious intent.

Even the most iconic figures are not immune to the digital age’s capacity for fabrication. A photograph circulating online depicted Prime Minister Narendra Modi in what looked like a posed “photoshoot” during his visit to Kerala for election campaigning. The image had a professional sheen, suggesting a planned media moment aimed at cultivating a specific public image ahead of crucial state elections. In the high-stakes world of political campaigning, every visual is carefully curated to resonate with voters. This photograph could have contributed to the image building of the Prime Minister and the NDA, the alliance his party leads. Yet, upon investigation, the image was revealed to be entirely artificial: a product of Artificial Intelligence. The incident showcases the cutting edge of digital deception. AI-generated images are becoming increasingly sophisticated, making it very difficult for the untrained eye to distinguish a real photograph from a computer-generated one. The human desire to see, to connect with, and to be inspired by political leaders makes us susceptible to visual cues. When these cues are manufactured by AI, it raises profound questions about the authenticity of public personas and the blurring line between reality and simulation in political messaging. It forces us to confront a future where what we see might not be what is real.

Finally, we turn to a profoundly dangerous form of misinformation: the fabrication of conflict during times of real international tension. A video circulated widely, claiming to show a US Black Hawk helicopter being struck by an Iranian missile amid an ongoing and volatile conflict. In moments of heightened geopolitical instability, such videos can act as incendiary devices, escalating fears, fueling jingoism, and distorting public perception of military capabilities and the nature of warfare. The image of a powerful military asset being brought down is inherently dramatic and can evoke strong emotional responses. Viewers trying to follow the fast-moving events of a conflict might readily accept such a captivating, albeit terrifying, visual as fact. However, this viral footage, like many others of its kind, was found to be old and completely unrelated to the recent developments in West Asia. It was recycled media, opportunistically repurposed to create a false sense of escalation and chaos. This type of misinformation is particularly insidious because it preys on real-world anxieties and on the human tendency to seek immediate information, even if unverified, during crises. It can contribute to an atmosphere of fear and distrust and mislead people about the true scope and nature of international conflicts. It reminds us that in the fog of war and misinformation, the first casualty is often the truth, and our collective responsibility to verify information becomes a moral imperative.

In essence, these instances are not isolated anomalies but symptoms of a larger societal challenge. They highlight the intricate dance between human emotions (fear, hope, anger, curiosity) and the powerful tools of digital communication. Each fabricated story, whether an election-time scare, a political smear, a distorted quote, an AI-generated image, or a recycled war video, taps into our vulnerabilities and our desire for immediate information. Understanding these deceptions isn’t just about identifying what’s false; it’s about recognizing the human impulse behind their creation and dissemination and, crucially, strengthening our collective resolve to demand and seek out the truth in an increasingly complex and often misleading digital landscape. The fight against misinformation is, at its core, a fight for informed citizenship and a resilient public discourse.