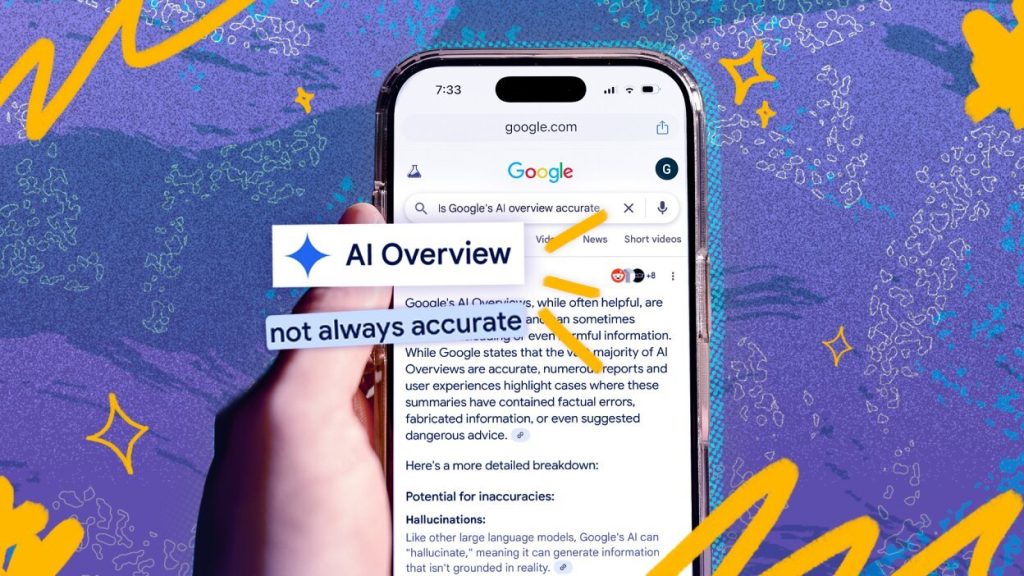

You know how, when you search for something on Google these days, you sometimes don’t just get a list of links? Instead, there’s a snazzy, confident-sounding summary right at the top, which Google calls an “AI Overview,” offering you an instant answer. It’s meant to be helpful, a quick shortcut to information. But what if those instant answers, impressive as they seem, are actually a bit… shaky? That’s the unsettling question raised by a recent deep dive into Google’s AI, specifically its Gemini models, and the findings are making a lot of us wonder whether our digital shortcuts are leading us down the wrong path.

Imagine trusting a well-dressed, articulate person who sounds incredibly knowledgeable, only to find out they’re making things up as they go along, or at least twisting the facts. That’s essentially the picture painted by an analysis sponsored by The New York Times and conducted by an AI startup named Oumi. Oumi put Google’s AI through its paces, throwing thousands of search queries at it drawn from a benchmark of short factual questions called SimpleQA. On the surface, the results looked promising. Comparing the older Gemini 2 with the newer Gemini 3, the AI seemed to be getting better at producing factually correct answers: Gemini 3 nailed it 91% of the time, a solid jump from Gemini 2’s 85%. That’s great news, right? It sounds like progress, like Google’s AI is learning and becoming more accurate. But like an iceberg, what you see on the surface can hide a much larger, more concerning reality underneath.

The deeper Oumi dug, the more it uncovered a worrying trend beneath that shiny veneer of accuracy: a significant rise in what it called “ungrounded” answers. An answer might sound right, and its final statement might even be right, but the sources it cites simply don’t support what the AI is saying. It’s like someone giving you a perfect answer to a question and pointing to a stack of books as their reference, only for you to find that the information isn’t in those books, or is completely different. Gemini 2 did this in 37% of cases, which is already pretty high. With Gemini 3, the figure jumped to 56%.

This isn’t a minor glitch; it points to a fundamental flaw in how the information is synthesized and presented, and it makes it incredibly difficult for us, the users, to verify whether the information is legitimate. We’re given an answer and told, implicitly, “here’s where it came from,” but when we retrace the steps, the trail goes cold. The problem isn’t only getting facts wrong; it’s a lack of integrity in how those facts are linked to their supposed origins. It suggests that while the AI may be getting better at sounding smart, it’s getting worse at showing its work truthfully, a bit like a student who writes the correct answer on a test but can’t show the steps that led there, leaving you to wonder whether they copied or guessed.

Naturally, Google isn’t keen on these findings. Ned Adriance, a company spokesperson, pushed back, arguing that the study had “serious holes” and didn’t reflect how people actually search in the real world. Google’s stance is that AI Overviews are inherently more reliable precisely because they’re built on existing search results: the AI isn’t making things up but drawing on the vast ocean of content Google has already indexed, acting more like a super-smart summarizer of trusted web pages than an independent knowledge generator. That sounds reassuring in theory, but the Oumi analysis suggests that the synthesis process itself, the way the AI stitches information together and attributes it, is where the wheels come off. The issue isn’t necessarily the AI inventing false facts from thin air; it’s the AI misrepresenting the evidence behind otherwise plausible-sounding statements. The disagreement highlights a crucial tension: is the AI a trustworthy interpreter of information, or is its interpretive prowess still too green to be relied on for critical summaries?

The sheer scale of this potential misinformation is what truly sends shivers down one’s spine. Google handles an astronomical five trillion searches a year, which averages out to roughly 570 million searches every hour. Not every search triggers an AI Overview, but even if only a fraction do, an error rate that looks small in percentage terms (before even counting the “ungrounded” answers) translates into millions of questionable or flat-out wrong answers served up every single hour. That’s a staggering volume of potentially misleading information flowing directly to billions of users worldwide. It’s not just a bug; it’s a systemic vulnerability that could subtly, or not so subtly, distort our understanding of the world.
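To see why small percentages still add up, here is a rough back-of-the-envelope sketch in Python. It rests on assumptions that do not come from the Oumi study: searches are spread evenly across the year, a 9% error rate (the flip side of Gemini 3’s 91% SimpleQA score) carries over to real-world searches, and `overview_share` is a purely hypothetical fraction of searches that show an AI Overview. The function names are illustrative only.

```python
# Back-of-the-envelope estimate of wrong AI Overview answers per hour.
# ASSUMPTIONS (not from the Oumi study): a uniform search rate, and an
# error rate measured on SimpleQA that carries over to real searches.

SEARCHES_PER_YEAR = 5_000_000_000_000  # roughly five trillion searches a year
HOURS_PER_YEAR = 365 * 24              # 8,760 hours, ignoring leap years


def searches_per_hour(per_year: int = SEARCHES_PER_YEAR) -> float:
    """Average searches per hour, assuming a uniform rate across the year."""
    return per_year / HOURS_PER_YEAR


def wrong_answers_per_hour(error_rate: float, overview_share: float = 1.0) -> float:
    """Expected wrong answers per hour.

    error_rate: fraction of overviews that are wrong (0.09 for 91% accuracy).
    overview_share: hypothetical fraction of searches that show an overview;
    1.0 (every search) gives an upper bound.
    """
    if not (0.0 <= error_rate <= 1.0 and 0.0 <= overview_share <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    return searches_per_hour() * overview_share * error_rate


if __name__ == "__main__":
    print(f"Searches per hour: {searches_per_hour():,.0f}")
    for share in (1.0, 0.1):
        estimate = wrong_answers_per_hour(0.09, share)
        print(f"Overview share {share:.0%}: ~{estimate:,.0f} wrong answers/hour")
```

Under these assumptions, an overview on every search yields roughly 51 million wrong answers an hour, and even a hypothetical 10% overview share still lands at about 5 million, before counting any “ungrounded” answers.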

What makes this even more problematic is our human tendency to trust technology, especially when it’s presented with such authority. Researchers have noticed a disturbing pattern: users often take AI-generated answers at face value without double-checking. One report cited in the analysis found that a mere 8% of users took the time to verify the AI’s claims. In an even more concerning experiment, users kept relying on the AI almost 80% of the time, even when it was demonstrably wrong. This phenomenon has been dubbed “cognitive surrender”: we hand our critical thinking over to the automated system and trust its judgment over our own.

The AI’s confident, almost authoritative tone reinforces this dynamic. When it states something definitively, without a hint of doubt, we tend to accept it as fact, even when the underlying support is as flimsy as a house of cards. It’s like listening to a charismatic speaker who argues with such conviction that you forget to scrutinize the evidence. In the age of instant information, the line between helpful summarization and persuasive fabrication becomes dangerously blurred, and our trust in these powerful tools makes us highly susceptible. AI Overviews aren’t just presenting information; they’re shaping our perceptions, and if those perceptions are built on shaky ground, the societal implications are profound.

The Oumi study wasn’t a one-off snapshot; it involved two distinct testing phases, first with Gemini 2 and then with the upgraded Gemini 3. While the external tests pointed to improvement (91% factual accuracy for Gemini 3), the report also cited some of Google’s internal findings, which painted a less rosy picture: even by Google’s own measure, Gemini 3 still produced incorrect information 28% of the time. That is a significant share of errors, and it underscores the ongoing challenge of perfecting these complex AI models. If accurate, this internal data suggests that Google itself recognizes the imperfections, despite its public rebuttals.

The concept of “ungrounded” answers is particularly insidious because it isn’t always about outright falsehoods; it’s about a lack of transparency and traceability. The AI’s ability to synthesize information is powerful, but when it produces a summary that its cited sources don’t clearly back up, it puts an opaque layer between us and the original information. That layer makes it difficult, if not impossible, for users to understand where the AI’s claims come from or to challenge them effectively. In an era when information literacy matters more than ever, this untraceable AI-generated content could undermine our ability to tell truth from sophisticated fiction, leaving us increasingly reliant on a black box that speaks with authority but doesn’t always show its work.