The 2026 West Bengal Assembly elections were far more than a typical political showdown of rallies and policy debates. They were a full-blown digital battlefield, where misinformation wasn’t a minor annoyance but a key player in shaping what people thought and felt. It was like a massive online “game of telephone” in which the original message gets twisted beyond recognition, except that the stakes here were real: who would govern West Bengal. Our investigation, based on a review of a large volume of posts on X (formerly Twitter), showed that this wasn’t a matter of accidental slips or innocent mistakes. It was a carefully orchestrated strategy. We saw elaborate networks using every digital trick in the book to invent stories, distort facts, and stir up division along existing social and political fault lines. The sheer size, speed, and sophistication of these campaigns painted a clear picture: a powerful, deeply embedded misinformation machine was at work, one capable of swaying elections.

To really get a grip on how this machine worked, we spent the entire election cycle acting like digital detectives. We zeroed in on viral posts and trending hashtags, tracking how often each was shared, reposted, and commented on. We categorized the content by type (videos, pictures, and text claims) to see what kinds of misinformation were out there. A big part of our job was also to see how these false stories were bundled together and then hammered home repeatedly to make them seem legitimate, like a broken record playing a lie until it sounds true. We also didn’t take anything at face value. We cross-checked claims against trustworthy sources and used reverse image and video searches to catch anything faked, misleading, or wrongly attributed. This approach allowed us to do more than spot individual lies; it helped us map the entire highway system through which these false narratives traveled and gained traction.

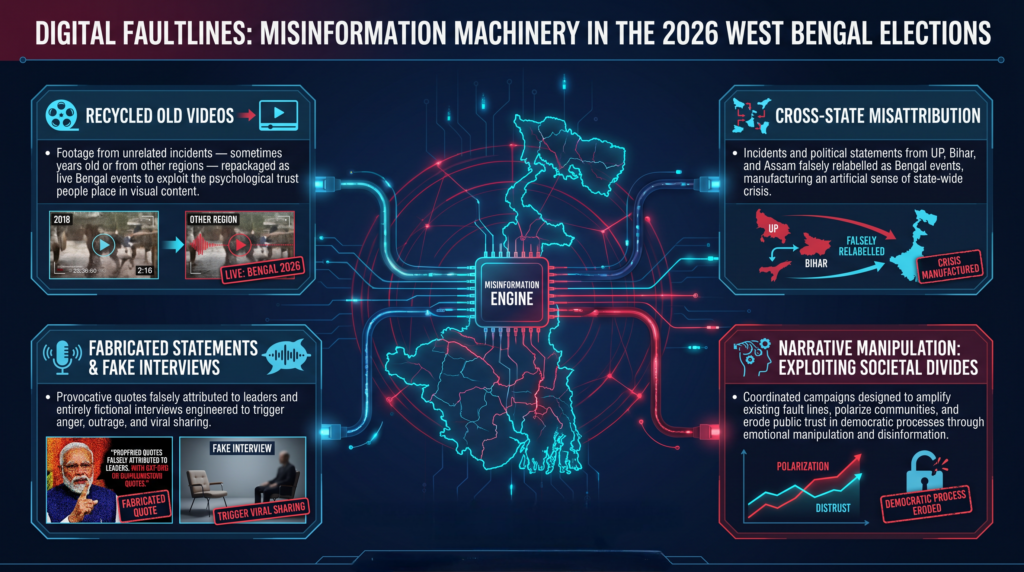

One of the most common and effective tricks we saw was something we called “manufacturing a false present”: digging up old videos and passing them off as new. Imagine seeing a news report about something happening right now, only to find out the video is actually from years ago, or even from a different country entirely. That’s exactly what happened here. Footage of communal clashes from another state, for example, would be slapped with a new caption claiming it showed current violence in Bengal. Similarly, old clips of protests, accidents, or police actions from completely unrelated situations were reused to suggest that West Bengal was in chaos or that its government was failing. This tactic was powerful because visuals hit hard. People naturally tend to believe what they see, especially if it backs up what they already suspect. Stripped of their original context, these misleading posts felt believable to many.

Right alongside this tactic was what we called “cross-state misattribution” – basically, playing a game of “pin the tail on the donkey,” but with states. Events that happened in other parts of India were deliberately presented as taking place in West Bengal. Think of a political leader saying something controversial in Uttar Pradesh, only for their words to be edited or spun to look like they were directly speaking to voters in Bengal. Incidents from states like Bihar or Assam would suddenly appear in people’s feeds, wrongly labeled as happening within Bengal’s borders. This wasn’t just sloppy journalism; it was a clever strategy. It drummed up an artificial sense of crisis, injected politically charged narratives into the state’s discussions, and took advantage of the fact that most people scrolling through their phones don’t have the time or tools to instantly verify where digital content originally came from. Over time, this constant barrage of wrongly attributed information created a twisted view of reality, leaving voters to navigate an inaccurate portrayal of their state’s political and social landscape.

Beyond all the visual trickery, another potent weapon in this digital misinformation arsenal was the outright fabrication of statements and what we called “narrative engineering.” We saw quotes falsely attributed to prominent political leaders and public figures, often designed to be shockingly provocative or inflammatory. In some extreme cases, entirely fake interviews were created and spread far and wide. The goal here wasn’t just to misinform people; it was to ignite strong emotions like anger, outrage, or fear, knowing that these feelings would prompt users to share the content even further. By putting divisive words into the mouths of influential people, these fabricated statements aimed to chip away at public trust and deepen the divides already present in society.

This whole process wasn’t just about individual lies; it was like a master plan where every piece of misinformation, no matter how small, was interconnected. Coordinated campaigns made sure that different types of misleading content – videos, text posts, fake quotes, and even cherry-picked statistics – all worked together to bolster one central, overarching narrative. For instance, a false claim about escalating communal tension might be supported simultaneously by an old video presented as current, a fabricated statement attributed to a respected leader, and some misleading data points. Together, these elements crafted a seemingly consistent and convincing story, making it incredibly difficult for everyday users to tell fact from fiction.

Perhaps the most troubling consequence of this misinformation ecosystem was how it fanned the flames of communal division. A huge chunk of the misleading content was specifically designed to target religious and ethnic identities, often painting one community as the innocent victim and another as the aggressive perpetrator. While these kinds of narratives aren’t new to Indian politics, the sheer scale and speed at which they spread during the 2026 elections marked a worrying escalation. Social media platforms acted as gigantic loudspeakers, blasting these messages to enormous audiences in no time, which significantly raised the risk of real-world tensions. The use of highly emotional language, paired with provocative images, made these messages incredibly shareable, and many users, often without realizing it, became unwitting participants in this cycle of misinformation by simply forwarding unverified information.

In conclusion, the 2026 West Bengal Assembly elections serve as a stark reminder of how sophisticated and organized misinformation campaigns have become in India’s electoral landscape. The use of AI-manipulated content, recycled old visuals, fabricated statements, and geographically misattributed events shows that a strategic, adaptable misinformation machine is at work. As digital platforms increasingly become the main stage for political conversation, tackling misinformation will remain crucial. It’s not just about winning an election; it’s about protecting the integrity of our democratic process.