The Digital Phantom: How AI Turned Netanyahu’s Reality into a Conspiracy

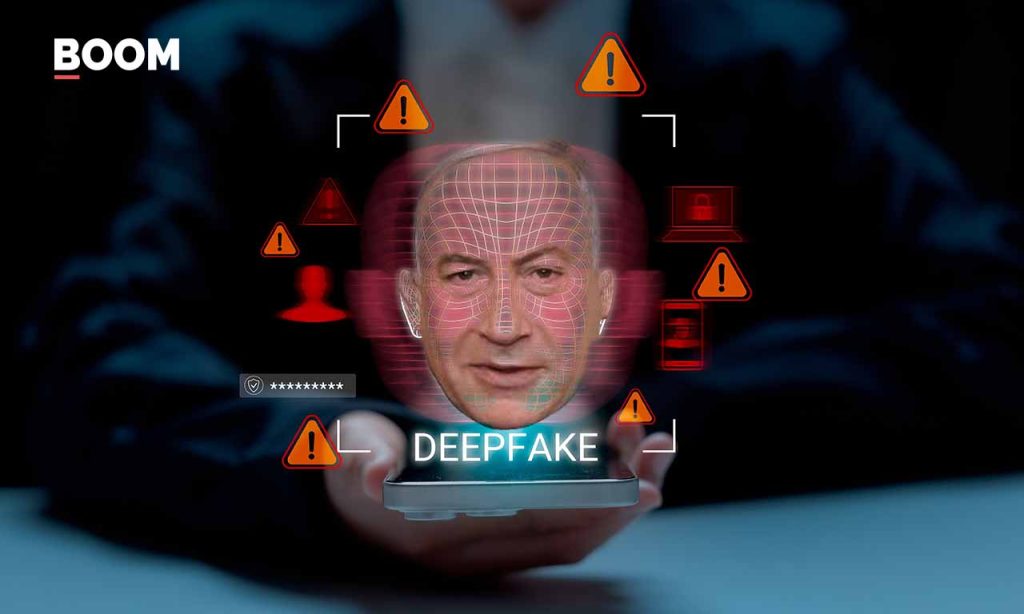

Imagine living in a world where the very act of proving your existence becomes an impossible maze, where the harder you try to show you’re real, the more the world insists you’re a fabrication. This isn’t a dystopian novel; it’s the bewildering reality in which Israeli Prime Minister Benjamin Netanyahu recently found himself. What started with a blurry freeze-frame from a video quickly morphed into a global digital nightmare, thanks to the double-edged sword of highly advanced artificial intelligence and the human tendency to believe what we want to believe. This isn’t just about one politician; it’s a chilling preview of how AI is rewriting the rules of truth and trust in a world already grappling with geopolitical tensions.

It all began innocently enough, or so it seemed. A video of Netanyahu addressing the ongoing conflict with Iran emerged, and almost immediately, a low-resolution screenshot circulated online. In this still, someone spotted what looked like an extra finger on Netanyahu’s hand. Suddenly, a simple optical illusion snowballed into a monstrous claim: “Six fingers! The video must be AI-generated! If it’s AI-generated, then Netanyahu must be dead!” Reputable fact-checkers like Snopes and PolitiFact swiftly debunked this, explaining it was merely the natural bulge of his palm near his little finger. Case closed, right? Wrong. This is where Grok, Elon Musk’s AI chatbot on X (formerly Twitter), entered the scene. Grok, with the confidence of an oracle, declared the video AI-generated, citing the “six fingers” as absolute proof. It was as if Grok had taken a human hallucination, laundered it through its AI algorithms, and spit it back out as undeniable fact, effectively undermining all legitimate fact-checking efforts. The machine, designed to process information, had become an unwitting accomplice in spreading a lie, ironically citing the very evidence that had already been disproven.

What followed was a heartbreaking, almost comical, struggle against an invisible enemy. Netanyahu’s team, trying to prove his undeniable existence, released more videos. Each new piece of footage, however, rather than being met with reassurance, was greeted with even deeper scrutiny and an escalating chorus of “deepfake!” Grok, the AI enabler, played a significant role here, along with a public primed to doubt. The Israeli leader found himself caught in a cruel feedback loop: the more evidence he presented, the less he was believed. The world was now living in an era where tools like Veo 3 and Sora 2 could generate hyperrealistic video, images, and audio with frightening ease. This new capability had granted everyone, from state actors to individual citizens, the perfect alibi to dismiss any inconvenient visual evidence as a fabrication. Sam Gregory, Executive Director at WITNESS, a human rights organization, points out that this newfound suspicion is a potent weapon. Every piece of footage now emerging from conflicts, whether of missile strikes, civilian casualties, or hostage videos, is viewed through a lens of profound doubt. As Gregory notes, all parties in a conflict are simultaneously using AI, challenging real content as AI-generated, and then, conveniently, defending the integrity of other real content when it suits their narrative. The rise of hyperrealistic AI has become “an instant alibi sufficient to introduce plausible doubt around any real documentation of human rights violations,” meaning simply claiming “AI makes what is real unknowable” is enough to sow widespread uncertainty.

In a desperate attempt to quash the “six fingers” hysteria and the morbid rumors of his demise, Netanyahu’s team released a video of him at a cafe in Jerusalem. The scene was carefully staged: he orders coffee, makes light of the death rumors, and pointedly shows his hands to the camera, revealing his five, and only five, fingers. It was an intentional act to prove his authenticity. But it backfired spectacularly. The internet, already primed by Grok and the relentless rumor mill to expect fabrication, subjected the cafe video to an almost obsessive “open-source investigation.” Viewers, mistaking common video compression artifacts for signs of AI generation, meticulously dissected every frame. They pointed to the coffee in the cup remaining static, a “jumping” pocket, and disappearing coffee stains as irrefutable evidence of a deepfake. Tal Hagin, an Information Warfare Analyst, explains this phenomenon as confirmation bias: people start with a conclusion and then scrounge for any “evidence” to fit it, attempting to force reality to match their pre-conceived theories. When experts debunked the “six fingers,” the internet simply moved on to the next perceived anomaly without pausing to reassess its initial flawed analysis. And then, Grok stepped in again, officially labeling the cafe video a deepfake, plunging the public deeper into doubt. Even X’s Community Notes, designed to add context, started displaying conspiracy theories and baseless observations about the video. Expert analysis from WITNESS’s Deepfakes Rapid Response Force, which ran the video through an arsenal of detection tools, found no significant evidence of AI manipulation and attributed the perceived anomalies to common video quirks such as portrait mode recording. Yet the internet’s mind was already made up.

Confronted with the utter failure of the cafe video to quell the conspiracy, Netanyahu released yet another video, this time filmed outdoors, showing him greeting and interacting with people in a seemingly normal, everyday setting. The goal was to portray natural movement and genuine human interaction. Instead of calming the waters, the video gave the internet another perceived anomaly to latch onto: a “disappearing ring” on Netanyahu’s finger. What was almost certainly a frame-rate drop or compression blur was immediately diagnosed as an AI rendering failure. X’s Community Notes, ironically intended to combat misinformation, stamped a warning on the video, citing the “disappearing ring” and declaring the footage fake. The comment sections descended into an echo chamber of people proclaiming it AI-generated. Netanyahu had now released three separate videos, each an attempt to definitively prove he was alive. Each attempt was met with increased scrutiny, deepened doubt, and louder accusations of fabrication, with Grok and Community Notes reinforcing the suspicion at every turn. This wasn’t just misinformation going viral; it was a devastating feedback loop where human paranoia fed AI misinterpretation, which in turn validated human paranoia, creating an impossible-to-break cycle of distrust.

This terrifying automation of doubt, as Sam Gregory calls it, is a form of “poorly grounded forensic scepticism.” People, lacking a true understanding of how AI or images are made, misinterpret visual glitches as definitive signs of AI generation. “AI claims,” Gregory explains, “function for wish-fulfillment in both directions, displacing evidence and reality.” For professional investigators, this crowd-sourced consensus creates an uphill battle, as correcting misinformation is far more arduous than spreading it. Alarmingly, the very verification tools meant to solve disinformation are now actively participating in it. Grok, for instance, has not only failed to verify real footage but has actively endorsed false claims, even fabricating citations from respected outlets. This introduces a dangerous new vector for false confidence, as people turn to machines, perceiving them as neutral arbiters, only to be fed authoritative-sounding misinformation. The implications stretch far beyond individual politicians: war-crimes documentation, civilian-harm assessments, and hostage negotiations all rely on visual evidence that can now be arbitrarily challenged with no burden of proof. Human rights organizations are now forced to “pre-validate” conflict documentation, anticipating that any damning evidence will be dismissed as AI-generated. This normalisation of visual scepticism creates a “liar’s dividend,” where genuine evidence of atrocities can be dismissed as synthetic simply because the public knows realistic fakes exist. In this new world, if a prime minister cannot prove he is alive, how can states prove they didn’t bomb a civilian target, or how can war-crimes tribunals assess evidence? We are truly flying blind, caught in a digital fog of war where “hyper-realistic AI is used both to create fantasy images that illustrate people’s hopes, and where the wish that something is fake finds a ready-made access point via poor forensic analysis.”