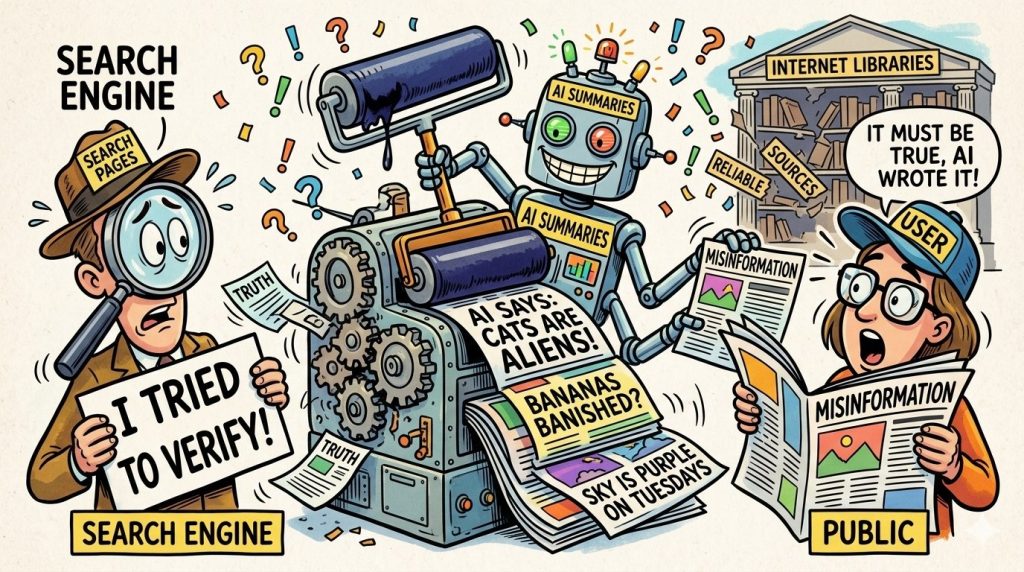

A recent study, commissioned by The New York Times and conducted by AI startup Oumi, sheds light on the potential for widespread misinformation stemming from Google Search’s AI-generated summaries. The study found these summaries to be accurate around 91% of the time, which on the surface sounds impressive. However, Google processes about 5 trillion search queries annually, or roughly 570 million an hour. If every one of those queries returned an AI Overview, the remaining 9% error rate would translate into tens of millions of instances every hour where users could be receiving incorrect or misleading information. This isn’t just a minor glitch; it raises serious questions about the scale of misinformation Google might inadvertently be spreading. For publishers, who have been grappling with the seismic shifts brought on by AI while battling for attention, the finding is a double-edged sword: it validates their concerns, yet deepens anxieties about the future of truth in the digital age.
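The arithmetic behind the "tens of millions every hour" figure can be sketched in a few lines. This is a back-of-the-envelope estimate, not a number from the study: the query volume and accuracy rate come from the article, while `OVERVIEW_SHARE` (the fraction of searches that actually display an AI Overview) is an assumption, set here to 1.0 as an upper bound.

```python
# Rough estimate of inaccurate AI summaries served per hour.
QUERIES_PER_YEAR = 5_000_000_000_000  # 5 trillion searches a year (from the article)
ACCURACY = 0.91                       # accuracy rate reported by the study
HOURS_PER_YEAR = 365 * 24

# Assumption, not from the article: share of searches that show an AI Overview.
# 1.0 is an upper bound; the real share is lower, so the true figure is smaller.
OVERVIEW_SHARE = 1.0


def inaccurate_per_hour(queries_per_year, accuracy, overview_share=1.0):
    """Return the estimated number of inaccurate summaries served per hour."""
    queries_per_hour = queries_per_year / HOURS_PER_YEAR
    return queries_per_hour * overview_share * (1 - accuracy)


estimate = inaccurate_per_hour(QUERIES_PER_YEAR, ACCURACY, OVERVIEW_SHARE)
print(f"Estimated inaccurate summaries per hour: {estimate:,.0f}")
```

With these inputs the estimate lands at roughly 51 million an hour. Lowering `OVERVIEW_SHARE` scales the result proportionally, so even if only a fifth of searches showed an overview, the figure would still be around 10 million an hour.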

The report highlights some glaring examples of AI summaries “delivering a dud.” Take, for instance, a search for “Dhurandhar Bhatawdekar.” Initially, Google’s AI served up pure nonsense. The author of this piece, an avid movie buff, immediately recognized the name as that of a character from the 1983 Bollywood classic “Rang Birangi,” played by the legendary Utpal Dutt. It is understandable that Google might struggle with deep cuts from decades past, but the failure was notable given that a Wikipedia page exists for the movie and the character. The plot thickened when actor Rakesh Bedi, who plays a character in a new film also named “Dhurandhar,” made a social media post in which he referred to himself by that name, referencing a decade-old TV series. The clip went viral, the media assumed that Bedi was the original “Dhurandhar,” and the attention likely prompted Google to re-evaluate its AI’s understanding. Now, a search for “Dhurandhar Bhatawdekar” returns an AI summary that correctly outlines the “Rang Birangi” connection and even mentions the recent social media buzz. However, a more specific search for “Dhurandhar Bhatawdekar Rakesh Bedi” skips any mention of Bedi’s video or the TV series and focuses solely on his role in the new “Dhurandhar” film. This illustrates a crucial point: AI can learn and adapt, but its initial inaccuracies, and its potential to misattribute or misinterpret information, remain a significant concern. That human intervention or widespread public correction is sometimes needed to course-correct these summaries highlights the ongoing need for critical thinking and human oversight.

The study’s findings are particularly troubling given how readily users accept AI-generated information. The sheer volume of search queries means Google, despite its best intentions, could be fueling a misinformation crisis. An Inc.com study from last July noted that only 8% of users bother to double-check AI-provided answers. Even more alarming, research from the University of Pennsylvania, cited by Futurism, found that over 80% of users took ChatGPT’s output at face value, even when it was incorrect. The researchers termed this phenomenon “cognitive surrender,” a stark phrase that underscores the growing, and perhaps misplaced, trust in AI. This tendency to accept AI-generated content without question is precisely why the NYT-Oumi research matters. It relied on SimpleQA, a simple yet effective benchmark designed by OpenAI itself, to evaluate Google’s AI Overviews, first powered by Gemini 2 and later by the upgraded Gemini 3. While Gemini 3 demonstrated improved accuracy, the lingering concern is the AI’s propensity for “hallucinations,” that is, generating plausible but false information. Google, through spokesman Ned Adriance, dismissed the study’s relevance, stating it “doesn’t reflect what people are actually searching on Google.” That assertion feels somewhat ironic given Google’s own past admissions about its AI models producing incorrect information. The tech giant has consistently promoted the accuracy of its AI summaries as a byproduct of its decades-long, high-quality search indexing, claiming this gives Gemini a distinct advantage over other AI models. Yet the evidence suggests a more nuanced reality in which even sophisticated AI can stumble.

For publishers, who have seen their revenues eroded by the rise of AI summaries, this news is a mixed bag: a glimmer of good news amid ongoing struggles. The link between these summaries and the decay of the pay-per-click (PPC) advertising model has pushed publishers to unite and call for a “content independence day.” An earnings call last November from People Inc. (formerly Dotdash Meredith) vividly illustrated the decline, revealing that Google Search, which accounted for 54% of the company’s traffic two years earlier, now contributes only 24%. Neil Vogel, CEO of People Inc. and a vocal critic of AI models training on content without compensation, declared that the company’s decision to block AI crawlers had been “very effective,” forcing dialogue and ultimately leading to a new licensing deal with Microsoft that complements its existing agreement with OpenAI. This assertive stance by larger conglomerates offers a lifeline, demonstrating that taking a firm position can yield results. It signals a potential shift in power dynamics, with publishers beginning to assert their value in the AI ecosystem.
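The People Inc. figures describe a change in the share of traffic coming from Google Search, and it helps to separate the percentage-point drop from the relative decline. The short sketch below does that using only the two shares quoted above; the variable and function names are illustrative, and absolute traffic numbers are not given in the article, so nothing here says how total traffic changed.

```python
# Google Search's share of People Inc. traffic, as quoted from the earnings call.
SHARE_TWO_YEARS_AGO = 54.0  # percent
SHARE_NOW = 24.0            # percent


def share_change(before, after):
    """Return (percentage-point drop, relative drop as a fraction of the old share)."""
    point_drop = before - after
    relative_drop = point_drop / before
    return point_drop, relative_drop


points, relative = share_change(SHARE_TWO_YEARS_AGO, SHARE_NOW)
print(f"Drop: {points:.0f} percentage points ({relative:.1%} of the earlier share)")
```

In other words, the share fell by 30 percentage points, which means Google Search now delivers well under half the proportion of traffic it did two years earlier.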

However, this emerging strategy carries a significant risk, especially for smaller publishers, as one of our columnists at CXO Today has explored in detail. While blocking AI bots can feel empowering, in ad-dependent media economies like India’s it might inadvertently accelerate a publisher’s demise. For Indian digital media, the ability to block AI crawlers isn’t a weapon of offense but a “strategic siege”: a defensive maneuver that offers temporary satisfaction but does little to alter the harsh economic realities. Large media corporations can afford to build digital walls and wait patiently for lucrative licensing deals, but the vast majority of Indian publishers find themselves in a precarious position. If they block the bots, they risk becoming invisible in an increasingly AI-driven search environment. If they allow unrestricted access, they risk becoming obsolete, their content consumed and summarized without fair compensation or credit. Unlike their Western counterparts, Indian publishers often lack the economic leverage to demand a third, more equitable option. Alok Gurtu, a 20-year veteran of the industry and an advisor to startups, concludes that this leaves them between a rock and a hard place. The dilemma highlights a critical disparity in the global AI landscape and the urgent need for solutions that support the diverse ecosystem of content creation.