Imagine the bustling digital town square of X (formerly Twitter) in 2022, a place where opinions fly thick and fast, and sometimes, truths get a little tangled. Our team embarked on a massive detective mission, akin to observing nearly 1.7 million townspeople and sifting through almost 15 million of their conversations, or “tweets.” Our goal was simple yet crucial: to figure out if we could spot those who tend to spread unreliable information just by looking at how they interact on this platform. We weren’t interested in judging individual statements, but rather, the overall “factuality” of the news sources they shared, like discerning whose digital newspaper stack was filled with credible sources versus whose had more sensational, less vetted stories. We gave everyone a “factuality score” based on the news links in their last 500 tweets, then neatly sorted them into three groups: the ‘High Factuality Folks’ (those who mostly share credible stuff), the ‘Low Factuality Folks’ (who lean towards less credible sources), and the ‘Middle Grounders’. To ensure our findings weren’t just a fluke, we even created a thousand imaginary versions of our dataset, shuffling factuality scores like a deck of cards while keeping everything else the same, so we had a solid benchmark to prove our discoveries were truly meaningful.
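To make the scoring and shuffling steps concrete, here is a minimal sketch. It assumes a user's factuality score is the plain average of the ratings of the news domains they linked to, which is our simplification for illustration; the rating table, the domain names, and the function names are all hypothetical.

```python
import random
from statistics import mean
from urllib.parse import urlparse

# Hypothetical source ratings on a 0-1 scale (illustrative values, not study data).
SOURCE_RATINGS = {"reliable.example": 0.9, "tabloid.example": 0.2}

def factuality_score(tweet_urls, ratings, window=500):
    """Average rating of the rated domains linked in the last `window` tweets.

    `tweet_urls` is a list (oldest first) with one list of URLs per tweet.
    Returns None when the user shared no rated source.
    Assumption: a simple mean; the text does not spell out the aggregation.
    """
    rated = []
    for urls in tweet_urls[-window:]:
        for url in urls:
            domain = urlparse(url).netloc.lower()
            if domain.startswith("www."):
                domain = domain[4:]
            if domain in ratings:
                rated.append(ratings[domain])
    return mean(rated) if rated else None

def shuffled_copies(scores, n_copies=1000, seed=0):
    """Yield null datasets: the same scores dealt out to users at random."""
    rng = random.Random(seed)
    users = list(scores)
    values = [scores[u] for u in users]
    for _ in range(n_copies):
        rng.shuffle(values)
        yield dict(zip(users, values))

tweets = [
    ["https://www.reliable.example/story"],
    [],  # a tweet without links
    ["https://tabloid.example/scoop", "https://unrated.example/post"],
]
score = factuality_score(tweets, SOURCE_RATINGS)  # averages 0.9 and 0.2
```

Each shuffled copy keeps every user's followers, tweets, and account age untouched and only reassigns the scores, which is what makes it a fair benchmark.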

With our meticulously prepared dataset, we zoomed in on a few easily observable characteristics, what we called “easy-access social network metrics,” hoping they’d serve as early warning signs for potential misinformation spreaders. Think of it like trying to identify reliable shopkeepers in our digital town: you might look at how many customers they have, how often they’re open, how many other businesses they interact with, and how long they’ve been around. Drawing inspiration from previous studies on human behavior online – how we form social connections, the limited attention we pay to things, and our general savviness (or lack thereof) with digital information – we chose four key indicators: how many followers someone has (their popularity), how often they tweet (their daily activity), how many other accounts they follow (their curiosity, perhaps?), and how long they’ve had their account (their established presence). This careful selection was crucial, as we wanted to make sure our chosen metrics were rooted in established understanding of online interactions.

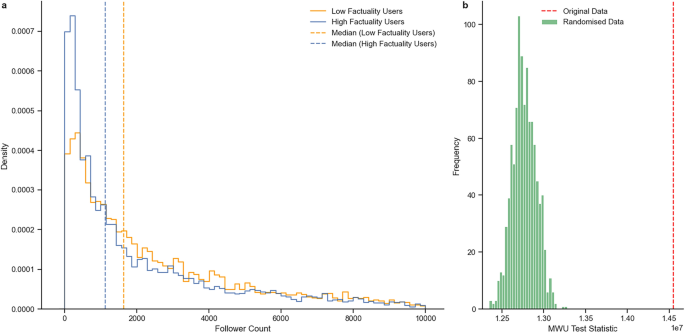

Before diving into the heart of our analysis, we had to make sure we were studying actual people rather than automated bots, official news channels, or celebrities, the digital equivalent of town criers. So, we filtered our dataset like a sieve, keeping only the “regular users”: the everyday folks. This meant excluding verified accounts (the ones with official blue checks), keeping only accounts that posted at most 32 tweets per day (to avoid bots and constantly broadcasting news feeds), capping followers at 10,000 and followed accounts at 10,000 (to exclude mega-influencers), and requiring a balanced follower-to-following ratio (to weed out spam accounts). These thresholds were chosen to focus on the typical user, so that our insights reflect the broader population rather than a few outliers. Within this filtered group, we defined our ‘Low’ and ‘High Factuality Folks’ as the bottom and top 30% of users by factuality score, and we later varied these cutoffs to check whether they changed our results.
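The filtering and grouping steps could be sketched roughly as follows. The `User` record, the function names, and especially `max_ratio` are hypothetical: the text gives the tweet and follower caps but not the ratio threshold, so the value used in the example is made up. Treating the caps as inclusive is also our assumption.

```python
from dataclasses import dataclass

@dataclass
class User:
    verified: bool
    tweets_per_day: float
    followers: int
    following: int
    factuality: float

def is_regular(user, max_ratio, max_tweets_per_day=32,
               max_followers=10_000, max_following=10_000):
    """Return True if the account passes the 'regular user' filters.

    `max_ratio` is a hypothetical bound on followers/following, applied in
    both directions; the actual ratio threshold is not stated in the text.
    """
    if user.verified or user.tweets_per_day > max_tweets_per_day:
        return False
    if user.followers > max_followers or user.following > max_following:
        return False
    if user.followers == 0 or user.following == 0:
        return False  # assumption: an undefined ratio counts as not regular
    ratio = user.followers / user.following
    return 1 / max_ratio <= ratio <= max_ratio

def assign_groups(users, tail=0.30):
    """Pair each user with 'low', 'middle', or 'high' by factuality rank."""
    ranked = sorted(users, key=lambda u: u.factuality)
    k = int(len(ranked) * tail)  # size of each tail group
    labelled = []
    for i, user in enumerate(ranked):
        if i < k:
            label = "low"
        elif i >= len(ranked) - k:
            label = "high"
        else:
            label = "middle"
        labelled.append((user, label))
    return labelled

# Illustrative data only; max_ratio=10 is a made-up value, not the study's.
users = [User(False, 5, 200, 300, score / 10) for score in range(10)]
users.append(User(True, 5, 200, 300, 0.5))    # verified: filtered out
users.append(User(False, 80, 200, 300, 0.5))  # tweets too often: filtered out
regular = [u for u in users if is_regular(u, max_ratio=10)]
groups = assign_groups(regular)
```

Keeping `tail` as a parameter makes it easy to rerun the analysis with other cutoffs, which is the kind of robustness check described above.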

One of our most striking discoveries, and perhaps the most intuitive, was that popularity, measured by the number of followers someone has on X, was linked to sharing less factual information. It’s like finding that the loudest, most attention-grabbing person in the digital town square is often the one spreading rumors rather than verified news. When we compared the ‘Low Factuality Folks’ to the ‘High Factuality Folks,’ we consistently found that those who tended to share less credible content had a significantly larger audience. This wasn’t just a casual observation: the gap in our real-world data was larger than what we saw across our thousand shuffled datasets, so it is very unlikely to be a product of chance. Keep in mind that this holds among regular users with at most 10,000 followers, not among celebrities. Within that range, a larger following is a subtle hint that a closer look at the accuracy of someone’s shared information could be warranted, which fits our initial hunch that social drivers often overshadow a concern for accuracy online.
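The comparison against shuffled datasets is a permutation test, and a minimal version might look like the sketch below. The choice of statistic (difference in median follower counts), the one-sided comparison, and the add-one correction in the p-value are our assumptions for illustration, as are the function names and the toy numbers. Shuffling group labels here stands in for shuffling the factuality scores, since the groups are derived from those scores.

```python
import random
from statistics import median

def median_gap(followers, groups):
    """Median followers in the 'low' group minus median in the 'high' group."""
    low = [f for f, g in zip(followers, groups) if g == "low"]
    high = [f for f, g in zip(followers, groups) if g == "high"]
    return median(low) - median(high)

def permutation_p_value(followers, groups, n_shuffles=1000, seed=0):
    """One-sided test: how often does a shuffled dataset show a gap
    at least as large as the one observed in the real data?"""
    rng = random.Random(seed)
    observed = median_gap(followers, groups)
    shuffled = list(groups)
    hits = 0
    for _ in range(n_shuffles):
        rng.shuffle(shuffled)  # reassign group labels at random
        if median_gap(followers, shuffled) >= observed:
            hits += 1
    # Add-one correction keeps the estimate away from an impossible p = 0.
    return observed, (hits + 1) / (n_shuffles + 1)

# Toy numbers, not study data.
followers = [900, 800, 850, 950, 100, 120, 90, 110]
groups = ["low"] * 4 + ["high"] * 4
gap, p_value = permutation_p_value(followers, groups)
```

A small p-value means that few shuffled datasets reproduce a gap as large as the real one. The same recipe applies to tweet frequency, accounts followed, and account age by swapping in the relevant metric.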

Beyond popularity, we found two other noticeable patterns among our ‘Low Factuality Folks’: they tended to tweet more frequently and followed a greater number of other accounts. Imagine someone constantly shouting in the town square and trying to listen in on every conversation happening – it’s a lot of input and output. Drawing on existing ideas about “information overload” – the idea that when people are swamped with too much information, it becomes harder to discern what’s true – we hypothesized that these two behaviors would also differentiate the less credible from the more credible users. Our analysis supported this: users in the ‘Low Factuality’ group were indeed more prolific tweeters and cast a wider net in terms of who they followed. Again, these weren’t just chance patterns: tested against our shuffled datasets, both differences were statistically significant. They are still associations rather than proof of cause and effect, but they suggest that a constant stream of self-generated content and a broad, perhaps indiscriminate, consumption of information from a vast network could be subtle markers of a potential inclination towards spreading misinformation.

Conversely, our last key finding offered a glimmer of hope for a more seasoned and discerning online presence: the longer someone had been registered on X, the more likely they were to belong to the ‘High Factuality Folks.’ It’s as if the digital townspeople who had been around for a while, who had perhaps learned the ropes and grown wiser with experience, were better at distinguishing fact from fiction. This aligns with the concept of “digital literacy,” where experience and time spent navigating the online world can lead to a more critical approach to information. Our data showed that ‘Low Factuality Folks’ generally had newer accounts compared to their ‘High Factuality’ counterparts. This statistically significant observation suggests that a longer social media presence might indicate a greater digital maturity, fostering a more discerning approach to the information they choose to share, offering a subtle but optimistic indicator for identifying sources of more reliable information.

When we pulled all these threads together, using a more sophisticated statistical technique called multinomial regression, we started seeing a richer, more nuanced picture. This was like observing all the characters in our digital town square at once, seeing how their popularity, chattiness, social circles, and age on the platform all played into their tendency to share factual or less factual news. We compared both the ‘Low Factuality Folks’ and the ‘High Factuality Folks’ against our ‘Middle Ground’ group, to really highlight the distinguishing features. The results confirmed that each of our four metrics had a significant role to play, even when considering the influence of the others. For example, our data showed that having more followers still strongly indicated a higher likelihood of being in the low factuality group and a lower likelihood of being in the high factuality group. This suggests that the attention-grabbing power of popularity, even when balanced against other characteristics, remains a strong predictor of misinformation sharing.
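For readers who like to see the machinery, a multinomial regression with the ‘Middle Ground’ as the reference group can be written as follows. This is the textbook form of the model, not necessarily our exact specification: the symbols are introduced here for illustration, and any transformations of the metrics (such as taking logarithms) are left out.

$$
\log \frac{P(g_i = k)}{P(g_i = \text{middle})} = \beta_{k,0} + \beta_{k,1}\,\text{followers}_i + \beta_{k,2}\,\text{tweets}_i + \beta_{k,3}\,\text{following}_i + \beta_{k,4}\,\text{age}_i, \qquad k \in \{\text{low}, \text{high}\}
$$

Here $g_i$ is the group of user $i$, and each group $k$ gets its own set of coefficients. In this notation, the follower result described above corresponds to $\beta_{\text{low},1} > 0$ and $\beta_{\text{high},1} < 0$: more followers raise the odds of being in the low factuality group and lower the odds of being in the high factuality group, each relative to the middle.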

Interestingly, the number of accounts a user followed revealed a more complex pattern. Following more accounts was associated with being less likely to be in either the ‘Low Factuality’ or the ‘High Factuality’ group (when compared to the ‘Middle Ground’), and the effect was stronger for the ‘High Factuality’ group. In other words, users who follow many accounts tend to land in the middle rather than at either extreme of factuality. Because the pull away from the ‘High Factuality’ group is the stronger of the two, this still fits our earlier comparison, in which ‘Low Factuality Folks’ followed more accounts than their ‘High Factuality’ counterparts. Even so, this particular metric seemed less robust as a standalone identifier. Our findings on daily tweet frequency, however, were consistent with our earlier individual observations: the more someone tweeted, the more likely they were to be in the ‘Low Factuality’ group and the less likely to be in the ‘High Factuality’ group, reinforcing the idea that constant, rapid output might be at odds with careful vetting of information.

Finally, the age of an account proved to be a consistently strong indicator. Older accounts were significantly more likely to be found among the ‘High Factuality Folks’ and less likely among the ‘Low Factuality Folks.’ This reinforces the idea of digital wisdom accruing over time – perhaps longer-term users have developed a healthier skepticism, improved their digital literacy, or simply found their preferred trusted sources. What’s more, we rigorously tested our findings by tweaking our data filters and factuality thresholds in various ways, confirming that these relationships generally held true across different scenarios. While some metrics, like follower count, showed a bit more sensitivity to how we set up our analysis, the core message remained: tweet frequency and account age were particularly reliable signals, providing robust clues about a user’s factuality tendencies.

Delving even deeper, we explored how these various social habits interacted with each other – like understanding how a person’s popularity might influence their chattiness, or how their age on the platform affects their social circle. We found some fascinating interplay. For instance, the impact of how much someone tweets on their factuality strongly depended on how many accounts they followed. Among people who followed many accounts, tweeting more actually increased their likelihood of being in the high factuality group – perhaps they were engaging with a diverse, well-curated information diet? However, for those who followed fewer accounts, frequent tweeting showed a stronger link to low factuality. Another interesting interaction involved account age: while older accounts generally meant higher factuality, this trend was reversed for those who followed many accounts. In this specific scenario, the older the account was among those following many people, the less likely they were to be highly factual. These intricate interactions paint a dynamic picture, reminding us that there’s no single, simple answer to spotting misinformation spreaders, but rather a complex web of social behaviors that, when viewed together, can offer valuable insights into the credibility of information shared online.
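One common way to capture this kind of interplay is to add a product term to the regression. The sketch below uses that standard approach with symbols introduced here for illustration; it is not necessarily our exact specification. Writing $\eta_{k,i}$ for the log-odds that user $i$ is in group $k$ rather than the middle group:

$$
\eta_{k,i} = \dots + \beta_{k,2}\,\text{tweets}_i + \beta_{k,3}\,\text{following}_i + \gamma_k\,(\text{tweets}_i \times \text{following}_i)
$$

$$
\frac{\partial \eta_{k,i}}{\partial\,\text{tweets}_i} = \beta_{k,2} + \gamma_k\,\text{following}_i
$$

The second line shows why the effect of tweeting is no longer a single number: it shifts with the number of accounts followed. For the high factuality group, a negative $\beta_{\text{high},2}$ combined with a positive $\gamma_{\text{high}}$ would reproduce the pattern described above, where frequent tweeting lowers the odds of high factuality for users who follow few accounts but raises them once the number of followed accounts exceeds $-\beta_{\text{high},2} / \gamma_{\text{high}}$.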