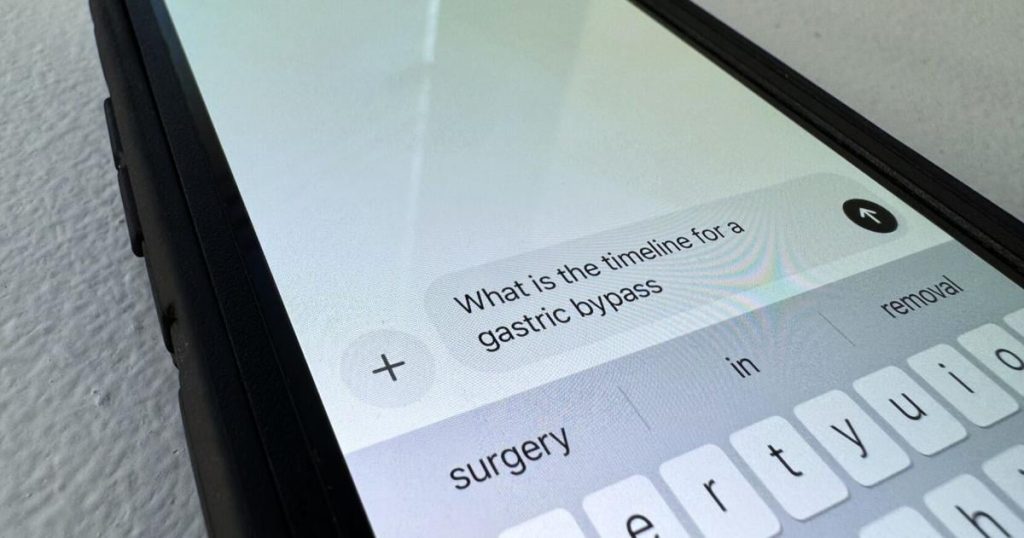

It’s fascinating how quickly artificial intelligence (AI) has gone from the realm of science fiction to a tangible part of our everyday lives. From optimizing our music playlists to suggesting what to buy online, AI is already deeply integrated into many facets of modern life. So, it’s perhaps not surprising that its potential for healthcare has sparked considerable excitement, even reaching a point where people are turning to AI for medical advice. We’ve all seen the headlines, heard the whispers, and maybe even experimented with it ourselves: asking a smart assistant about a strange cough or typing symptoms into a large language model. On the surface, the allure is strong. Imagine instant access to information, no waiting rooms, no appointment scheduling – just a quick query and a potentially insightful response. The idea of democratizing health knowledge, making it readily available to anyone with an internet connection, feels undeniably progressive and empowering. There’s a certain romanticism to the notion that technology can solve some of our most deeply ingrained problems, offering a panacea for the complexities of the human body and the often-overwhelming healthcare system. This promise of convenience and accessibility is indeed a powerful draw, particularly in a world where access to medical professionals can be uneven and their time often stretched thin.

However, as with any groundbreaking technology, the initial enthusiasm often needs to be tempered with a healthy dose of caution and critical evaluation. While AI’s potential in healthcare is undeniably vast – assisting with research, analyzing complex data, or even streamlining administrative tasks – relying on it for direct medical advice, especially in a diagnostic capacity, is a far more contentious issue. It’s in this area that a growing chorus of medical professionals, including the physician highlighted in the Tribune Courier article, is sounding alarm bells. They’re not dismissing AI outright, but rather urging a clear distinction between AI as a tool to support human doctors and AI as a replacement for them. The core of their concern isn’t just about the technology’s current limitations, but about the fundamental nature of medical practice itself. Medicine is not merely a collection of data points; it’s an intricate blend of science, experience, intuition, and, perhaps most importantly, human connection. This nuanced reality is where AI, despite its impressive computational power, currently falls short and where the real dangers of its misuse begin to emerge.

One of the most pressing concerns raised by doctors is the significant risk of misinformation. While AI models can churn out vast amounts of text that sound authoritative, they don’t possess genuine understanding or clinical judgment. Their responses are based on patterns learned from the data they were trained on, which can be flawed, incomplete, or even incorporate societal biases present in the original material. Imagine a patient describing symptoms to an AI. The AI might pull information from unreliable sources, misinterpret the context of the symptoms, or even inadvertently combine disparate pieces of information to form a misleading conclusion. The internet, while a treasure trove of information, is also a swamp of misinformation, and AI models, in their current state, are not infallible navigators of this landscape. They cannot reliably distinguish credible sources from unreliable ones, critically evaluate conflicting information, or identify subtle linguistic cues that a human doctor would instantly recognize as significant. The consequences of such misinformation, especially in a medical context, can be severe. A patient might be led to believe their condition is benign when it’s serious, or vice versa, potentially delaying crucial treatment or causing unnecessary anxiety. Searching for health information online without assessing it critically can already lead to self-misdiagnosis and inappropriate self-treatment; adding an authoritative-sounding but ultimately flawed AI response on top of that only exacerbates the problem.

Beyond outright misinformation, the risk of missed diagnoses is perhaps even more alarming. A human doctor brings to a diagnostic challenge years of training, countless hours of clinical experience, and a finely tuned ability to ask probing questions. They can observe subtle non-verbal cues – a patient’s demeanor, their gait, the way they describe their pain – that are impossible for an AI to fully process. They can synthesize seemingly unrelated pieces of information into a coherent diagnostic picture. More importantly, doctors understand the inherent variability of human biology and the often-atypical presentations of diseases. An AI, however, is limited by its training data. If a particular rare disease or an unusual symptom presentation wasn’t heavily represented in its dataset, it might completely miss it. Furthermore, a human doctor engages in a dialogue, adapting their questions based on the patient’s answers and exploring possibilities that an initial algorithm might not present. An AI, by contrast, is more likely to follow a predetermined path, missing opportunities to delve deeper into nuances. This isn’t just about finding the right answer; it’s about asking the right questions and understanding the human story behind the symptoms. When a diagnosis is missed, the patient loses precious time, their condition can worsen, and trust in both the healthcare system and AI as a supposed helper is eroded. The physician in the Tribune Courier article highlights this, emphasizing that true diagnosis involves more than pattern matching; it involves critical thinking, differential diagnosis, and a deep understanding of human physiology in all its complex manifestations.

So, where does this leave us? The message from medical professionals isn’t to fear AI or to reject its potential. Instead, it’s a call for discernment, responsible integration, and a clear understanding of its limitations, especially concerning direct patient care. We need to view AI not as a surrogate doctor, but as a powerful tool that can augment the capabilities of human doctors. Imagine AI assisting in crunching vast amounts of research data to identify potential drug targets, or sifting through patient records to flag individuals at high risk for certain conditions. Picture AI simplifying paperwork, freeing up doctors to spend more quality time with their patients. These are areas where AI truly shines and can significantly enhance healthcare delivery without jeopardizing patient safety. The human element in medicine – empathy, critical thinking, personalized assessment, and the ability to build trust – remains irreplaceable. A doctor isn’t just a dispenser of information; they are a confidant, an advocate, and a guide through moments of vulnerability and uncertainty. This is a role that, for the foreseeable future, no algorithm can replicate.

Ultimately, the enthusiasm for AI in healthcare needs to be harnessed responsibly, focusing its power on what it does best while safeguarding against its inherent weaknesses. The warnings from physicians, like the one quoted in the Tribune Courier, are not intended to stifle innovation but to ensure that our pursuit of technological advancement doesn’t inadvertently harm the very people we aim to help. We, as individuals, also have a responsibility: to approach AI-generated medical advice with a skeptical eye, to remember that it is a tool, not a doctor, and to always prioritize consulting with qualified healthcare professionals for our health concerns. The future of healthcare will undoubtedly involve AI, but it will be a future where AI works intelligently alongside humans, rather than attempting to replace the irreplaceable and deeply human art of healing.