It’s a brave new world, and artificial intelligence (AI) is at the forefront of this monumental shift. From helping us choose what to watch next to powering self-driving cars, AI has become woven into many aspects of our daily lives. Its potential feels limitless, promising to revolutionize industries and solve complex problems with unprecedented efficiency. Understandably, then, the idea of AI stepping into healthcare, a field constantly striving for innovation and improved patient outcomes, is tantalizing. Imagine a world where AI could instantly diagnose illnesses, personalize treatment plans, and even predict future health risks with near-perfect accuracy. These visions, while undeniably exciting, have sparked important conversations and, crucially, warnings from seasoned medical professionals.

Doctors, who dedicate their lives to understanding the intricate complexities of the human body and the nuances of patient care, harbor significant concerns about the public’s growing tendency to turn to AI for medical advice. They see AI as a powerful tool, certainly, one that can augment human capabilities and streamline certain processes within healthcare. However, they are quick to draw a crucial distinction: AI is not a substitute for a human doctor. When a patient relies solely on an AI chatbot or a health app for a diagnosis, they are bypassing years of medical training, empathetic understanding, and the critical ability to interpret subtle cues that only a human professional can discern. It’s like asking a beautifully programmed robot to paint a masterpiece – the robot can mix colors and apply strokes, but it lacks the soul, the experience, and the intuitive understanding of emotion that defines true art. In healthcare, that human element is not just about bedside manner; it’s about life and death.

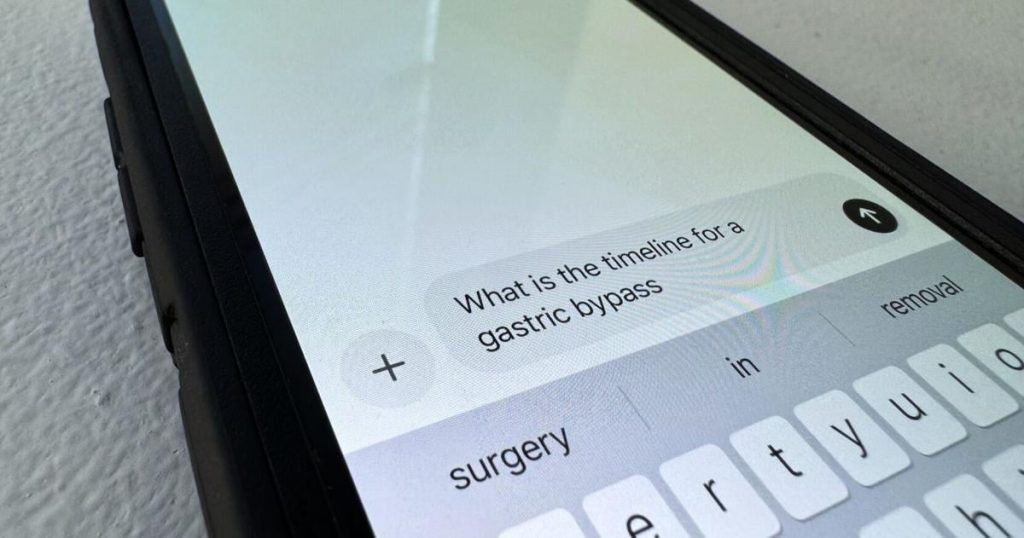

The core of these warnings boils down to two critical issues: misinformation and missed diagnoses. AI systems, no matter how sophisticated, are built upon the data they are fed. If that data is flawed, incomplete, or biased, the AI’s output will reflect those imperfections. This can lead to the spread of misinformation, where an AI confidently recommends remedies or offers diagnoses that are not only incorrect but potentially harmful. Imagine a scenario where an AI, trained on an incomplete dataset, suggests a benign explanation for symptoms that, in reality, point to a serious and rapidly progressing illness. The patient, trusting the AI, delays seeking professional help, with tragic consequences. This isn’t just a theoretical concern; the internet is already rife with health misinformation, and introducing AI into this landscape without careful oversight could make the problem considerably worse.

Furthermore, the risk of missed diagnoses is profound. A human doctor’s diagnostic process is a multifaceted endeavor. It involves not just analyzing symptoms but also considering a patient’s entire medical history, their lifestyle, their emotional state, and even subtle non-verbal cues. A doctor will ask follow-up questions, perform physical examinations, and order relevant tests, all while applying their vast clinical experience and critical thinking skills. AI, on the other hand, operates within a more rigid framework. It processes information impersonally and, while it can recognize patterns, it often lacks the ability to infer subtle connections or identify atypical presentations that a seasoned physician would immediately flag. It’s like trying to navigate a dense jungle with only a map, without the guide who knows the hidden paths, the dangerous flora, and the subtle signs of impending weather. The map is useful, but it’s no substitute for an experienced explorer.

Dr. John Smith, a local practitioner in Paducah (the name is hypothetical, though the sentiments are representative of those expressed by many physicians), articulates these concerns with a sense of urgency. He emphasizes that while AI can be a valuable tool for physicians, perhaps assisting with analyzing complex imaging or sifting through vast amounts of medical literature, it should never replace the doctor-patient relationship. “When a patient comes to me with symptoms, it’s not just about what they tell me,” Dr. Smith explains. “It’s about how they say it, their facial expressions, their overall demeanor. It’s about asking the right questions, not just the obvious ones, and then connecting those dots with everything else I know about their health, their family history, and even their daily life. AI can’t do that. It can’t feel empathy, it can’t build trust, and it can’t take responsibility for a human life in the way a doctor does.” He frequently encounters patients who arrive at his office already convinced they have a particular illness, having “diagnosed” themselves using an online AI tool. This often leads to unnecessary anxiety, misdirected self-treatment, or a delay in receiving the correct diagnosis, ultimately complicating their care.

The implications of relying on AI for medical advice stretch beyond individual patient outcomes. Such reliance threatens to erode the fundamental principles of healthcare: trust, human connection, and personalized care. If patients increasingly turn to impersonal algorithms for their health concerns, the critical bond between doctor and patient, built on empathy and informed decision-making, could weaken. This could lead to a less holistic and more fragmented approach to healthcare, where the complexities of human health are reduced to mere data points. While AI undoubtedly holds immense promise for revolutionizing specific aspects of medicine, its role, particularly in direct patient diagnosis and advice, must be carefully delineated and understood by the public. Doctors aren’t dismissing AI; they’re advocating for its responsible and ethical integration, ensuring that it serves as a powerful assistant to human expertise rather than an unqualified replacement for the irreplaceable human touch in healing.