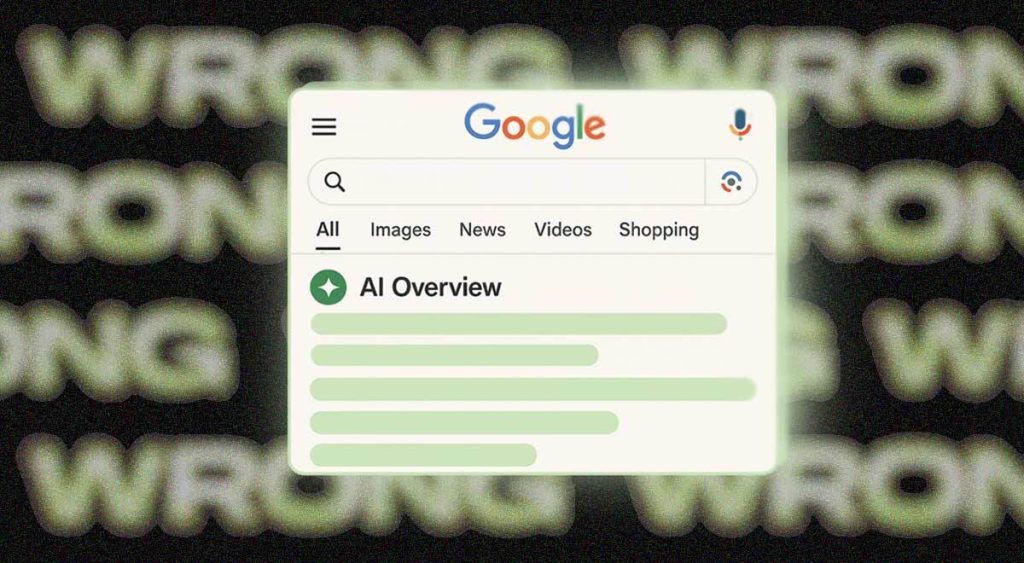

Google’s AI Overviews feature is a double-edged sword: it promises instant answers while raising alarms about widespread misinformation. On the surface, the numbers from a recent study by the AI startup Oumi seem impressive: Google’s AI-generated summaries, which appear prominently above traditional search results, have an accuracy rate of roughly 91%. That’s like getting nine out of ten questions right on a test, a score most of us would be proud of. But consider the scale of Google’s operations. The tech giant processes about 5 trillion search queries every year. If the remaining 9% error rate applied to every one of those queries, that would come to roughly 450 billion erroneous or questionable answers a year: tens of millions every hour, or hundreds of thousands every minute. This isn’t a minor glitch; it’s a potential flood of inaccuracies that could change how we perceive and trust the information we encounter daily. The company that once curated information for us is now becoming a publisher, and with that comes responsibility for the reliability of what it presents.
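The scale argument above is simple arithmetic, and it is worth seeing how sensitive it is to one unstated assumption: that every search actually shows an AI Overview. The sketch below reproduces the calculation from the article's two figures (5 trillion searches a year, 91% accuracy). The `overview_share` parameter is hypothetical and not from the Oumi study; it is included only to show how the totals shrink if Overviews appear on a fraction of searches.

```python
# Figures quoted in the article.
SEARCHES_PER_YEAR = 5_000_000_000_000  # about 5 trillion queries per year
ACCURACY = 0.91                        # roughly 91% accurate
HOURS_PER_YEAR = 365 * 24              # ignoring leap years


def estimated_errors(searches_per_year, accuracy, overview_share=1.0):
    """Return estimated wrong answers per (year, hour, minute).

    overview_share is a hypothetical knob: the fraction of searches that
    show an AI Overview at all. The article implicitly assumes 1.0.
    """
    per_year = searches_per_year * overview_share * (1 - accuracy)
    per_hour = per_year / HOURS_PER_YEAR
    per_minute = per_hour / 60
    return per_year, per_hour, per_minute


if __name__ == "__main__":
    for share in (1.0, 0.5):
        year, hour, minute = estimated_errors(SEARCHES_PER_YEAR, ACCURACY, share)
        print(f"share={share:.0%}: {year:,.0f}/year, {hour:,.0f}/hour, {minute:,.0f}/minute")
```

With every search counted, this works out to about 450 billion errors a year, or roughly 51 million an hour and 856,000 a minute, which matches the article's "tens of millions" and "hundreds of thousands." Halving the assumed share halves each figure, so the order of magnitude holds even under a much more conservative assumption.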

Oumi’s analysis offers a deeper dive into this phenomenon. They rigorously tested Google’s AI Overviews using an industry-standard benchmark called SimpleQA, first in October when the Gemini 2 model was at the helm, and again in February after the system was upgraded to Gemini 3. The initial tests with Gemini 2 revealed an accuracy of 85%, which then improved to 91% with the more advanced Gemini 3, widely regarded as one of the least “hallucinatory” AI models available. While this improvement is a positive sign, it’s crucial to remember that even the most cutting-edge AI still has its limitations. The study focused on a substantial set of 4,326 complex Google searches, giving us a robust snapshot of the system’s performance. However, what’s equally concerning, according to Oumi, is that over half of these “accurate” responses were actually “ungrounded.” Imagine being told something is true, but then when you try to trace it back to its source, the links provided don’t fully support the information. This “ungroundedness” makes it incredibly difficult for users to verify the AI’s claims, eroding trust and making critical evaluation nearly impossible. It essentially gives the AI the final say, without offering a clear path for independent verification.

Compounding the problem, Google’s AI system can generate a completely new response to the same query, even when the searches are made seconds apart. You could search for something, get an answer, then refresh the page and receive a different (and potentially contradictory) one. This inconsistency creates a fundamental problem: how can users trust information that shifts from one moment to the next? Oumi CEO Manos Koukoumidis sums up the dilemma: “Even when the answer is true, how can you know it is true? How can you check?” The question strikes at the heart of the matter. If the tools we rely on for information become unreliable, the foundations of informed decision-making begin to crumble. Fluctuating answers not only hinder verification but also undermine the core requirement of a reliable information source: that it says the same thing each time you consult it.

The potential for a severe misinformation crisis looms large. Numerous studies have revealed a troubling human tendency: people are often quick to trust what an AI tells them, questioning it far less than they would a human source. This bias towards AI-generated content is particularly alarming given the identified inaccuracies. Google has added a small-print disclaimer to its AI Overviews, cautioning users that “AI can make mistakes, so double-check responses,” but the effectiveness of such disclaimers appears limited. Reports from last year indicated that a mere 8% of users actually took the time to double-check an AI’s answer. More recently, another study found that 80% of people still followed an AI’s advice, even when it was demonstrably wrong. These statistics paint a bleak picture of user behavior and point to a widespread misconception that AI chatbots, whether standalone or built into search engines, are essentially infallible.

This isn’t about placing blame solely on Google or the AI; it’s about understanding the complex interplay between advanced technology and human psychology. We’ve become accustomed to the convenience of instant answers, and the perceived authority of artificial intelligence often lulls us into a false sense of security. The sad reality, as these studies painfully illustrate, is that AI chatbots are still deeply flawed. They are learning machines, and like any learner, they make mistakes. Their “intelligence” is based on patterns and probabilities, not genuine understanding or critical thinking. Therefore, the information they present, while often appearing authoritative and confidently delivered, should always be approached with a healthy dose of skepticism. The onus, then, falls on us, the users, to cultivate a more critical approach to the information we consume, particularly when it originates from AI.

In conclusion, while the advancement of AI in search engines like Google is a marvel of modern technology, it comes with a hefty responsibility and a significant caveat. The high accuracy rate of AI Overviews masks a colossal volume of errors when scaled across trillions of searches. The “ungrounded” nature of many accurate responses, coupled with the AI’s tendency to produce inconsistent answers, makes verification challenging and erodes trust. Most disturbingly, human users place a dangerous level of implicit trust in AI, often neglecting to verify information even when warned. This combination of technological limitations and human behavior creates fertile ground for a potentially unprecedented misinformation crisis. Moving forward, both AI developers and users need a deeper understanding of AI’s capabilities and limitations. For users, that means adopting a more skeptical and critical approach to AI-generated content and verifying information against multiple reliable sources. For developers, it means a continued commitment to improving accuracy, transparency, and users’ ability to trace information back to its origins. Only then can we harness the power of AI without falling prey to its imperfections.