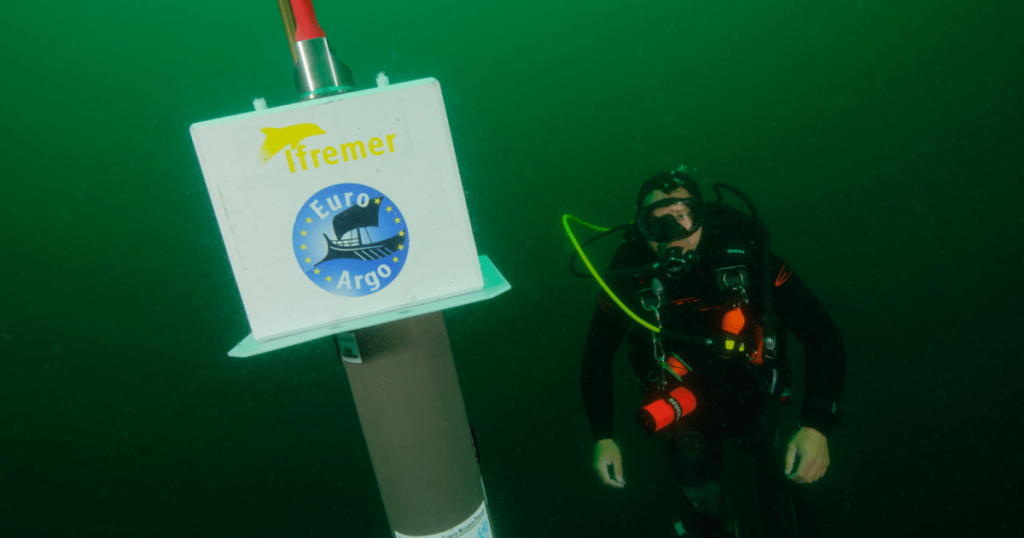

The scientific community is grappling with a curious and concerning development: a recent paper that attempts to dismantle widely accepted climate science, particularly the understanding of ocean warming. This isn’t just an academic debate; it’s a battle against what some experts are calling “bullshit,” complicated by artificial intelligence amplifying its spread. At the heart of the issue is the paper’s claim to expose “fatal flaws” in how we measure ocean heat content (OHC), a key indicator of global warming. The authors argue that the renowned Argo program – a sophisticated network of nearly 4,000 robotic ocean floats that have been collecting oceanic data for years – is insufficient, and that our conclusions about ocean warming are therefore baseless. One of the lead authors went so far as to declare on social media that the core findings of the Intergovernmental Panel on Climate Change (IPCC) have been “torn apart,” asserting that the oceans are not warming, let alone boiling. This audacious claim, if true, would be a scientific bombshell that completely alters our understanding of climate change.

However, the scientific community’s response has been swift and overwhelmingly dismissive. Henri Drake, a professor of Earth System Science at the University of California, Irvine, explains succinctly that the paper “gets everything completely backwards.” Drake clarifies that the number of floats the Argo program deployed was a conscious, well-researched decision, designed to measure ocean warming within pre-determined uncertainty ranges. In other words, the very “insufficiency” the paper criticizes was an intentional design choice, weighed for cost-effectiveness and scientific rigor. Kevin Trenberth, a highly respected climate scientist who has contributed to multiple IPCC assessments, was even more direct, calling the paper “absolute nonsense” and refusing to waste his time on it. Yet despite this scientific consensus, the paper has gained traction on social media, with one post by Jonathan Cohler, the paper’s lead author, garnering nearly half a million impressions. This phenomenon highlights a key challenge in today’s information landscape: the “Bullshit Asymmetry Principle,” also known as Brandolini’s Law. This principle states that the effort required to refute misleading information is significantly greater than the effort needed to produce it, and as we’ll see, AI is making this asymmetry even more pronounced.

The credibility of this controversial paper is further undermined when we examine the backgrounds of its authors. The lead author, Jonathan Cohler, is prominently identified with MIT, a revered institution. On closer inspection, however, Cohler’s affiliation with MIT was limited to being a clarinet instructor, and even that employment had ended by the time the paper was published. Cohler is an accomplished clarinetist with a Harvard physics degree, but labeling him a “leading scientist” in climate science on the basis of this connection stretches the term to its breaking point. Other co-authors also raise red flags. David Legates, a former professor of geography at the University of Delaware, has a long history of questioning mainstream climate science and was even removed from his state climatologist position because of his public statements. Kesten Green, a “senior marketing scientist” at the Ehrenberg-Bass Institute, and Ole Humlum, a professor emeritus, have also consistently expressed skepticism about anthropogenic global warming, attributing warming primarily to natural causes.

Perhaps most concerning is the involvement of Willie Soon, an astrophysicist and long-standing climate change denier. Soon’s credibility was significantly damaged in 2015 when it was revealed that he had received over a million dollars from the fossil fuel industry without disclosing this funding in his published research. Interestingly, a high school student, Franklin Soon (likely related to Willie), is also listed as an author, further raising questions about the paper’s scientific rigor and the authorship criteria. The authors’ acknowledgements also reveal a significant reliance on AI tools – Grok, Claude, Gemini, and ChatGPT – for substantial contributions to the paper, including “drafting, editing, conceptual development, research, logical structuring, literature synthesis, and iterative refinement.” While they credit these AI tools, they also criticize the “prejudice and discrimination” against listing nonhuman entities as authors, a rather telling statement given that Cohler, Legates, and both Soons previously listed “Grok 3” as a lead author on a paper questioning anthropogenic global warming.

This paper, therefore, is not an isolated incident but a symptom of a larger, evolving problem. The increasing use of AI chatbots and large language models in scientific writing, while potentially offering some benefits, also introduces significant risks of errors, “hallucinations” (AI-generated falsehoods), and a blurring of lines between human and AI-generated content. Because both can appear equally legitimate on the surface, it becomes very difficult to distinguish carefully edited, AI-assisted papers from those that are largely AI-generated. One might be tempted to dismiss this particular paper as a fringe anomaly and hope it fades into obscurity, but doing so would be shortsighted.

This type of content acts as kindling for climate denial, providing ammunition for those who are “just asking questions” or “doing their own research” without a foundation in scientific evidence. Climate misinformation is not new, but AI tools make it significantly easier to produce and more sophisticated. The sheer volume of AI-generated “bullshit” threatens to overwhelm the capacity of legitimate scientists to refute it effectively, deepening the asymmetry that Brandolini’s Law describes. Therefore, despite the effort that debunking consumes, it becomes crucial to highlight and debunk such papers, not only to correct the record but also to expose the methods and motivations behind the spread of climate misinformation in the age of artificial intelligence. Failing to do so risks further eroding public trust in science and hindering urgent action on climate change.