In a world where news and information are constantly swirling around us, it’s becoming increasingly difficult to tell what’s real from what’s not. Sien Van den broeke, a campaigner with Greenpeace Canada, experienced this firsthand when a seemingly groundbreaking article landed in her inbox. It described a scientific “breakthrough” from Canadian marine scientists that promised to make the contentious practice of deep-sea mining environmentally safe. That would have been a game-changer: deep-sea mining, the controversial process of vacuuming up metallic nodules from the ocean floor, is notorious for creating massive sediment plumes that can smother marine life and release toxic metals. The International Seabed Authority, the UN-affiliated regulator, has yet to approve commercial mining in international waters, and a growing number of countries have called for a moratorium because of these very concerns. The promise of a solution, however, raised a red flag for Van den broeke. She knew the industry was eager to sway public opinion, and a “cure” for its biggest ecological headache could do just that, potentially opening the floodgates for this destructive practice. “The plume discussion is a very hot topic right now in the deep-sea mining debate,” she noted, highlighting the sensitivity surrounding the issue.

But a quick online search revealed a shocking truth: the entire article was a fabrication. The research, the academic journal it supposedly appeared in, even the experts quoted were all invented. “Dr. Alistair Chen” from Natural Resources Canada and “Chief Emily Two Rivers,” an Indigenous leader, who lent their voices to the story with eloquent, carefully worded quotes, simply didn’t exist. This wasn’t a simple mistake; the piece was fabricated from top to bottom. Van den broeke was perplexed: “How could a journal have published a complete article around an academic study that does not seem to exist? I wonder how this article could have ever passed an editorial review prior to publishing?” The Canadian Mining Journal, to its credit, retracted the piece two days after its publication, admitting it “contained quotes attributed to individuals who do not exist” and was “not based on verified or real sources.” Publisher Robert Seagraves acknowledged it as “erroneous reporting,” while Joseph Quesnel, the editor, simply pointed to the retraction. A similar version of the story, published by an Australian outlet called Discovery Alert, which claimed to be “the world’s fastest AI engine for mining news,” was also taken down.

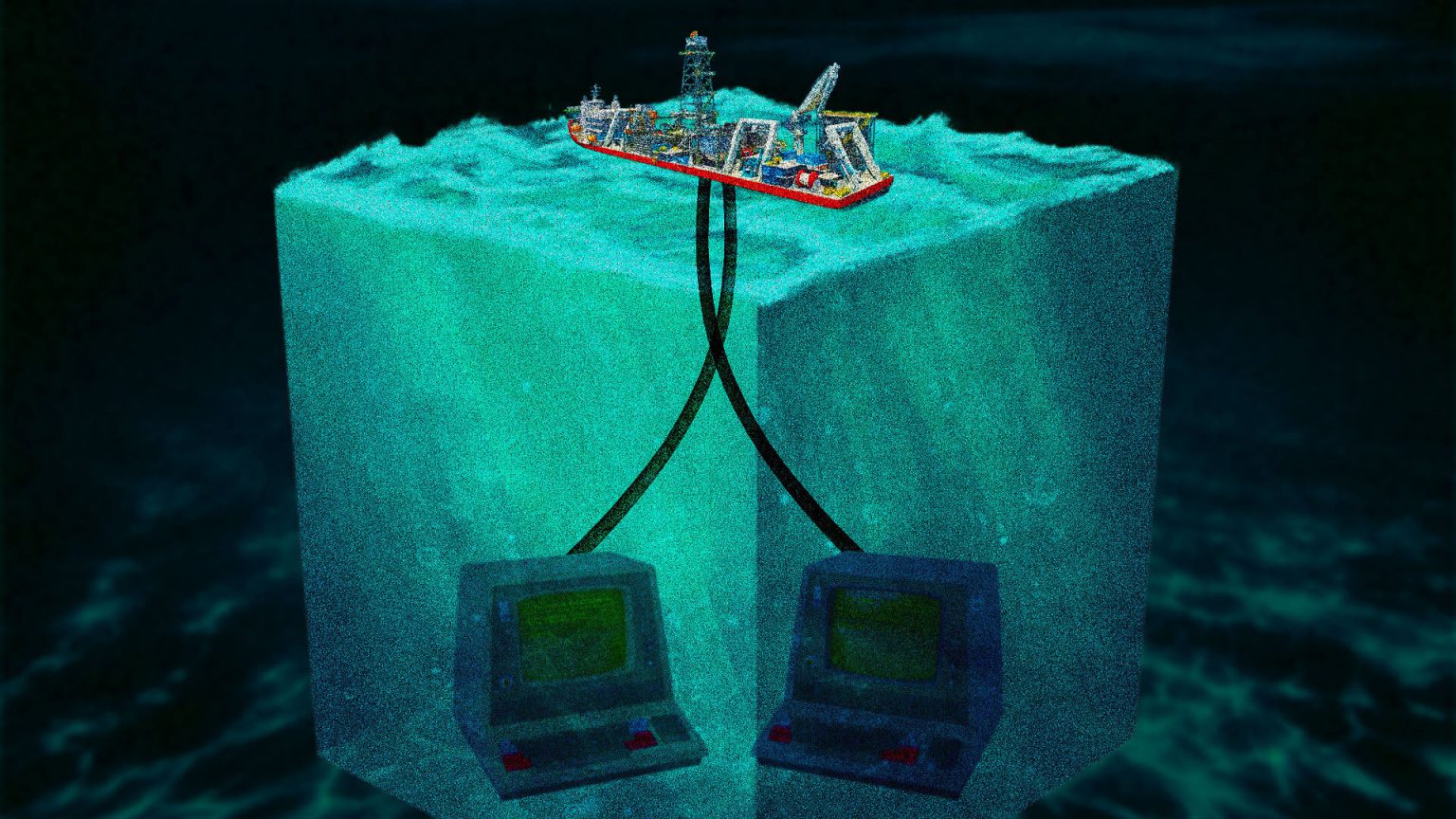

This incident, while quickly rectified, sent a chilling message: the fight for deep-sea mining is escalating, and the industry is willing to venture into new, deceptive territories. Experts warn that this marks a concerning trend of online disinformation, a deliberate attempt to manipulate public discourse. Van den broeke lamented, “It is definitely not the first fake research we’ve seen regarding the topic.” The deep sea remains largely unexplored, a vast mystery, making it easy for industry proponents to “capitalize on that lack of knowledge” and push their narratives. This isn’t just about a single fabricated article; it’s part of a broader pattern. She pointed to other instances, like a seemingly legitimate academic paper published in Environmental Science Advances that cited a non-existent study to downplay the environmental impact of deep-sea mining by comparing it to terrestrial mining across the entire lifecycle. Then there was Iceberg Research’s audit of The Metals Company’s pre-feasibility study for polymetallic nodule mining. The auditors found that crucial sections were written by the company’s own employees or small consultants, and the company resorted to “aggressive assumptions to manufacture” the claim of profitability, even when their own numbers suggested otherwise.

The disinformation goes deeper still. Van den broeke also recalled the fleeting existence of the “Deep Blue League,” a seemingly independent digital publication that popped up after US President Donald Trump issued an executive order to unilaterally push forward with deep-sea mining licenses. This website, active for only a month or two, churned out article after article praising the industry, particularly The Metals Company. The supposed author of several of these pieces, a “Keriann Lee,” proved elusive, with no verifiable online presence matching her photo on the website. Attempts by Van den broeke’s colleague to contact her while the site was still live were met with silence. These tactics, whether a fake website or a fabricated article in a seemingly legitimate publication, are, according to Fenwick McKelvey, a communications professor at Concordia University specializing in AI, an evolution of a “longstanding problem.” Resource companies have a history of secretly funding and publishing content that subtly or overtly favors their interests, a practice that is now being amplified and distorted by the rise of artificial intelligence.

McKelvey highlighted that the problem stems from a “broken” academic publishing system, where researchers are under immense pressure to publish frequently, and journals, unfortunately, often lack the rigorous systems needed to detect fabricated citations. He notes that the increasing use of large language models (LLMs) by academics for literature reviews and manuscript preparation further exacerbates this issue, leading to a “proliferation of fake citations.” Moreover, the online news environment itself has become a victim of what McKelvey calls “AI slop-ification.” We’re seeing an influx of “zombie” publications—outlets bought by larger firms and then filled with AI-generated content—alongside a daily deluge of AI-generated press releases landing in journalists’ inboxes, making it harder than ever to distinguish between real news and fabricated information. The relentless push by social media and search engine algorithms for content volume and speed to generate revenue means that verified, human-produced journalism struggles to compete with the sheer output of AI-driven content mills.

Ultimately, McKelvey believes the solution isn’t simply telling consumers to be more “internet-savvy” or to pay for quality journalism. While publishers undeniably have a responsibility to fact-check, he argues that a more fundamental shift is needed: increased public investment in academics and journalists. This would empower them with the resources and time necessary to produce thorough, high-quality work, acting as a bulwark against the tide of AI-generated disinformation. While McKelvey didn’t comment on the specific intentions behind the Canadian Mining Journal’s publication of the fake article, he emphasized that this incident serves as a stark reminder of the profound challenges AI presents to the media landscape, and even more critically, to the general public who consume this information. The line between truth and fiction is blurring, and navigating this new reality requires vigilance, critical thinking, and a renewed commitment to supporting the very institutions designed to inform us reliably.