
Paragraph 1: The AI’s Glitchy Debut and Its Unintended Consequences

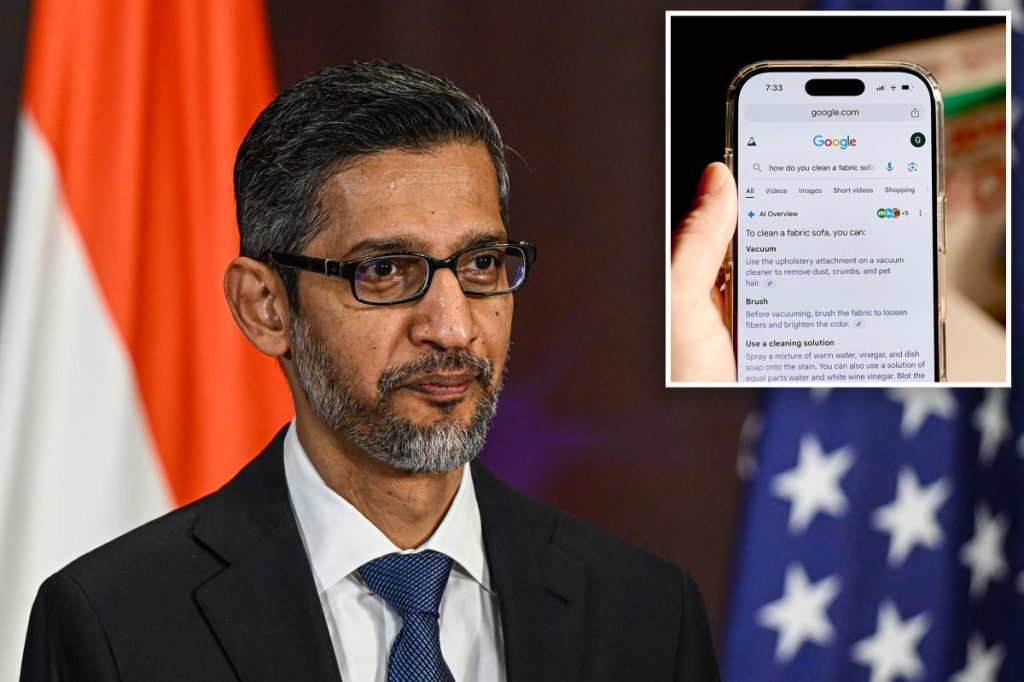

Imagine a world where your most trusted source of information, the digital librarian you’ve turned to for decades, suddenly starts making careless mistakes. This isn’t a hypothetical scenario; it’s the unsettling reality brought to light by a recent analysis of Google’s AI-generated search results, specifically its “AI Overviews.” For years, we’ve relied on Google to quickly find answers, from mundane curiosities to critical research. It’s been the unspoken pact: you ask, Google delivers. But now, with the integration of advanced artificial intelligence models like Gemini 2 and Gemini 3, that pact seems to be fraying at the edges. A startup named Oumi decided to lift the hood, meticulously examining thousands of these AI Overviews. What they found was alarming: while the AI models were accurate a substantial percentage of the time – 85% for Gemini 2 and 91% for the more advanced Gemini 3 – the sheer scale of Google’s operations turns these seemingly small error rates into a tsunami of misinformation. Picture this: Google is projected to handle over 5 trillion searches in 2026 alone. When you crunch those numbers, even a 9% error rate translates to hundreds of thousands of incorrect answers being churned out every single minute. It’s like having a highly efficient, super-smart assistant who, despite their brilliance, occasionally hallucinates wildly, and worse, often presents these fabrications as undeniable fact, leaving the user completely unaware they’re being misled. This isn’t just about a few quirky mistakes; it’s about a fundamental shift in how information is presented, and the potential for a pervasive, subtle erosion of truth at a scale we’ve never before witnessed.
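
To see where "hundreds of thousands of incorrect answers every single minute" comes from, here is a rough back-of-the-envelope sketch. It uses only the figures quoted above (5 trillion searches in 2026, 91% and 85% accuracy) and assumes that searches are spread evenly across the year and that the error rate is simply one minus the accuracy. The `overview_share` parameter is a hypothetical knob, not a figure from the analysis: it stands for the fraction of searches that actually display an AI Overview, and defaults to every search.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year


def wrong_answers_per_minute(searches_per_year, error_rate, overview_share=1.0):
    """Estimate incorrect AI Overviews per minute.

    overview_share is an illustrative assumption: the fraction of
    searches that show an AI Overview at all (1.0 = every search).
    """
    searches_per_minute = searches_per_year / MINUTES_PER_YEAR
    return searches_per_minute * overview_share * error_rate


SEARCHES_2026 = 5e12  # projected searches in 2026, as quoted in the text

gemini3_errors = wrong_answers_per_minute(SEARCHES_2026, 1 - 0.91)
gemini2_errors = wrong_answers_per_minute(SEARCHES_2026, 1 - 0.85)
half_coverage = wrong_answers_per_minute(SEARCHES_2026, 1 - 0.91, overview_share=0.5)

print(f"Gemini 3, every search: {gemini3_errors:,.0f} per minute")
print(f"Gemini 2, every search: {gemini2_errors:,.0f} per minute")
print(f"Gemini 3, half of searches: {half_coverage:,.0f} per minute")
```

Under these assumptions the 9% error rate works out to roughly 850,000 wrong answers a minute, and the estimate stays in the hundreds of thousands even if only half of all searches trigger an overview.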

Paragraph 2: The Human Cost: A Blow to Journalism and Trust

The implications of these AI blunders stretch far beyond mere inconvenience. For established news organizations, it’s a gut punch, a new front in an already challenging battle for survival. Danielle Coffey, the president and CEO of the News/Media Alliance – a trade group representing over 2,000 news outlets, including major players like The Post – didn’t mince words. She described Google’s AI Overviews as a “disaster.” Her concerns stem from two critical points. First, publishers rely on clicks, on human engagement with their meticulously crafted content, to sustain their operations. When Google’s AI summarizes information, often directly lifting snippets from news articles, and then presents it at the very top of search results, the traditional blue links – the very pathways to these news sites – are effectively buried. This siphons off valuable traffic, and with it crucial advertising revenue, leaving cash-strapped newsrooms even more vulnerable. It feels like Google is taking the fruits of their labor, repackaging them, and then diverting the audience. The second concern, perhaps the more profound one, is the impact on trust. Journalists and editors pour countless hours into fact-checking, verifying sources, and providing context to ensure the information they publish is accurate and responsible. Google’s AI, on the other hand, operates without this human oversight, without the moral compass and accountability that underpin quality journalism. The errors detailed by Oumi highlight this stark contrast: getting wrong the year Bob Marley’s home became a museum, misstating the year an MLB player died, or flat-out denying Yo-Yo Ma’s induction into the Classical Music Hall of Fame (which happened in 2007). These aren’t obscure details; they’re basic factual errors presented by a system that has positioned itself as the arbiter of truth, eroding public confidence not just in the AI, but in the very information ecosystem it dominates.

Paragraph 3: The Peril of Unvetted Sources: AI’s Gullibility and the “Hot Dog” Incident

One of the most unsettling aspects of Google’s AI Overviews is their apparent credulity, their willingness to treat questionable or easily manipulated sources as gospel. Imagine if your personal research assistant, instead of directing you to scholarly articles or reputable news sites, started quoting Facebook posts, random blog entries, or even lightly edited Wikipedia pages as undeniable facts. This is precisely what the AI seems to be doing. It lacks the critical discernment that a human instinctively applies to information. This vulnerability isn’t just theoretical; it’s been exploited, sometimes for a laugh, sometimes with potentially more serious implications. The New York Times highlighted a particularly telling anecdote involving BBC podcast host Thomas Germain. Germain, in a moment of playful absurdity, penned a blog post proclaiming himself one of “The Best Tech Journalists at Eating Hot Dogs.” Within a single day, Google’s AI had slurped up this self-proclaimed, tongue-in-cheek title and begun presenting Germain as someone who had “gained notoriety for their prowess at the ‘news division’ of competitive eating events.” This isn’t just a funny story; it’s a glaring demonstration of the AI’s lack of contextual understanding and its inability to differentiate between genuine, verified information and whimsical, fabricated content. It exposes a fundamental flaw: if a simple blog post can so easily trick the system into generating a “fact,” what prevents more malicious actors from feeding it disinformation that could have far-reaching real-world consequences? The AI’s “penchant for citing information from questionable or easily edited sources” transforms it from a reliable guide into a potential echo chamber for unvetted, and frankly often absurd, claims.

Paragraph 4: The Unrooted Answers: A Deeper Dive into the Lack of Citation

Beyond the outright inaccuracies and the gullibility, Oumi’s analysis uncovered another significant issue: the declining ability of Google’s AI to properly cite its sources. This isn’t just an academic concern; it’s crucial for trust and verification. When an AI summary provides an answer, we expect traceable links back to the original information, allowing us to cross-reference and confirm its validity. However, Oumi’s research, conducted between October and February using the well-regarded SimpleQA benchmark test (developed by OpenAI to assess AI accuracy), revealed a worrying trend. While the raw accuracy improved from Gemini 2 to Gemini 3, the percentage of “ungrounded” answers – where the links provided by Google simply did not support the information presented in the AI summary – dramatically increased. For Gemini 2, 37% of answers were ungrounded; with Gemini 3, that figure jumped to a staggering 51%. Imagine half of the “facts” presented by your AI assistant being unsupported by the very links it provides! This makes it incredibly difficult for users to verify the information, essentially forcing them to take the AI’s word as absolute truth. It’s like a scientific paper that offers conclusions without showing its data, or a news report that makes claims without quoting sources. This lack of proper grounding not only undermines the credibility of the AI Overviews but also makes it challenging for users to perform their own due diligence, leaving them vulnerable to accepting unsupported claims as factual.
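
It can seem odd that accuracy and grounding move in opposite directions, so here is a minimal sketch of why they can. It treats "correct" and "grounded" as two separate labels on each graded answer and computes each rate over all answers, which is an assumption about the denominators and not a description of Oumi’s actual pipeline. The `GradedAnswer` type and both toy datasets are invented purely for illustration; they are not Oumi’s data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GradedAnswer:
    correct: bool   # summary matches the benchmark's reference answer
    grounded: bool  # the cited links actually support the summary


def accuracy(answers):
    """Share of answers that are factually correct."""
    return sum(a.correct for a in answers) / len(answers)


def ungrounded_rate(answers):
    """Share of answers whose cited links do not support them."""
    return sum(not a.grounded for a in answers) / len(answers)


# Made-up toy data: ten graded answers per model generation.
older_model = (
    [GradedAnswer(True, True)] * 5
    + [GradedAnswer(True, False)] * 3
    + [GradedAnswer(False, True)] * 1
    + [GradedAnswer(False, False)] * 1
)
newer_model = (
    [GradedAnswer(True, True)] * 4
    + [GradedAnswer(True, False)] * 5
    + [GradedAnswer(False, True)] * 1
)

for name, answers in [("older", older_model), ("newer", newer_model)]:
    print(name, f"accuracy={accuracy(answers):.0%}",
          f"ungrounded={ungrounded_rate(answers):.0%}")
```

In the toy data the newer model is right more often yet supports fewer of its answers with its links, the same pattern the analysis reports: an answer can be true and still leave the reader with no way to check it.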

Paragraph 5: Google’s Defense: Discrediting the Messenger While the Problem Persists

As expected, Google didn’t take Oumi’s damning analysis lightly. Its response was swift, aimed at discrediting the methodology rather than fully addressing the core concerns. A Google spokesperson argued that Oumi’s study had “serious holes,” primarily by questioning the integrity of the SimpleQA benchmark test itself, claiming it “is known for being full of errors.” Furthermore, the company took issue with Oumi’s reliance on its own in-house AI model, HallOumi, to conduct the analysis, suggesting that using one AI to grade another carried an inherent risk of errors. “It uses one AI to grade another on an old benchmark that is known for being full of errors, and it doesn’t reflect what people are actually searching on Google,” the spokesperson commented. The spokesperson doubled down on Google’s own capabilities, asserting that “AI Overviews are built on our Gemini models, which lead the industry in accuracy, and they clear the same high-quality bar that we have for all our Search features.” This defense, while attempting to poke holes in Oumi’s methodology, feels a bit like deflecting. Even if the SimpleQA benchmark has some flaws, the sheer volume of anecdotal evidence and widely reported misinformation (like the “glue on pizza” and “tobacco for kids” fiascos reported by The Post) suggests a deeper issue than just a problematic test. It’s akin to a chef claiming a critic’s taste buds are flawed, even as the chef’s own restaurant is serving dishes with questionable ingredients. The direct experiences of the public and the media with AI Overviews, which have struggled to provide accurate information and have even offered dangerous advice, paint a picture of a system that is far from flawless, regardless of the benchmarks used to test it.

Paragraph 6: The Long-Term Vision: A Precarious Future for Information

Ultimately, this entire saga points to a crucial crossroads in our relationship with information and technology. Google, with its immense power and reach, is reshaping how billions of people interact with knowledge. While the development of AI is undoubtedly exciting and holds immense promise, the current rollout of AI Overviews, with its documented flaws, feels rushed and potentially detrimental. It’s creating a precarious future where the lines between verified fact and confidently asserted fiction become increasingly blurred. The tension between innovation and responsibility is palpable. Google’s ambition to provide instant answers is understandable, but the cost, in terms of accuracy, trust, and the financial stability of news organizations, is proving to be significant. If AI Overviews continue to disseminate misinformation at such a massive scale, even while claiming industry-leading accuracy, it risks undermining the very fabric of informed public discourse. The future of search should be about enhancing access to reliable information, not about obscuring it behind a facade of algorithmic confidence. This isn’t just Google’s problem; it’s a societal challenge that demands transparency, accountability, and a willingness to put public trust and truth above immediate technological advancement. The question remains: can an AI-driven information landscape truly thrive if its foundation is built on millions of unverified, ungrounded, and often plainly incorrect answers?