Let’s talk about something that’s quietly shaping our online world every single day: the smart “brains” that power our social media. You know, those clever computer programs, powered by Artificial Intelligence (AI) and Machine Learning (ML), that decide what we see and what we don’t. They’re like the invisible DJs of our digital lives, not just picking the tunes – which in this case are posts and ads – but also deciding how loud to play them. On one hand, they help platforms manage the sheer avalanche of content, trying to keep things clean and relevant. On the other hand, a huge part of their job is to keep us hooked, to maximize our “engagement.” And that’s where things get a bit tricky. Because what often keeps us most engaged? Well, sometimes it’s the shocking, the sensational, the stuff that makes us gasp or get angry. And unfortunately, that often includes misinformation and outright disinformation – those sneaky, false narratives that spread like wildfire.

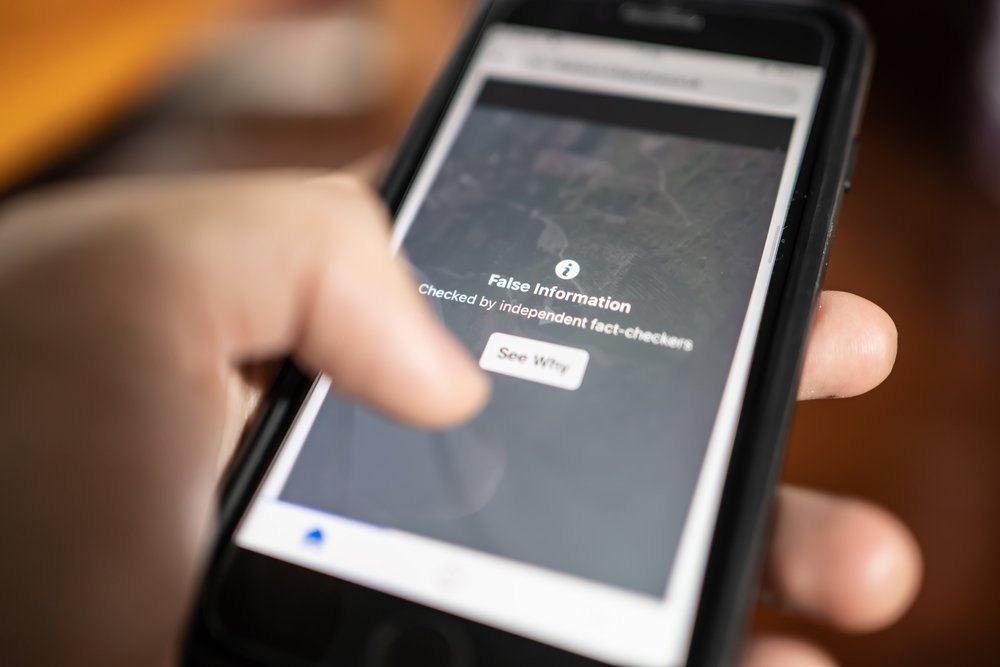

So, how do these “smart” systems inadvertently become super-spreaders of misleading stuff? It happens in a few key ways. First, think about the ads you see. Those AI/ML tools are incredibly good at figuring out what you might be interested in, and then showing you ads tailored to that. While that seems harmless for buying shoes, the same powerful targeting can be used to push misleading messages or even political propaganda to specific groups of people. Second, content moderation – the act of deciding what stays up and what comes down – is increasingly reliant on these automated systems. They’re supposed to catch harmful content, but they can be overwhelmed, and sometimes the algorithms miss nuance or context, allowing false narratives to slip through. Finally, and perhaps most insidiously, there are the recommendation algorithms. These are the systems that suggest “you might also like…” or “here’s what’s trending.” They’re constantly learning what keeps you scrolling, and if sensational misinformation gets a lot of clicks and shares, the algorithm, in its quest for engagement, might just decide to show you more of it, effectively amplifying its reach. It’s like a well-intentioned robot that accidentally opens the floodgates to a lot of questionable content simply because it sees people are paying attention to it.
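To make that feedback loop a bit more concrete, here’s a toy sketch in Python. It isn’t how any real platform ranks content. The `Post` fields, the engagement rates, and the scoring rule are all made-up assumptions. Each round, the sketch shows everyone the single post with the best engagement-per-view so far, and it never looks at whether a post is accurate.

```python
from dataclasses import dataclass


@dataclass
class Post:
    name: str
    engagement_rate: float  # hypothetical: share of viewers who click, comment, or share
    impressions: float = 0.0
    engagements: float = 0.0


def score(post: Post) -> float:
    # Engagement per view observed so far, with a small prior (1 out of 2)
    # so that posts nobody has seen yet still get a first chance.
    return (post.engagements + 1.0) / (post.impressions + 2.0)


def run_feed(posts: list[Post], rounds: int = 50, audience: int = 1000) -> list[Post]:
    for _ in range(rounds):
        # Pick the top post purely by engagement; accuracy never enters the score.
        # On ties, max() keeps the first post in list order.
        top = max(posts, key=score)
        top.impressions += audience
        # Use the expected engagement (no randomness) so the run is repeatable.
        top.engagements += audience * top.engagement_rate
    return posts


feed = [Post("accurate", 0.05), Post("neutral", 0.10), Post("sensational", 0.20)]
for post in run_feed(feed):
    print(post.name, int(post.impressions))
# accurate 1000
# neutral 1000
# sensational 48000
```

All three posts start on equal footing, yet after a couple of rounds the “sensational” one takes nearly every slot, simply because people react to it more. That’s the amplification loop in miniature: the ranker isn’t trying to spread anything false, it just rewards whatever gets attention.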

The good news is that people are starting to wake up to this problem. It’s not just a niche tech issue anymore; it’s a societal one. Lawmakers in both the United States and the European Union are beginning to draw up plans and policies to rein in these powerful algorithms. They’re looking at ways to hold platforms accountable and make sure these systems aren’t just running wild. These legislative proposals are a crucial step because they signal that policymakers understand how serious the situation is and are considering putting some guardrails on what has been a digital Wild West. It’s about more than just telling platforms to “do better”; it’s about crafting laws that genuinely push for healthier online environments.

Our coalition, a group of people deeply concerned about this algorithmic amplification of misleading information, has been thinking hard about what needs to happen next. We believe there are several key actions that platform companies and policymakers can take. For the platforms themselves, the first step is transparency: real, honest transparency. We need to see more clearly how their algorithms work, what their content policies are, and how effective those policies actually are. It’s like asking a restaurant to show you its kitchen; we want to know what’s really going on behind the scenes. Platforms also need to invest far more in the human side of moderation and fact-checking, and make sure their AI tools actually work rather than serving as a cheap substitute for human judgment. And we, the users, deserve more robust controls over what we see and what’s recommended to us. Imagine being able to fine-tune your feed to actively filter out sensationalism or unverified content. That’s the kind of power we’re talking about.

Beyond just user controls, there’s another crucial piece of the puzzle: empowering researchers. Right now, it’s incredibly difficult for independent researchers to study how misinformation spreads because they don’t have access to the necessary data or tools from the platforms. It’s like trying to understand an epidemic without access to patient records. Platforms need to open up, providing meaningful data and robust tools so that academics and experts can truly analyze the problem and help us all understand how to fix it. We acknowledge that platforms have taken some baby steps in these directions, perhaps adding a “fact-check” label here or tweaking an algorithm there, but our coalition firmly believes they can, and must, do much more. The scale of the problem demands a more proactive and fundamental shift in their approach.

Ultimately, though, we can’t ignore the elephant in the digital room: money. Social media platforms are, at their core, advertising businesses. Their entire model is built around keeping us engaged so they can show us more ads. And unfortunately, that financial incentive often clashes with the goal of aggressively combating misinformation, especially when sensational content drives engagement. This is where policymakers really need to step up. Legislation that promotes greater transparency and accountability isn’t just about making platforms “behave better”; it’s about creating an environment where financial incentives are aligned with the public good rather than stacked against it. By shining a light on platform practices and holding companies accountable for the societal impact of their algorithms, lawmakers can encourage platforms to prioritize the fight against misleading information and help make our online spaces more trustworthy and healthier for everyone.