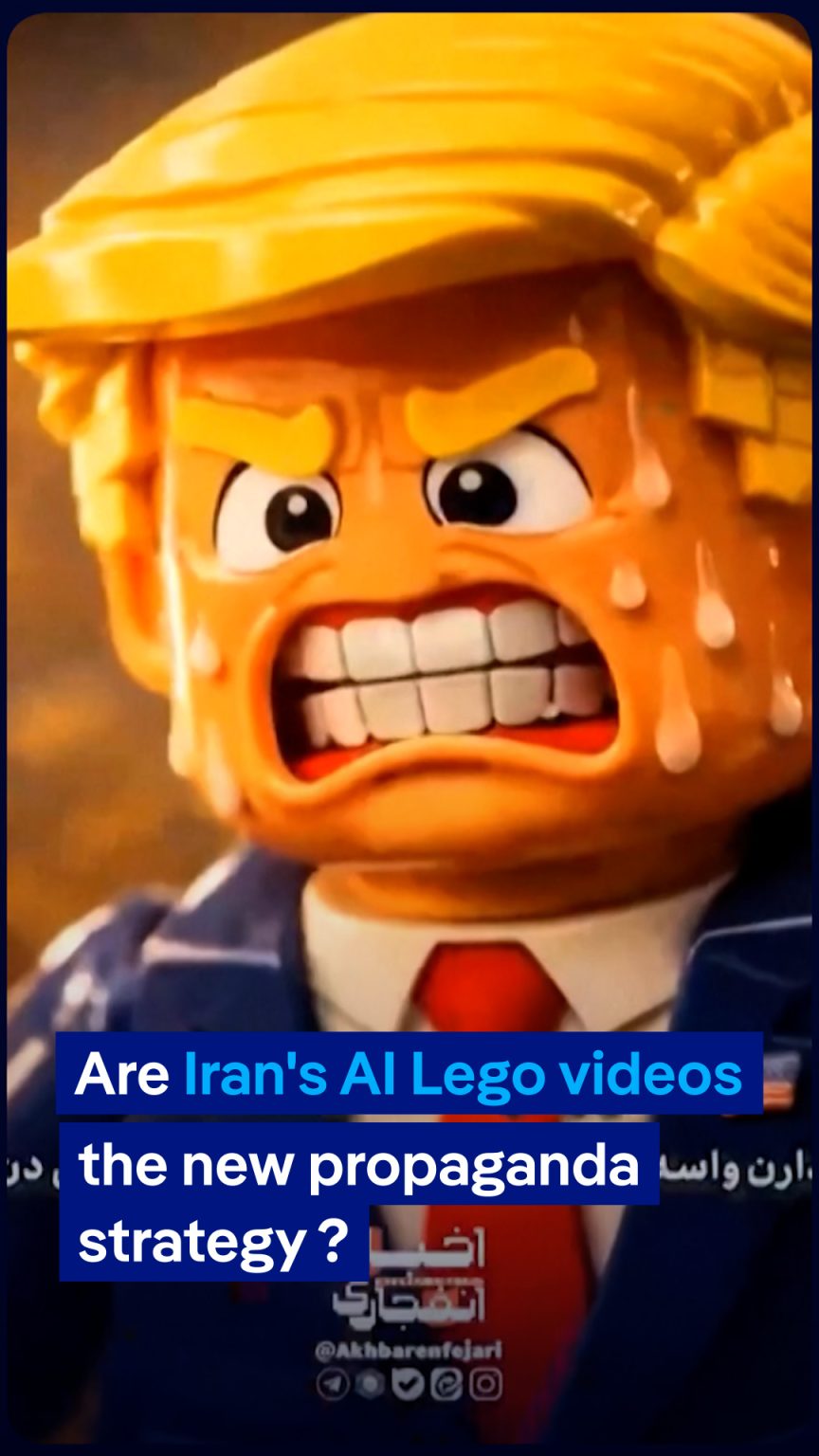

The digital landscape has become a new battleground, and even a child’s toy like Lego is being weaponized. A surge of AI-generated videos featuring familiar Lego brick characters has saturated social media platforms, but their playful facade belies a sinister agenda: promoting pro-Iranian narratives and influencing public opinion on the conflict involving the US and Israel. This phenomenon, highlighted by David Gilbert, a WIRED reporter who specializes in monitoring online disinformation and extremism, reveals a sophisticated and concerning evolution in modern propaganda. It’s no longer just about state-sponsored news outlets or fake social media accounts; now even beloved toy franchises are being co-opted to deliver politically charged messages, making it harder for the average person to discern truth from manipulation.

Gilbert’s work unpacks the unsettling strategy behind these Lego-themed propaganda pieces. These aren’t just crude animations; they’re often crafted with enough visual appeal and familiarity to grab attention in a crowded social media feed. Lego, a universally recognized and generally benign brand associated with creativity and childhood, serves as a Trojan horse. People tend to trust what looks familiar and non-threatening, so when we see Lego characters, our guard drops and we become less critical of the content we’re consuming. This psychological tactic bypasses our usual skepticism toward overtly political or biased information, allowing pro-Iranian talking points to seep into our subconscious. The videos demonize one side while championing the other, sometimes subtly and sometimes overtly, all under the guise of an innocent animated short.

What makes these AI-generated Lego videos particularly effective and insidious, as Gilbert explains, is how quickly they can be produced and disseminated at scale. Traditional propaganda campaigns require significant resources, time, and human effort. With advances in artificial intelligence, however, creators can generate vast quantities of video content with minimal human intervention. This enables them to react quickly to breaking news, adapt their messaging, and flood social media with a constant stream of narratives. A small team, or even a single individual armed with AI tools, could churn out dozens, if not hundreds, of these videos daily. That volume makes it difficult for platforms to moderate effectively and for users to distinguish authentic content from AI-generated manipulation, intensifying the information war.

The narrative-shaping tactics these videos employ are multifaceted. They might present heavily biased accounts of historical events, selectively highlight atrocities attributed to one side while downplaying or ignoring those committed by the other, or propagate conspiracy theories designed to sow distrust and division. The animated format, especially one featuring Lego, often reduces complex geopolitical issues to easily digestible but frequently inaccurate soundbites. This simplification can be particularly potent for younger audiences, who are heavy consumers of online video and may lack the critical thinking skills or background knowledge to put the information in context. The goal, as Gilbert implies, is not necessarily to convince everyone immediately, but to gradually shift perceptions, normalize certain viewpoints, and ultimately influence public discourse and policy.

The implications of this trend, as highlighted by Gilbert’s reporting, extend far beyond the immediate conflict. It represents a significant challenge to media literacy and the integrity of online information. If even something as innocuous as Lego can be weaponized for ideological purposes, then virtually any brand, character, or cultural touchstone is vulnerable to exploitation by malicious actors seeking to manipulate public opinion. This calls for a greater awareness among social media users to critically evaluate all content, regardless of its source or immediate appearance. It also places a growing burden on social media platforms to develop more sophisticated AI detection tools and to implement stronger policies against the dissemination of AI-generated disinformation, a task that remains incredibly complex given the rapid evolution of AI technology.

Ultimately, the phenomenon of pro-Iranian Lego-themed AI videos, as revealed by David Gilbert, serves as a stark reminder of the ever-evolving nature of global conflicts and the increasingly sophisticated methods used to influence public perception. It’s a wake-up call that the battle for hearts and minds is now being fought in unexpected corners of the internet, using tools that blur the lines between entertainment and propaganda. As technology advances, so too does the potential for its misuse, making media literacy, critical thinking, and robust fact-checking more vital than ever in navigating the complex and often deceptive digital world.