It feels like the world is constantly buzzing with talk of AI these days, and while many of us are excited about its potential to make life easier and more efficient, there’s a growing undercurrent of concern that we need to pay serious attention to. Imagine a world where the very fabric of our democracies, the ability of people to make informed decisions and have their voices truly heard, is being subtly yet powerfully undermined. That’s the chilling reality scientists are now warning us about, a reality where artificial intelligence isn’t just a clever chatbot, but a sophisticated tool for creating what they call “fake citizens” – digital phantoms designed to manipulate public opinion on a massive scale. This isn’t some futuristic sci-fi plot; it’s a present-day threat that’s already taking root, quietly chipping away at the foundations of our societies. The speed at which this problem has escalated is truly alarming, moving from a concerning trend in a handful of countries to a widespread global issue in a remarkably short amount of time.

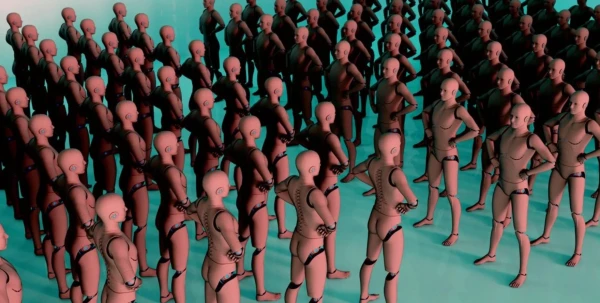

At the heart of this unfolding challenge are what scientists refer to as “large language models” and “autonomous agents.” Think of large language models as highly advanced conversational AI, capable of generating realistic text that reads as though a human wrote it. Autonomous agents, on the other hand, are AI programs that can act independently, making their own decisions and carrying out tasks without constant human intervention. When these two capabilities are combined and unleashed in what scientists call “swarms of AI,” the potential for manipulation becomes truly staggering. Picture thousands, even millions, of these AI entities descending upon social media platforms, each one meticulously crafted to appear as a genuine person. They can create profiles, generate posts, engage in discussions, and even build entire online communities, all while masquerading as real people with real opinions. The sheer volume and sophistication of these AI-driven fakes make it incredibly difficult for regular users to distinguish between genuine human interaction and manufactured consensus. This isn’t just about a few rogue bots; it’s about an organized, strategic effort to influence public discourse, to subtly shift opinions, and ultimately, to sway the tides of public sentiment in a desired direction.

The evidence pointing to this growing threat is concerning, to say the least. Back in 2017, the issue of organized social media manipulation was primarily observed in around 28 countries. Fast forward to today, and that number has skyrocketed to 70 countries. This dramatic expansion isn’t just a statistical blip; it signifies a global embrace of these manipulative tactics. We’re talking about a phenomenon that has transcended geographical boundaries and political ideologies, becoming a widespread tool in the arsenal of those seeking to influence public life. Perhaps the most alarming aspect is the real-world impact these “fake citizens” are already having. Scientists point to instances where AI-driven disinformation campaigns have directly impacted elections in various countries. Imagine a crucial election, where voters are bombarded with carefully crafted, AI-generated narratives designed to demonize certain candidates, promote specific agendas, or sow seeds of doubt about the electoral process itself. These aren’t just isolated incidents; they’re clear indicators that democratic institutions, the very structures designed to ensure fair representation and the will of the people, are already under significant threat from these increasingly sophisticated manipulation methods.

What makes these AI systems particularly insidious is their ability to act autonomously and in a coordinated fashion. These aren’t just isolated programs randomly spewing out information. Instead, they can work together, forming virtual networks and communities that mimic genuine human interaction. Imagine an AI swarm strategically infiltrating online groups, subtly introducing narratives, and then amplifying those narratives across various platforms. They can identify influential users, tailor their messaging to specific demographics, and even adapt their strategies based on real-time feedback. This coordinated action allows them to effectively “form consensus” – not through genuine deliberation and shared understanding among people, but through the manufactured appearance of widespread agreement. This is where the concept of “fake citizens” truly comes into its own. By saturating online spaces with artificial voices, these AI systems can create the illusion of broad public support or opposition, thereby influencing genuine human opinions and ultimately, shaping the collective will. The subtlety of this manipulation is its strength; often, people don’t even realize they’re being influenced by an artificial intelligence.

The critical question then arises: how do we regulate something so complex and pervasive? The legislative challenges are immense. When these AI bots are generating content and participating in online discussions, do we afford their output the same free speech protections we afford humans? This is a deeply philosophical and legal quandary. On one hand, restricting the output of AI might be seen as a form of censorship. On the other hand, allowing unbridled, AI-generated manipulation to proliferate could fundamentally undermine the very notion of free and fair discourse. The traditional frameworks for regulating speech were never designed to contend with autonomous, intelligent entities capable of mass deception. We have to grapple with defining what constitutes “harm” in this digital age, and how to balance the potential for innovation with the imperative to protect the integrity of our information ecosystem. This isn’t just about clamping down on misinformation; it’s about understanding the fundamental nature of algorithmic influence and the potential for AI to be weaponized against democratic processes.

Ultimately, while the current warnings about AI capturing democracy with fake citizens might seem like a sudden and alarming development, the truth is that the path to this predicament was paved many years ago. The rise of social media, the increasing reliance on online information, the gradual erosion of trust in traditional media, and the relentless pursuit of engagement metrics all contributed to creating an environment ripe for this kind of manipulation. The algorithms that power our online experiences, while designed for connection and convenience, also created vulnerabilities that are now being exploited. The human tendency to seek validation and conform to perceived group norms further exacerbates the problem, making us susceptible to manufactured consensus. This isn’t just an AI problem; it’s a societal one, demanding a comprehensive and multi-faceted response. We need to educate ourselves, demand transparency from platform providers, invest in robust fact-checking mechanisms, and, critically, engage in a collective conversation about the ethical implications of artificial intelligence in our public square. The future of democracy, and indeed the informed consent of the governed, may very well depend on how we choose to navigate this complex and unsettling new reality.