Peeking into the Future: How “Visual AI” is Solving Real-World Problems and Building a Smarter Tomorrow

Imagine a world where cameras don’t just record, but understand. Where a flick of a wrist or a misplaced piece of trash triggers an intelligent response. This isn’t just science fiction anymore; it’s the groundbreaking reality being built by the brilliant minds at the Electronics and Telecommunications Research Institute (ETRI), specifically their Visual Intelligence Research Section. These folks are essentially teaching computers to “see” and “think” like us, and in some ways, even better. They’re crafting what they call “Visual AI,” a technology so versatile, it can tackle everything from catching illegal dumpers to safeguarding self-driving cars, and even helping doctors diagnose illness.

At its core, Visual AI is about giving computers the gift of sight: not just capturing pixels, but understanding what those pixels represent. Think about how effortlessly your own eyes tell a cat from a dog, or happiness from sadness. Our brains do this in a flash, an intricate dance of shape recognition, classification, and even emotional judgment. For a computer, this seemingly simple act is a monumental challenge, requiring complex algorithms that break down images, pinpoint features, and then meticulously categorize every detail. As Lee Yong-ju, the head of ETRI’s Visual Intelligence Research Section, puts it, “the devil is in the details.” Taking this technology from basic identification to sophisticated interpretation is where the real magic, and the hardest work, happens. The team’s efforts span many applications, from thermal cameras used during outbreaks like COVID-19 to tools that analyze visual and audio information to check whether what we see and hear online is authentic, countering the spread of fake news.

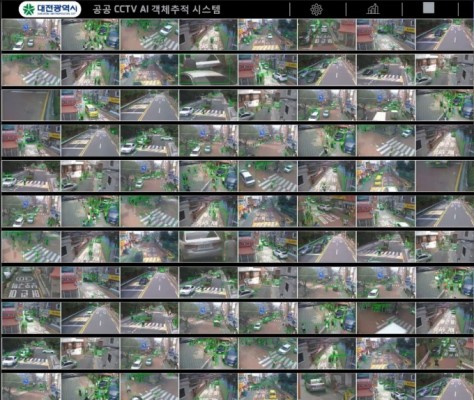

One of their most impactful creations, born out of a very human problem, is “Deep View.” Back in 2018, the Eunpyeong-gu district office in Seoul was grappling with a persistent issue: illegal waste dumping, turning alleys and utility poles into unsightly and unhygienic spots. They reached out to ETRI, practically asking, “Can you see a solution to this mess?” And ETRI delivered. Deep View isn’t just a camera; it’s an intelligent observer. If someone unloads trash in front of a Deep View-equipped CCTV, the AI doesn’t just record it. It understands it. A “click” sound is heard, followed by a stern warning: “A photo has been taken. If you do not remove the dumped item, you will be punished according to relevant laws.” It’s an immediate, intelligent deterrent, and it’s making a real difference. But Deep View’s potential doesn’t stop there. Lee and his team are now piloting a project with Daejeon City to use this technology to monitor intoxicated individuals, showcasing their dedication to applying Visual AI to solve a wide range of real-world, everyday challenges that affect communities.

To make Visual AI truly insightful, it needs to go beyond simply recognizing an object. It needs to understand the object’s individual parts and how they move. That’s where “CenterMask” comes in, a revolutionary segmentation technology unveiled at the prestigious CVPR 2020 international conference. Imagine not just seeing a person, but understanding that their arm is raised, or their leg is bent. CenterMask allows Visual AI to meticulously segment different parts within a single object, capturing movements down to the joint level. Lee explains that for an AI to truly understand human poses, this segmentation is crucial, a sophisticated feat that even tech giants like Facebook are investing in. The implications are vast and exciting. For self-driving cars, CenterMask is a game-changer. A vehicle camera equipped with this technology can interpret a pedestrian’s arm and leg movements much more accurately, enhancing safety by anticipating what the pedestrian is about to do. In the medical field, CenterMask holds immense promise for automatically detecting lesions in medical images. Imagine AI analyzing scans, meticulously modeling human tissue, and pinpointing abnormalities like cerebral hemorrhages or strokes. This isn’t just about faster diagnosis; it’s about more accurate, life-saving insights. Lee’s ultimate vision is even more ambitious: a “predictive Visual AI” akin to something out of the movie “Minority Report,” where even our thoughts and memories could be visually stored and utilized.
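To make the “arm is raised” idea concrete, here is a toy sketch of what a program can do once it has a part-by-part segmentation of a person. This is not CenterMask itself, which is a neural network that produces the masks from a real image. The tiny hand-drawn grid, the label numbers, and the function names below are all assumptions made up for illustration.

```python
# Toy "part map": each cell is one pixel, labelled with the body part it belongs to.
# 0 = background, 1 = torso, 2 = arm. Both layouts are invented for illustration;
# a real system would get these labels from a segmentation network.
TORSO, ARM = 1, 2

ARM_UP = [
    [0, 0, 0, 2, 0],
    [0, 0, 2, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
]

ARM_DOWN = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 2, 0],
    [0, 1, 1, 2, 0],
]


def top_row(part_map, part_id):
    """Return the row index of the highest pixel carrying the given part label."""
    rows = [r for r, row in enumerate(part_map) if part_id in row]
    if not rows:
        raise ValueError(f"part {part_id} does not appear in the map")
    return min(rows)  # row 0 is the top of the image


def arm_is_raised(part_map):
    """Call the arm 'raised' if it reaches higher in the image than the torso does."""
    return top_row(part_map, ARM) < top_row(part_map, TORSO)


print(arm_is_raised(ARM_UP))
print(arm_is_raised(ARM_DOWN))
```

The point of the sketch is only that per-part labels turn a vague question (“what is this person doing?”) into simple geometry. Producing those labels reliably from a real camera image is the hard part.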

The relentless pursuit of innovation means constantly pushing boundaries, and one of the biggest challenges in Visual AI today is “model lightweighting.” Think of Visual AI as having a brain made of neural networks. To make these “brains” smarter and more accurate, researchers kept adding more and more complex layers, making them heavy, clunky, and power-hungry. It became a vicious cycle. But now, the focus has shifted towards creating lean, efficient “brains”: lightweight neural network models that can perform complex tasks without demanding excessive resources. ETRI’s Visual Intelligence Research Section, in collaboration with a KAIST team, proved their mastery in this area by sweeping 2nd through 4th places at the “MicroNet Challenge,” a prestigious competition held at NeurIPS, one of the world’s top AI conferences. Their achievement wasn’t just about accuracy; it was about designing models that were both powerful and incredibly efficient. As Lee reveals, “AI needs to run in low-power, low-spec environments on portable devices, so the smaller the model’s size and the faster the computation speed, the better.” Their secret? A groundbreaking new learning method that improved upon existing approaches by an astonishing factor of 200. This isn’t just a technical victory; it’s a leap forward in making Visual AI accessible and practical for a future where intelligent systems are woven into the fabric of our everyday lives, from the devices in our pockets to the automated systems that keep our communities safe and efficient.
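For a feel of what “lightweight” means in numbers, the back-of-the-envelope sketch below counts the weights in a single convolution layer before and after two widely used slimming tricks: swapping an ordinary convolution for a depthwise-separable one, and storing weights in 8 bits instead of 32. These are general, well-known techniques chosen for illustration, not the learning method ETRI and KAIST used in the MicroNet Challenge, and the layer dimensions are hypothetical.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in an ordinary kernel x kernel convolution (bias ignored)."""
    return kernel * kernel * c_in * c_out


def separable_conv_params(kernel, c_in, c_out):
    """Weights in a depthwise-separable replacement (bias ignored)."""
    depthwise = kernel * kernel * c_in  # one kernel x kernel filter per input channel
    pointwise = c_in * c_out            # 1x1 convolution that mixes the channels
    return depthwise + pointwise


def size_in_megabytes(params, bits_per_weight):
    """Storage needed for the weights at a given numeric precision."""
    return params * bits_per_weight / 8 / 1_000_000


# Hypothetical layer: 3x3 kernel, 256 input channels, 256 output channels.
heavy = standard_conv_params(3, 256, 256)
light = separable_conv_params(3, 256, 256)

print("standard weights: ", heavy)
print("separable weights:", light)
print("reduction factor: ", round(heavy / light, 1))
print("standard, 32-bit (MB): ", size_in_megabytes(heavy, 32))
print("separable, 8-bit (MB):", size_in_megabytes(light, 8))
```

Fewer weights and fewer bits per weight shrink both the storage a model needs and the arithmetic it performs, which is exactly the trade Lee describes for low-power portable devices. The catch, and the reason competitions like this exist, is keeping accuracy from falling as the model shrinks.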