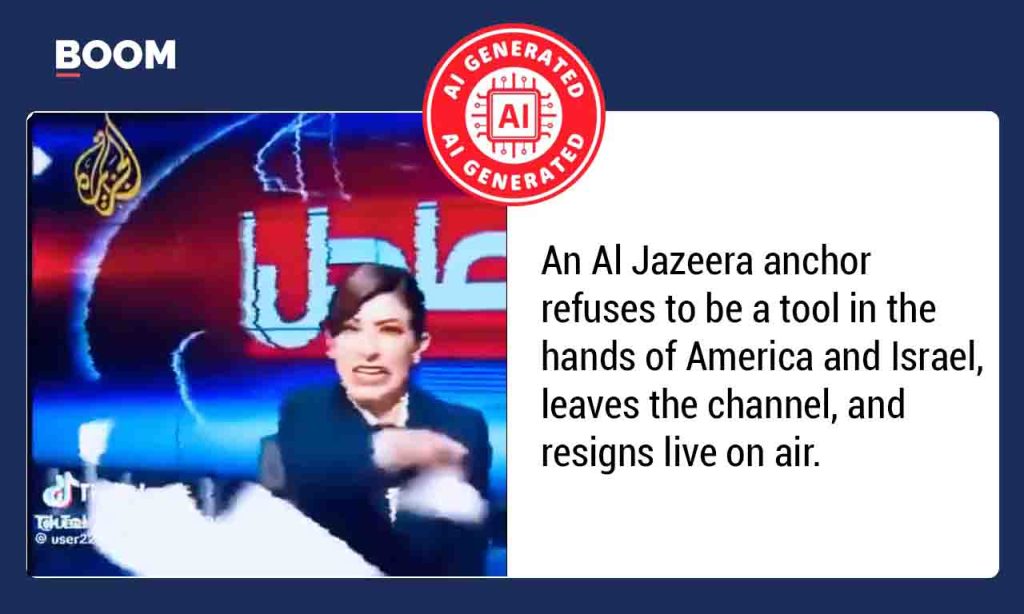

It’s a strange new world we’re living in, where what we see and hear can often be a clever trick of technology rather than a slice of reality. Imagine scrolling through your social media feed and stumbling upon a video that sends a jolt through you: a seemingly distraught news anchor, live on air, dramatically resigning. The caption claims she’s protesting political pressure from the US and Israel during the network’s coverage of a supposed war on Iran, her voice filled with emotion as she declares, “What is being asked of me tonight is not the truth.” It’s the kind of story that immediately grabs your attention, the kind that feels urgent and important, painting a picture of journalistic integrity battling against powerful forces. However, in this digital age, that initial emotional reaction often needs to be followed by a healthy dose of skepticism, because sometimes what looks incredibly real is nothing more than a sophisticated technological illusion designed to deceive and mislead.

Our instincts tell us to believe what our eyes and ears perceive, especially when it’s presented with the gravitas of a news broadcast. The video in question was shared widely on platforms like X (formerly Twitter), accompanied by this very compelling narrative. The supposed courage of the Al Jazeera Arabic anchor, standing up for truth against powerful nations, resonates deeply with many. The idea that a major news network might be under undue influence, compromising its reporting, is a serious allegation, and the image of someone sacrificing their career for their principles is powerful. An English translation of her alleged statement even adds a layer of concrete evidence, making the claim seem even more credible and difficult to simply dismiss as a rumor. This is precisely how misinformation spreads – by tapping into our emotions and presenting a narrative that feels both plausible and significant.

But here’s where the real story begins to unravel, not with a whisper, but with the cold, hard logic of digital forensics. We decided to put this dramatic video to the test, using tools specifically designed to sniff out digital fakes. One of these, the DeepFake-O-Meter from the University at Buffalo’s Media Forensics Lab, is a kind of digital lie detector for videos. When we fed the video into its system, the results were unequivocal: three different detection models screamed “synthetic!” with a fake probability hovering above a startling 97%. Picture a traffic light for fake content: this one was blazing a furious, undeniable red. Then there was the audio. We isolated the anchor’s voice and ran it through the Hiya Deepfake Voice Detector. The verdict? A dismal authenticity score of 1 out of 100. That’s essentially the digital equivalent of a resounding “absolutely fake”: the detector’s way of telling us that the voice was almost certainly generated by artificial intelligence rather than spoken by a human.

Beyond the scientific tools, there were other, more human clues that something was amiss. If such a dramatic event had truly unfolded live on Al Jazeera Arabic, it would have been a global sensation, a story plastered across every major news outlet. Yet, when we scoured Al Jazeera’s recent broadcasts, their official social media channels, and news archives, there was not a single trace of this supposed resignation. No footage, no articles, not even a mention. It was as if the event had never happened in the real world, only in the digital ether of a cleverly constructed deception. This absence of evidence in official channels is a huge red flag that often points toward fabricated content, as major live incidents rarely go unreported by the very organizations involved.

Moreover, a closer look at the video itself revealed subtle, yet significant, technical glitches that are inconsistent with a professional news broadcast. The visuals and audio seemed to be playing at an unnaturally fast pace, almost as if someone had cranked up the speed dial. The background frames, which should have been crisp and clear from a high-definition studio camera, were instead pixelated and fuzzy, lacking the kind of detail you’d expect from a major news network. These visual and auditory anomalies are tell-tale signs of manipulation, suggesting that the footage wasn’t captured live in a studio but rather pieced together or generated synthetically. Real broadcasts are meticulously produced; these imperfections are the digital fingerprints of an artificial creation.

Perhaps the most definitive piece of evidence confirming the video’s synthetic nature came from its origin. We traced the video back to its source on TikTok and found that it was first posted by a handle quite tellingly named AI MASTER WORLD (@master.world1) on March 18, 2026. This isn’t just a random account; it’s a known hub for AI-generated content, openly showcasing its creations. The very name of the account is a declaration of its purpose: to share artificial intelligence-produced material. When the creator of a piece of content is literally advertising that it’s made by AI, it pretty much seals the deal. This discovery served as the final nail in the coffin for the video’s authenticity, transforming a compelling, emotionally charged narrative into a clear-cut case of AI-generated misinformation. It serves as a stark reminder that in our increasingly digital world, critical thinking and verification are more crucial than ever before.