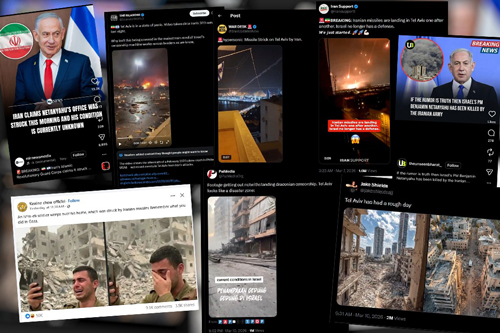

The sheer volume of information online makes it harder and harder to tell what’s real and what’s not, especially around conflicts like the one involving Iran. A flood of fake images and videos is circulating: pictures claiming to show Tel Aviv in ruins from missile strikes, or American soldiers captured by Iranian forces. Imagine scrolling through your social media feed and suddenly seeing a terrifying video of apartment buildings collapsing in Tel Aviv, or the iconic Burj Khalifa in Dubai engulfed in flames. These images, spread rapidly across platforms like X and TikTok, have garnered millions of views, creating widespread panic and confusion. They were all fakes, designed to sow discord and spread misinformation. Experts quickly debunked them, pointing to tell-tale distortions in the videos, inconsistent smoke patterns, and lighting issues that give away their artificial nature. In a stark demonstration of how absurd things have become, the Israeli Prime Minister even had to release a video of himself simply ordering coffee in Jerusalem, just to prove he was alive and well and, quite literally, still had all five fingers, after internet rumors suggested he was dead. It illustrates the lengths to which public figures now go just to counter the relentless tide of online falsehoods.

The scale and impact of these disinformation campaigns are staggering, and they are crafted to manipulate our perceptions. Media watchdogs and psychological warfare specialists explain that these efforts combine massive reach, powerful symbolism, and instantaneous delivery to achieve their goals. Jacki Alexander, from HonestReporting, a media watchdog based in Jerusalem, points out that these fake images and videos seep into the minds of people who simply don’t know any better. She sums up the modern dilemma: “In an age of AI-generated news cycles, people don’t know what to believe anymore, so they believe nothing and everything.” Her remark captures the erosion of trust in media and information sources that we’re currently experiencing. What’s even more insidious is that misleading visuals don’t need to be dramatically altered or AI-generated at all. Sometimes, the most effective “fake” images are simply real ones that have been deliberately mislabeled or taken out of context. Because nothing in such an image is synthetic, tools that detect AI-generated content have nothing to flag, which adds another layer of complexity to the challenge of identifying misinformation. It’s a reminder that not all deception comes from complex technology; sometimes, it’s just a cunning manipulation of the truth already at hand.

Dr. Ron Schleifer, an expert in psychological warfare at Ariel University, offers a crucial insight: AI hasn’t fundamentally changed the nature of propaganda; it has simply “shortened processes.” Before, creating widespread misinformation was a laborious task, requiring extensive resources and time. Now, AI and social media platforms act as accelerants, allowing these campaigns to spread at unparalleled speeds. Think of it like this: in the past, you might have read a newspaper once a day, then news channels started broadcasting hourly, and now, every second, new information (or misinformation) floods our feeds. Social media’s insatiable hunger for constant new content creates fertile ground for these campaigns. It’s no longer just professional PR firms working for the wealthy; now, anyone can generate and disseminate content, blurring the lines between legitimate news and fabricated narratives. This immediacy is precisely what these campaigns exploit: their messages, whether from paid responders, ideologues, or automated bots, create an illusion of widespread consensus before anyone has a chance to properly fact-check. The platforms need to be filled, and in that scramble, carefully crafted disinformation often finds its way in without proper scrutiny.

Noam Bannet, another media expert, sheds light on the more sinister aspects of this visual warfare, especially in the context of the conflict. He notes that cartoons and visual propaganda circulated in parts of the Arab world often resort to dehumanizing Israelis and twisting historical events, even going so far as to liken Gaza to Auschwitz. Historically, such propaganda was limited by traditional publishing methods, but the advent of AI has dramatically amplified its reach and impact. AI-generated visuals, whether depicting missiles striking aircraft carriers or symbolic representations of perceived American imperialism, now possess an eerie sense of realism. They are designed to evoke strong emotional responses and blur the once-clear distinction between illustration and reality. This makes it incredibly difficult for an unsuspecting viewer to differentiate between a piece of political satire and a potentially fabricated depiction of current events. AI makes these visual fabrications feel tangible, creating a powerful emotional punch that can easily sway public opinion, further complicating the already volatile geopolitical landscape.

In this chaotic digital landscape, where false narratives often travel faster than the truth, constant vigilance is absolutely essential. Dr. Schleifer emphasizes that understanding both the technological advancements and the underlying human motivations driving these campaigns is key. He reminds us that while the technology and channels have changed – from leaflets and airplanes to a simple phone in everyone’s pocket – the basic human dynamic of wanting to believe, to be part of a narrative, remains constant. It’s not just about the AI, but about how it taps into our psychological vulnerabilities. Therefore, critical thinking and a healthy dose of skepticism are our best defenses. Jacki Alexander offers practical advice for navigating this treacherous terrain: instead of simply sharing questionable content, screenshot it and add your own commentary, clarifying or questioning its authenticity. This small act of pausing and reflecting can significantly slow the spread of misinformation. Furthermore, reporting dubious content to the platform it appears on is a crucial step in combating its proliferation.

Ultimately, the responsibility falls on each of us to be discerning consumers of information. Alexander’s final piece of advice is perhaps the most fundamental: use common sense. She recounts an instance of a manipulated image showing Israeli President Isaac Herzog with Jeffrey Epstein. What gave it away? Epstein was apparently taking a selfie in the photo, something people simply did not do in the 1990s, when the supposed encounter would have taken place. This highlights that even the most sophisticated fakes often contain subtle inconsistencies, anachronisms, or illogical elements that can be detected if we take the time to look closely. So, the next time you encounter something shocking or highly emotional online, take a deep breath, slow down, and examine it carefully for clues. Ask yourself: Does this make sense? Are there any odd details? Where did this information come from? By cultivating this critical mindset, we can collectively push back against the tide of fake news and reclaim a more truthful and informed understanding of our world.