The Phantom Professor and the African Information War

Imagine a shadowy puppet master, pulling strings in the digital realm and quietly attempting to rewrite the narrative for an entire continent. This isn’t the plot of a spy thriller but a startling reality unearthed by social media giants and investigative journalists. Russian operatives orchestrated a sophisticated campaign built around a fake expert, “Dr. Manuel Godsin,” a seemingly credible academic and geopolitical commentator whose sole purpose was to inject pro-Kremlin propaganda into mainstream African media. This was no clumsy, easily debunked attempt. It was a carefully constructed charade that used artificial intelligence to craft convincing content and present a fabricated persona, all designed to sway public opinion and erode trust in genuine information.

The unraveling of this elaborate deception began with an anonymous tip to Meta, the tech giant behind Facebook. Meta discovered a Russian network targeting African audiences with content that appeared to have been generated by OpenAI’s ChatGPT, an AI model capable of producing human-like text. OpenAI, alerted to this malicious use of its technology, launched its own investigation. Its findings painted a clear picture: a ChatGPT account diligently churning out social media posts and lengthy commentary articles, all focused on geopolitics in sub-Saharan Africa. The operatives prompted mostly in English but occasionally slipped in Russian-language instructions, attributing them to a mysterious “manager.” The case highlights a chilling new frontier in information warfare, in which AI becomes a potent weapon for those seeking to manipulate and control narratives.

Meta wasted no time in dismantling the network, shutting down 37 Facebook accounts and 29 pages that violated its “Coordinated Inauthentic Behavior” policy. Its report detailed the operation’s wide reach across sub-Saharan Africa, including Angola, Ghana, Kenya, South Africa, Mali, Nigeria, Togo, and Uganda. What made this network particularly insidious was its use of AI-generated content to appear authentic. From AI-crafted profile photos to subtle linguistic nuances in its influence materials, every detail was meticulously designed to look genuine to local users. Meta’s proactive sharing of this information with OpenAI enabled a broader investigation, which ultimately led to the network’s removal from both platforms. The collaboration demonstrated the importance of intelligence sharing in combating evolving digital threats.

The digital detective work didn’t stop there. Forensic analysts from Code for Africa (CfA), a data journalism non-profit, independently corroborated the existence of the Russian media campaign. Their groundbreaking report from the African Digital Democracy Observatory (ADDO) revealed a disturbing parallel to tactics previously employed by Chinese state agencies, practices dubbed “information laundering” and “paid punditry.” But the deception went even deeper. The content attributed to “Dr. Godsin” wasn’t confined to social media; it was strategically amplified by fake think-tank websites. These sham organizations then managed to republish the AI-generated articles on globally recognized platforms such as Microsoft-owned MSN, presenting them as legitimate “expert analysis.” This intricate web of misinformation not only spread false narratives but also sought to legitimize them with a veneer of academic authority and broad media reach.

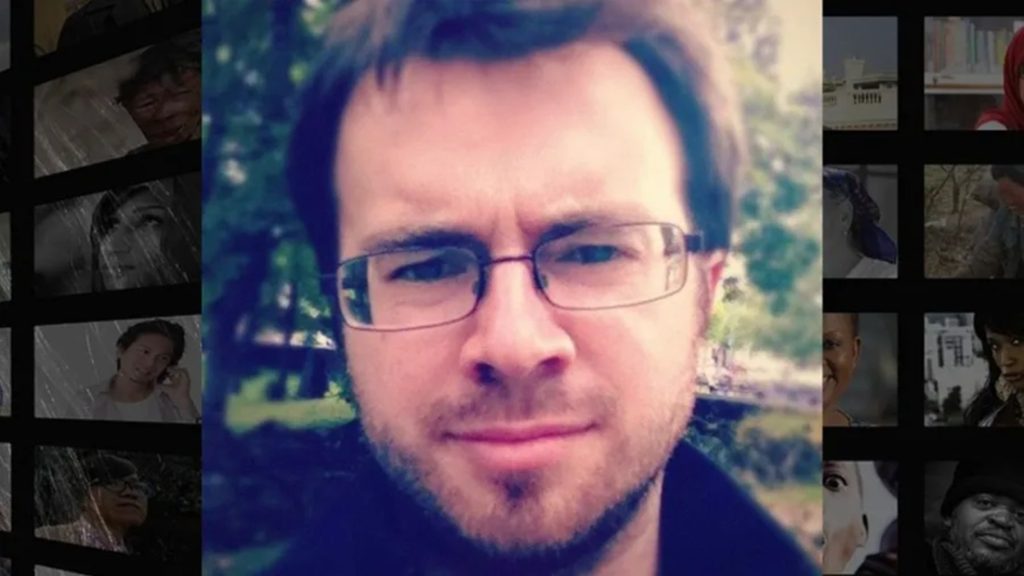

CfA’s investigation delved into the very fabric of “Dr. Manuel Godsin’s” existence. Its analysts meticulously examined 38 manipulated articles, which had been published a staggering 73 times on at least 27 different websites across eight African countries. Their findings unequivocally confirmed that “Dr. Manuel Godsin” was a complete fabrication, a “sockpuppet” created to sneak Russian narratives into mainstream media by masquerading as an independent commentator. His elaborate backstory included a master’s degree in international crisis management from the University of Oslo and a Ph.D. from the University of Bergen. However, neither CfA nor OpenAI could find any record of him at either institution, and the University of Oslo confirmed that it didn’t even offer the supposed master’s program. The final nail in the coffin of Godsin’s credibility came from a reverse-image search of his profile photo, which traced it to Mikhail Malyarov Yurievich, a law student in St. Petersburg, Russia. Even the “several books” Godsin had supposedly written proved fictional, with no literary footprint whatsoever.

The “Godsin operation” was not an isolated incident but one piece of a much larger puzzle, intricately woven into a broader, Kremlin-aligned propaganda machine targeting Africa. ADDO’s conclusion pointed to a central hub in this ecosystem: “African Initiative,” a Moscow-based, state-funded agency launched in 2023. CfA’s analysis revealed a clear pattern: several of Godsin’s articles appeared on mainstream news websites shortly after African Initiative posted similar commentaries on the same subjects. This synchronized timing points to a carefully coordinated campaign to shape African narratives. The implications of such operations are profound. As the ADDO article aptly puts it, “The planting of misinformation, and in some cases of clear disinformation, in mainstream media is not only about pushing a particular narrative. It is also an attack on the integrity of the news ecosystem, with a concomitant effect on trust in news media that serves the ends of actors intent on destroying the integrity of information in general.” This erosion of trust in credible news sources is arguably the most damaging consequence of such sophisticated disinformation campaigns, leaving audiences vulnerable to manipulation and making it increasingly difficult to tell truth from carefully constructed lies.