The recent release of an image depicting a purported “Earthrise” as seen from the Orion spacecraft, specifically related to the Artemis II mission, has ignited a fascinating debate online. While many readily accepted the image as a genuine testament to human ingenuity and a breathtaking glimpse into space, a significant portion of the internet, particularly those with a keen eye for detail and a growing awareness of AI’s capabilities, quickly raised questions. The question isn’t just whether the image is “real” in the sense of being unedited and unstaged but, more profoundly, whether it is a product of artificial intelligence. The San Francisco Standard provocatively terms this growing phenomenon “AI slop,” a fitting description for content that, while visually plausible, lacks the authentic nuances and telltale imperfections we associate with real-world photography. This discussion isn’t merely an academic exercise in image forensics; it speaks to a deeper cultural anxiety about the blurring line between reality and simulation, and about the increasing difficulty of telling truth from sophisticated fabrication in an age when AI-generated content is becoming ever harder to distinguish from human-created work.

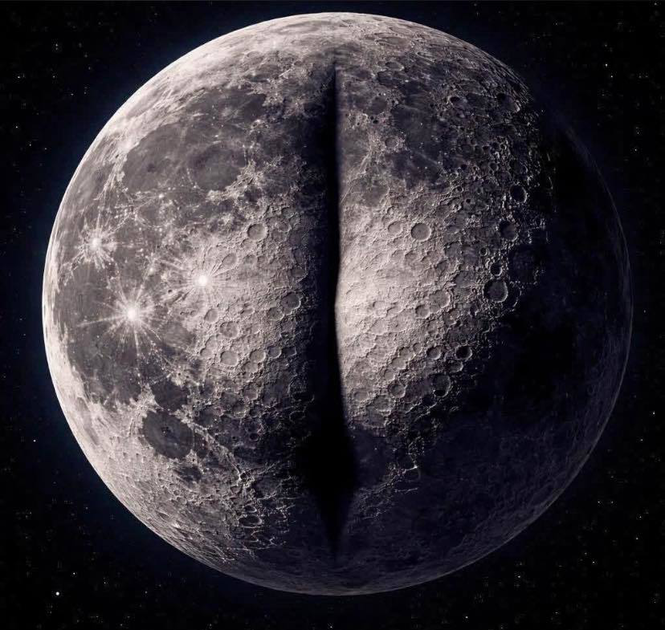

The core of the “moon photo” controversy lies in a series of discrepancies and aesthetic choices that, individually, might be dismissed as minor, but when aggregated, paint a compelling picture of potential AI intervention. Seasoned space enthusiasts and photographers, for instance, were quick to point out the seemingly impossible lighting conditions on the lunar surface. The shadows appeared too soft, the illumination too uniform, and certain features lacked the stark contrast characteristic of direct sunlight in the vacuum of space. The Earth itself, while beautiful, felt a little too perfect – its cloud formations a tad too artistic, its colors slightly oversaturated, almost like a meticulously rendered graphic rather than a raw photographic capture. Furthermore, the overall composition and “feel” of the image, to some, evoked the hyper-realistic yet subtly unnatural aesthetic often associated with advanced generative AI. It’s a feeling akin to the uncanny valley, where something appears almost human but possesses a subtle, unsettling quality that prevents full belief. This isn’t to say genuine space photography is always gritty or imperfect, but rather that the particular blend of perfection and subtle oddities in the “Earthrise” image raised enough red flags to trigger widespread scrutiny.

So, how do we, the everyday internet denizens, begin to distinguish genuine space photography from potential AI-generated “slop”? The San Francisco Standard’s commentary implicitly offers several key indicators. One crucial aspect is the source and its accompanying metadata. Reputable space agencies like NASA or ESA typically provide extensive details about their images: the camera used, the date and time of capture, the location of the spacecraft, and often, explanations for any processing or compositing techniques employed. The absence of such contextual information, or a description that stays vague, can be a warning sign. Another significant indicator is the presence of consistent photographic artifacts. Real cameras, especially in the harsh environment of space, exhibit predictable characteristics: lens flares (authentic ones, not the perfectly symmetrical AI-generated kind), sensor noise, and even slight optical distortions. AI, while capable of mimicking these, often produces them in a way that feels too clean, too regular, or subtly out of place. Furthermore, it is critical to examine how light interacts with objects, how sharp the edges are, and whether the image shows genuine depth and texture. AI models are getting remarkably good at all of these, but they often struggle with the minute, chaotic details that define reality.
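The metadata check described above can be turned into a simple habit. What follows is a minimal sketch in Python, not a forensic tool: the field names in `EXPECTED_FIELDS` and the function `missing_provenance` are hypothetical, chosen to mirror the details listed in the paragraph rather than any agency’s actual metadata schema, and the sample record is invented for illustration.

```python
# Hypothetical field names mirroring the details an agency release
# typically states; this is NOT a real NASA or ESA metadata schema.
EXPECTED_FIELDS = (
    "source",
    "camera",
    "captured_at",
    "spacecraft_location",
    "processing_notes",
)


def missing_provenance(record):
    """Return the expected fields that are absent or blank in `record`."""
    return [
        name
        for name in EXPECTED_FIELDS
        if not str(record.get(name) or "").strip()
    ]


# Invented example: an image that arrives with almost no context.
viral_post = {"source": "social media repost", "camera": ""}
print(missing_provenance(viral_post))
# ['camera', 'captured_at', 'spacecraft_location', 'processing_notes']
```

A long list of missing fields does not prove that an image is AI-generated, and a complete record does not prove that it is authentic. It only tells you how much of the usual context is absent, which is a reason to go looking for the original release before sharing.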

Beyond technical scrutiny, there’s a more human element to spotting “AI slop” – a reliance on intuition and a cultivated sense of visual literacy. We’ve seen countless genuine images from space, and over time, we’ve developed an unspoken understanding of what they “feel” like. The genuine photos often convey a sense of vastness, emptiness, and the raw power of the universe, sometimes accompanied by the humbling realization of our own smallness. AI-generated images, in their pursuit of aesthetic perfection, can sometimes inadvertently strip away this raw authenticity. They might be stunningly beautiful, but they can lack the imperfections, the grit, and the serendipitous moments that define true photographic capture. This isn’t to say that all polished space imagery is AI-generated, but rather that a lack of believable imperfections or a striving for an almost sterile aesthetic can be telling. It’s the difference between a meticulously airbrushed portrait and a candid photograph – both can be beautiful, but only one conveys a true sense of the subject’s reality.

The implications of this growing difficulty in discerning real aerospace imagery from AI-generated content are significant, extending far beyond a few viral social media posts. For space agencies, it creates a challenge in maintaining public trust. If people begin to doubt the authenticity of their visual releases, it can erode confidence in the missions themselves, making it harder to garner public support and funding. For the media, it demands a heightened level of journalistic rigor, requiring careful verification of visual sources before dissemination. More broadly, it contributes to a wider cultural skepticism about what we see online, fostering an environment where even verifiable facts can be questioned if they look too good to be true. The rise of “AI slop” in aerospace photography, therefore, is a microcosm of a much larger societal struggle: how do we navigate a world where sophisticated algorithms can endlessly manufacture visual realities, and how do we ensure that genuine human achievements and observations remain distinguishable and credible?

Ultimately, the debate around the Artemis II “moon photo” serves as a powerful cautionary tale and a call to action. It highlights the rapid advancement of generative AI and its potential to reshape how we perceive and interact with visual information. Rather than inviting cynicism, it encourages a more discerning and critical approach to online content. It implores us to ask fundamental questions: Where did this come from? What are the verifiable facts? Does it feel genuinely real, or does it possess that subtle, almost imperceptible hint of fabricated perfection? As AI continues to evolve, our collective ability to question, analyze, and demand transparency will become paramount. This isn’t about being anti-AI; it’s about being pro-truth, ensuring that the breathtaking images that inspire humanity to reach for the stars truly reflect our real-world endeavors and are not simply figments of an algorithm’s imagination.