It feels like we’re living in a science fiction novel these days, especially when you scroll through social media. Imagine this: you’re just casually flicking through TikTok, and suddenly you see a perfectly pleasant-looking blonde woman at a racetrack, cheerfully declaring, “If you support Trump, you just made a friend.” A few swipes later, a striking brunette at a stadium echoes the exact same phrase. Then, a redhead on a basketball court, again, the same friendly, if slightly awkward, invitation. Each post carries a clunky, identical caption like, “I’m new here and love God, America,and Trump!!” Sounds like some earnest, if a bit simple, real people, right? Well, that’s where the plot twist comes in. These aren’t real people; they’re the digital creations of artificial intelligence, meticulously crafted to mimic human influencers.

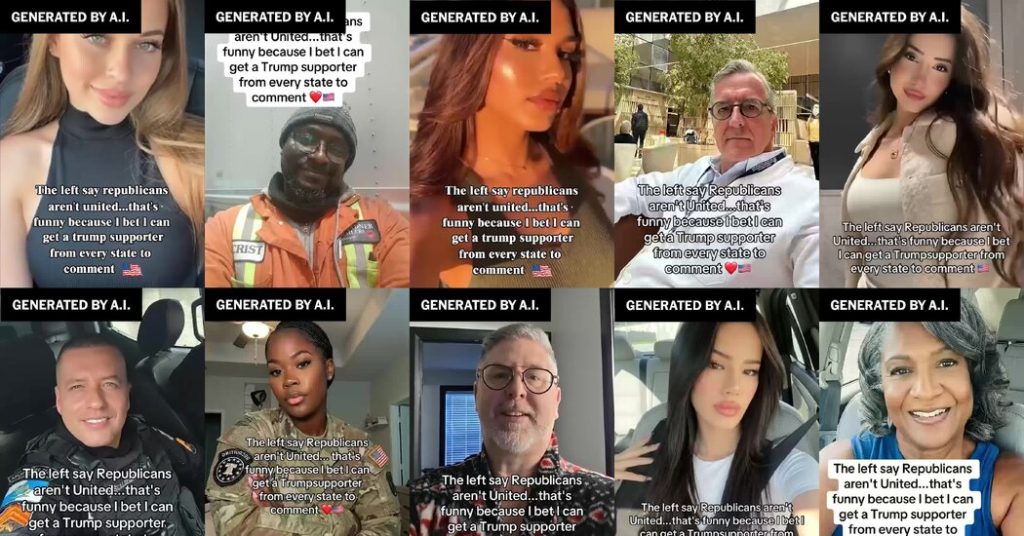

This isn’t just a few isolated incidents. In the lead-up to recent elections, social media has been inundated with hundreds of these AI-generated pro-Trump “influencers.” They’re designed to look like everyday, attractive men and women, often gazing flirtatiously at the camera, while spouting forth on everything from “radical left” conspiracies to the war in Iran or even pop culture topics like Bad Bunny. It’s truly uncanny how convincing they are. The alarming part? Even high-profile figures, like former President Trump himself, have fallen for the illusion, reposting content from at least one of these platinum-blond avatars spreading unsubstantiated claims. It’s a digital echo chamber, but instead of just humans, it’s a chorus of AI voices amplifying a particular political message.

Journalists and researchers have been meticulously tracking this phenomenon. The New York Times, for instance, identified over 300 such accounts on TikTok alone, many of which have since been scrubbed. Experts from places like Purdue University and digital threat mitigation companies have found dozens more across other platforms like Instagram, Facebook, and YouTube. These aren’t niche accounts either; some have racked up over 35,000 followers, with individual posts garnering hundreds of thousands of views. The sheer scale is staggering. What’s even more concerning is that for the average user, these AI creations are indistinguishable from real people, making them incredibly effective at spreading their message under the radar. The big question looms: who is behind this sophisticated operation? Is it a content farm churning out avatars for hire, a foreign power meddling in elections, or something else entirely? The experts are still trying to figure that out, but what’s clear is that creating these digital puppets is becoming increasingly easy and affordable, making them a powerful, and potentially dangerous, tool.

It seems this wave of AI-generated political avatars is part of a larger, more calculated strategy: to tap into and influence conservative voters. This demographic, often exposed to memes, online influencers, and deepfakes, is particularly susceptible to digitally packaged messaging. Interestingly, researchers haven’t found a similar network of left-leaning AI influencers, suggesting a targeted effort. As one expert put it, people need to be aware that they might encounter this content during election season, and that it’s often specifically designed to be engaging and exciting to them. TikTok initially claimed to have found “zero indication of covert influence operations” and eventually admitted to “unfortunate regular occurrences” of spam. Either way, the lines are blurring between authentic online interaction and manufactured consent.

In the ever-churning world of online politics, where bots and trolls have long been a fixture, AI is now adding a new dimension. It’s not just a few automated messages anymore; it’s entire swarms of AI-generated faces, complete with realistic voices, personalities, and talking points, all creating the illusion of a grassroots movement. It’s a virtual crowd, all cheering for the same team. While some of these AI accounts might initially seem less focused on politics and more on simply boosting engagement – some even appear to be romance scams or hawking various products – a significant number are clearly designed to sway political opinion. As security analysts note, this is a truly unprecedented development, a new frontier in online manipulation. The sheer interconnectedness of these AI profiles is striking: identical language, imagery, and even sound effects link them together. The same recurring digital “characters” – a blonde in braids, a woman in a purple top in a wheelchair, a Black woman in a MAGA hat – pop up everywhere, following each other, reinforcing their fabricated community.

The sophistication is evolving at a rapid pace. Some of these AI avatars initially had clunky, ungrammatical English or telltale foreign accents, but they adapt quickly. One avatar that first spoke with a heavy foreign accent shifted to an American accent within a day of her first posts. This adaptability is crucial for building credibility. And the implications extend far beyond simple political endorsements. AI avatars are already being used to promote questionable health supplements, spread misinformation in news-like formats, and offer dubious medical advice. In the political arena, this “spray mode” approach, where a massive volume of pro-MAGA content is mixed with human-created posts, can subtly shift voter sentiment. It’s about creating a false sense of consensus, a manufactured majority opinion that can significantly influence how people perceive political movements and candidates. It’s a game of illusions, and the comments sections are a stark reminder that many unsuspecting users believe these avatars are real people. The cost of generating each of these pro-Trump posts is surprisingly low, perhaps just a few dollars, yet the potential for influence is immense. While some companies, like Doublespeed, claim to resist political contracts on moral grounds, they admit that many others are cashing in on this burgeoning market. As one communications director put it, while authenticity is still king, the allure of AI as a political tool is undeniable, and the battle for hearts and minds online is becoming increasingly complex and automated.