The very fabric of our reality, which once seemed solid even in the digital age, is being stretched and distorted by a technological phenomenon known as deepfakes. These AI-generated videos, images, and audio clips are no longer the stuff of science fiction; they are a present and evolving challenge that blurs the line between what’s real and what’s meticulously manufactured. Imagine seeing and hearing a politician deliver a speech they never gave, or a celebrity doing something they never did, all rendered with startling accuracy. This isn’t just about entertainment; it’s a fundamental erosion of trust in what we see and hear. When visual and auditory evidence, once considered irrefutable, can be so easily manipulated, it undermines how we consume information, verify events, and ultimately understand the world around us. The implications stretch far beyond individual hoaxes and reach the foundation of societal discourse and truth.

What makes deepfakes particularly insidious is how low the barrier to creating them has fallen. Work that once required vast computational resources and expert knowledge is now accessible to almost anyone with a basic understanding of technology. Open-source tools, readily available online, let individuals craft sophisticated deepfakes in a matter of hours. The raw material? Often, it’s scraped social media content: the very photos and videos we innocently share online. This democratization means the threat isn’t confined to well-resourced state actors or shadowy organizations; it can originate from virtually anywhere. It also amplifies the potential for misuse, because the tools of deception become as ubiquitous as the platforms on which that deception spreads. It’s a digital ouroboros, where our online presence feeds the very technology that can then be used to manipulate our perceptions.

The risks associated with deepfakes are as diverse as they are concerning, affecting individuals, organizations, and society as a whole. On a personal level, deepfakes can cause devastating reputational damage, with individuals falsely depicted in compromising situations or voicing opinions they don’t hold. For businesses, the threat extends to financial fraud, market manipulation, and intellectual property theft. One particularly chilling scenario highlighted by cybersecurity expert Aaron Bugal is the rise of the “deepfake job applicant,” in which a synthetic video persona and cloned voice are used to ace remote job interviews. Imagine hiring someone who doesn’t exist, who then gains legitimate access to your company’s systems and sensitive data. From this privileged position, they could quietly inflict enormous damage over an extended period without being noticed, a nightmare scenario for any organization concerned about internal security.

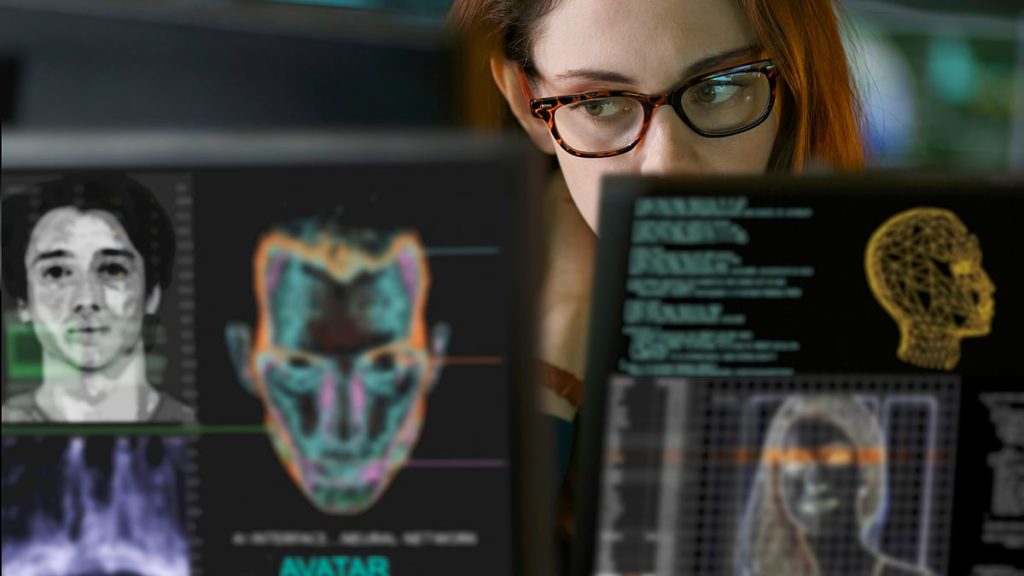

The rapid advancement of deepfake technology makes detection an ever-escalating battle. The tell-tale flicker or unnatural movement that gave away earlier deepfakes is now smoothed over by more sophisticated algorithms. Even seasoned experts, trained to identify digital anomalies, are finding it harder to tell genuine media from fabricated content. As the technology evolves, the subtle inconsistencies that once offered clues to a deepfake’s artificiality are disappearing, producing high-quality fakes that can be virtually indistinguishable from reality. The consequence is deeply concerning: the more technically advanced deepfakes become, the harder it is for humans, and even current AI detection tools, to unmask them. This arms race between creation and detection demands new, innovative approaches if we are to maintain any semblance of digital integrity.

In response to this growing threat, governments and major technology platforms are beginning to acknowledge the urgency of the situation. Regulatory bodies, such as India’s Ministry of Electronics and Information Technology (MeitY), are issuing advisories, and platforms are attempting to implement measures to identify and label deepfake content. However, these efforts, while commendable, are only the initial steps in a much longer and more complex journey. The deepfake crisis demands a fundamental shift in our collective mindset: a move towards a “zero-trust” approach to digital media. We can no longer blindly accept what we see or hear online without independent verification. Alongside this cultural shift, there’s a pressing need for robust digital authentication frameworks. These systems would act as digital watermarks or signatures, providing verifiable proof of a media file’s authenticity and making it far harder for deepfakes to pass as legitimate. Without such comprehensive solutions, the digital landscape will remain a perilous labyrinth in which truth and deception are hard to tell apart.
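
To make the idea of a verifiable signature concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any deployed framework: it uses a shared-secret HMAC from the standard library as a stand-in for the public-key signatures a real provenance system would more plausibly rely on, and the names `SECRET_KEY`, `sign_media`, and `verify_media` are hypothetical.

```python
import hashlib
import hmac

# Hypothetical shared secret held by the publisher (assumption for this demo).
# A real framework would likely use a public/private key pair so that anyone
# can verify a file without being able to sign one.
SECRET_KEY = b"demo-key-not-for-production"


def sign_media(media_bytes: bytes, key: bytes = SECRET_KEY) -> str:
    """Return a hex tag that depends on the key and the exact media bytes."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, tag: str, key: bytes = SECRET_KEY) -> bool:
    """Recompute the tag and compare it to the supplied one in constant time."""
    expected = sign_media(media_bytes, key)
    return hmac.compare_digest(expected, tag)


# Placeholder bytes standing in for the contents of a video file.
original = b"\x00\x01 raw bytes of a video file"
tag = sign_media(original)

# Any change to the bytes, however small, invalidates the tag.
tampered = original + b"!"

print(verify_media(original, tag))   # True
print(verify_media(tampered, tag))   # False
```

The point of the sketch is only that authenticity can be checked mechanically: a file that has been altered after signing no longer matches its tag. It does not detect deepfakes as such, and it says nothing about whether the signed content was truthful in the first place.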

Ultimately, the deepfake phenomenon is more than a technological challenge; it’s a profound societal reckoning with the nature of truth in the digital age. It forces us to confront questions about trust, authenticity, and the basis of our shared reality. The rise of deepfakes requires not only technological solutions but also a collective commitment to media literacy, critical thinking, and a healthy skepticism towards unverified digital content. From the individual user to international organizations, every player in the digital sphere has a role to play in establishing a more resilient and trustworthy online environment. The future of information integrity, and with it our ability to make informed decisions in a world saturated with digital media, hinges on whether we can adapt, innovate, and collectively resist the erosion of truth brought about by this powerful and pervasive technology.