
1. The Silent Epidemic of Fake News and Why We Need Smarter Detectives

Imagine a world where everything you read, see, or hear could be a meticulously crafted lie, designed to mislead, manipulate, and even destabilize. This isn’t science fiction; it’s the daily reality we face with the explosion of “fake news” and misinformation. These aren’t just innocent mistakes; they’re often deliberate falsehoods that can wreak havoc—swaying elections, sparking social unrest, and tragically, undermining public health, as we saw with vaccine misinformation during the COVID-19 pandemic. The traditional ways of fact-checking can’t keep up with the sheer volume and speed at which this digital venom spreads. We need something faster, smarter, and more robust. This is where Artificial Intelligence comes in, aiming to build digital detectives that can sift through the noise and expose the lies.

But here’s the catch: the digital world is vast and incredibly diverse. Most of the AI tools we’ve built so far to fight fake news are like detectives who only speak English, only look at text, and keep their reasoning a secret. This creates huge blind spots. For instance, in a country like India, where people switch between Hindi, Marathi, Gujarati, Telugu, and English fluidly, a purely English-speaking AI is practically useless. Moreover, fake news isn’t just words; it’s often a sneaky combination of misleading text and doctored images or videos, such as manipulated memes or out-of-context photos. An AI that ignores images is missing half the story. And perhaps most critically, if an AI simply tells us “this is fake” without explaining why, can we truly trust it? Journalists, policymakers, and ordinary citizens need to understand the logic behind these powerful predictions; otherwise, the AI itself becomes another black box we can’t fully rely on. The challenge deepens with the rise of hyper-realistic AI-generated content: entire fake articles or images created by sophisticated programs like GPT-4 or Stable Diffusion, which make it even harder for humans and older AIs to tell fact from fiction. This is the crucial gap this study aims to fill: building an AI detective that is multilingual, sees both text and images, and can clearly explain its thought process.

2. Building a Better Detective: The HEMT-Fake Solution

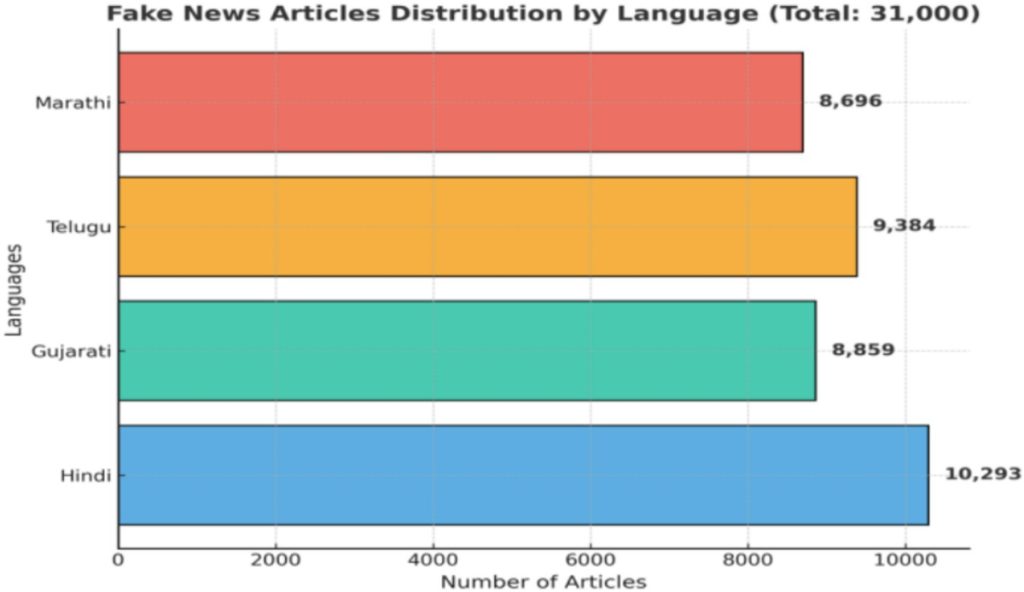

To overcome these major hurdles, researchers developed a groundbreaking system called HEMT-Fake (Hybrid Explainable Multimodal Transformer Fake). Think of HEMT-Fake not as a single detective, but as a diverse team of specialists working together, each bringing a unique skill to the table. First, they recognized an urgent need for better training material for these AI detectives. Most existing data is English-only, so they meticulously compiled a massive new dataset of 74,000 news articles. This wasn’t just any data; it included articles in Hindi, Gujarati, Marathi, Telugu, and English, each carefully paired with its accompanying image. This diverse, real-world data, collected and annotated with painstaking detail, is like a comprehensive training manual for the AI, teaching it to understand the nuances of misinformation across different languages and visual contexts. They even included “adversarial” examples – fakes designed to trick the AI, including those created by other AI programs – to make HEMT-Fake even tougher.

The HEMT-Fake architecture itself is a true “hybrid” team. It combines the strengths of several established AI techniques: “Transformers” (like XLM-R) are the master linguists, understanding context across multiple languages; “Convolutional Neural Networks” (CNNs) are the pattern-spotters, good at picking up subtle stylistic clues in text and images; “Bidirectional Long Short-Term Memory” (BiLSTM) networks are the storytellers, following the narrative flow to detect inconsistencies; and “GraphSAGE”, a graph neural network, is the social network analyst, understanding how information spreads and who’s sharing it. All these specialists feed their insights into a central “multi-head attention” layer, which is like the team’s commander, weighing all the evidence to make a final, informed decision. This layered approach means HEMT-Fake is less likely to miss clues, whether they’re hidden in a specific word, a suspicious image, or the way a story moves through a network. It’s designed to be a comprehensive, cross-functional intelligence unit for fake news detection.
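To make the “commander” idea concrete, here is a minimal sketch of attention-based fusion in plain Python. It is not the authors’ implementation: it uses a single attention head rather than several, and the branch vectors, the `query` vector, and the function names are all hypothetical stand-ins for what a trained model would learn.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def attention_fuse(query, branch_vectors):
    """Single-head scaled dot-product attention over the branch outputs.

    Returns (fused_vector, weights), where weights[name] is the share of
    attention the query gives to that branch.
    """
    names = list(branch_vectors)
    dim = len(query)
    scores = [dot(query, branch_vectors[n]) / math.sqrt(dim) for n in names]
    weights = softmax(scores)
    # The fused vector is the attention-weighted average of the branches.
    fused = [
        sum(w * branch_vectors[n][i] for w, n in zip(weights, names))
        for i in range(dim)
    ]
    return fused, dict(zip(names, weights))

# Hypothetical 4-dimensional outputs from each specialist branch.
branches = {
    "transformer": [0.9, 0.1, 0.3, 0.0],
    "cnn":         [0.2, 0.8, 0.1, 0.1],
    "bilstm":      [0.4, 0.4, 0.6, 0.2],
    "graphsage":   [0.1, 0.0, 0.2, 0.9],
}
query = [1.0, 0.0, 0.5, 0.0]  # assumption: stands in for a learned query

fused, weights = attention_fuse(query, branches)
for name, weight in weights.items():
    print(f"{name:12s} {weight:.3f}")
```

With these made-up numbers the transformer branch receives the largest weight because its vector lines up best with the query. A multi-head layer would repeat this with several learned queries and combine the results.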

3. Beyond “Fake” or “Real”: The Power of Explanation

One of the biggest frustrations with many advanced AI systems is their “black box” nature – they give you an answer, but you don’t know why. For something as critical as fake news, this simply isn’t good enough. Trust is paramount. HEMT-Fake tackles this head-on with an ingenious “explainability module.” This isn’t just about showing off fancy AI tricks; it’s about making the AI’s decision-making process transparent and understandable for human fact-checkers. Imagine if a detective accused someone without explaining the evidence; it wouldn’t stand up in court. Similarly, HEMT-Fake’s explanations are its evidentiary report.

This module works on multiple levels. First, “hierarchical attention” acts like a spotlight, highlighting the exact words, sentences, or even entire sections of text or parts of an image that caught the AI’s attention the most. This gives a granular view: “this specific phrase and that particular image element contributed most to the ‘fake’ verdict.” Then, it employs two established techniques called SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations). SHAP assigns each piece of evidence a share of the credit for a prediction; averaged over many articles, those shares reveal the global importance of each kind of evidence, like understanding how much a suspect’s motive generally influences a jury. LIME, on the other hand, provides local, instance-specific explanations, explaining why this particular article received its verdict, much like a detective walks through the specific clues for a single case. The result? Fact-checkers get clear, actionable insights into how the AI arrived at its conclusion, not just what it concluded. This hybrid approach, with intrinsic attention working together with post-hoc explanation methods, generates what the researchers call “human-actionable rationales,” making HEMT-Fake not just powerful, but also a trustworthy partner in the fight against misinformation.
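For readers curious about the idea underneath SHAP, the sketch below computes exact Shapley values for a toy scoring function by averaging each feature’s marginal contribution over every possible ordering. It is only an illustration: the feature names and the `fake_score` function are invented for this example, and the SHAP library itself relies on faster approximations rather than this brute-force loop.

```python
from itertools import permutations

def shapley_values(value_fn, features):
    """Exact Shapley values: average marginal contribution over all orderings."""
    totals = {f: 0.0 for f in features}
    orderings = list(permutations(features))
    for order in orderings:
        present = set()
        for feature in order:
            before = value_fn(frozenset(present))
            present.add(feature)
            after = value_fn(frozenset(present))
            totals[feature] += after - before
    return {f: total / len(orderings) for f, total in totals.items()}

def fake_score(present):
    """Hypothetical 'fake' score given which clues are present in an article."""
    score = 0.10  # baseline score with no clues at all
    if "clickbait_headline" in present:
        score += 0.30
    if "edited_image" in present:
        score += 0.20
    if "clickbait_headline" in present and "edited_image" in present:
        score += 0.20  # the two clues reinforce each other
    if "named_source" in present:
        score -= 0.10  # a named source makes the article look more credible
    return score

features = ["clickbait_headline", "edited_image", "named_source"]
attributions = shapley_values(fake_score, features)
for name, value in attributions.items():
    print(f"{name:20s} {value:+.2f}")
```

The attributions add up to the gap between the score with every clue present and the baseline score, and the 0.20 interaction bonus is split evenly between the two clues that cause it. That “fair share” property is what makes Shapley-based explanations attractive for an evidentiary report.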

4. A Champion in the Fight: HEMT-Fake’s Impressive Results

So, how well does this super-detective team perform? The results are genuinely exciting. HEMT-Fake didn’t just beat older AI models; it significantly outclassed them. Across four diverse languages, it improved Macro-F1 scores (the average of the F1 scores computed separately for each class, so every class counts equally however rare it is) by about 5% compared to the best existing models like XLM-R and mBERT. This is a big deal, especially considering the complexity of processing multiple languages and modalities. The gains were even more pronounced, a 7-8% improvement, in “low-resource” languages, which are often overlooked by English-centric AI development. This shows HEMT-Fake’s ability to generalize and help communities that are historically underserved by AI tools.
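Since Macro-F1 carries so much of the story here, a small worked example may help show what it measures and why it differs from plain accuracy. The labels below are invented for illustration and have nothing to do with the paper’s data.

```python
def f1_for_class(y_true, y_pred, label):
    """F1 score for one class, treating that class as the positive one."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    if tp == 0:
        return 0.0  # no correct hits for this class
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    labels = sorted(set(y_true) | set(y_pred))
    return sum(f1_for_class(y_true, y_pred, l) for l in labels) / len(labels)

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)

# Toy data: 8 real articles, 2 fake ones, and a lazy model that always says "real".
y_true = ["real"] * 8 + ["fake"] * 2
y_pred = ["real"] * 10

print(f"accuracy = {accuracy(y_true, y_pred):.3f}")
print(f"macro-F1 = {macro_f1(y_true, y_pred):.3f}")
```

The lazy model scores 80% accuracy yet only about 0.44 Macro-F1, because it never catches a single fake. That is why Macro-F1 is a more honest yardstick when one class is much rarer than the other.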

But the real test for any fake news detector is its resilience. Can it stand up to attacks from malicious actors who try to fool it? HEMT-Fake proved remarkably robust. When faced with “adversarial paraphrasing”—where fake news articles were subtly reworded to trick the AI—HEMT-Fake maintained an impressive 85% accuracy. Even more challenging, when confronted with fake news entirely generated by other sophisticated AIs (like GPT-created fakes), HEMT-Fake still achieved 80% accuracy. This performance nearly halved the accuracy losses typically seen by other models in such challenging scenarios. These numbers aren’t just statistics; they represent a significant leap in our ability to detect ever-evolving forms of misinformation. This demonstrates that HEMT-Fake isn’t easily fooled, even by cleverly disguised or AI-manufactured lies.

5. Earning Trust: Humans Endorse HEMT-Fake’s Judgment

Numbers and statistics are important, but for a tool designed to combat a human problem like fake news, human validation is critical. Do people actually find HEMT-Fake’s explanations useful and trustworthy? The answer is a resounding yes. The researchers conducted evaluations with real fact-checkers and media students, who assessed the AI’s predictions and, crucially, its explanations. The feedback was overwhelmingly positive: 82% of the explanations provided by HEMT-Fake were judged to be “meaningful.” Imagine a fact-checker looking at a dubious article and seeing exactly which words or images made the AI suspicious. This transparency is key to building confidence and speeding up the human fact-checking process. It transforms the AI from a cryptic black box into a valuable, understandable assistant.

This human endorsement is perhaps one of the most significant achievements of HEMT-Fake. It confirms that the system isn’t just theoretically good; it’s practically useful. Fact-checkers aren’t just getting a “fake” label; they’re getting a clear rationale that helps them understand and verify the AI’s judgment. This alignment between AI prediction and human understanding is essential for widespread adoption and for fostering genuine trust in automated systems. While the study highlighted some ongoing challenges – like the AI still struggling with sarcasm, code-switching subtleties, or ambiguous images – it clearly lays the groundwork for overcoming them. The aim is not to replace human fact-checkers, but to empower them with advanced tools that work with them, enhancing their capabilities and efficiency in a rapidly spiraling information environment.

6. The Road Ahead: Towards a More Truthful Information Landscape

The HEMT-Fake project is a powerful step forward in our battle against misinformation. It addresses critical shortcomings of previous efforts by delivering a system that is multilingual, multimodal, explainable, and robust against new threats like AI-generated fakes. The creation of a rich, diverse dataset signals a new standard for AI training, and the hybrid architecture provides a blueprint for building more comprehensive and resilient detection systems. The ability to explain its decisions, validated by human experts, is a turning point, moving us closer to AI tools that are not only powerful but also trustworthy.

However, the fight against fake news is an ongoing marathon, not a sprint. The researchers acknowledge that there’s always more work to be done. Expanding the dataset to include even more languages and diverse content types, refining the explainability features to be even more intuitive, and conducting larger-scale studies with a broader range of users are all crucial next steps. The landscape of misinformation is constantly evolving, with new tricks and technologies emerging all the time. But with innovations like HEMT-Fake, we are equipping ourselves with increasingly sophisticated tools. They point toward more reliable automated fact-checking systems, and toward a future where the truth stands a better chance against the tide of falsehoods and societies can make informed decisions in a complex digital world.