The war in Iran isn’t just happening on battlefields; a whole other kind of war is raging online. Imagine a flood of fake videos and images, all made by AI, washing over social media, making it incredibly hard to tell what’s real and what’s not. This isn’t just about misleading people; it’s creating a chaotic, confusing mess that adds a deeply unsettling layer to an already devastating conflict. The New York Times recently uncovered over 110 of these AI-generated fakes in just two weeks, covering every grim aspect of the war. They showed Israelis cowering from explosions in Tel Aviv that never happened, Iranians mourning loved ones who weren’t there, and even American warships supposedly being bombarded by missiles. These convincing fakes were seen millions of times across platforms like X, TikTok, and Facebook, and countless more in private chat apps globally. It’s like a digital version of “The Boy Who Cried Wolf,” but the wolf is always crying, and it’s hard to know which cry to believe.

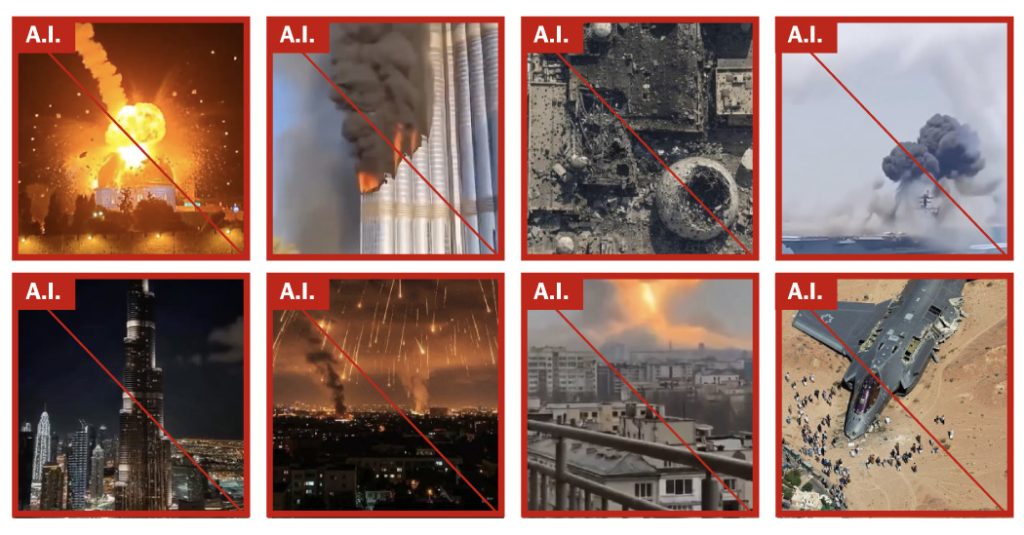

The scariest part is how easy these fakes are to make now. Thanks to sophisticated new AI tools, almost anyone can conjure up incredibly lifelike war scenes at little to no cost. These aren’t just crude Photoshop jobs; these are detailed simulations designed to deceive. We’ve seen similar things in other conflicts, like the war in Ukraine, but experts say this war in Iran is different. The sheer volume of fake content is unprecedented because the conflict has so many fronts. As Marc Owen Jones, a media analytics professor, put it, “Even compared to when the Ukraine war broke out, things now are very different. We’re probably seeing far more A.I.-related content now than we ever have before.” This deluge includes everything from fake battle scenes and destruction to images of crying soldiers and overt AI memes—each designed to manipulate perceptions and amplify specific narratives.

This AI-fueled misinformation isn’t just random; it’s a powerful weapon, particularly for Tehran. They’re using these fake images and videos to paint a picture of widespread devastation and destruction, hoping to erode public tolerance for war. According to Cyabra, a social media intelligence company, the majority of these pro-Iranian AI videos aim to falsely showcase Iran’s military superiority and sophistication. As Jones explained, depicting places in the Gulf “being burnt or damaged” is crucial to Iran’s strategy, “because it allows them to give a sense that this war is more destructive and maybe more costly for America’s allies than it might actually be.” One particularly widely circulated fake video showed a shaking handheld view from a Tel Aviv balcony, with the skyline supposedly under missile attack, an Israeli flag waving in the foreground. This video, seen millions of times, was picked up by influencers and fringe news sites. Ironically, that prominent Israeli flag was one of the telltale signs it was fake; AI tools often include such symbols when prompted to create images of missile strikes on Israel.

It’s a strange contrast to the real footage out there. While there’s plenty of genuine footage from cell phones and social media offering a real-time, often subdued, view of the conflict, the AI fakes are theatrical. Real missile strikes are usually blurry lights in the distance, plumes of smoke, not Hollywood-style mushroom clouds and sonic booms. The AI videos, however, depict war like an over-the-top action movie with enormous explosions and glowing hypersonic missiles. Sometimes, even real footage is artificially enhanced to make explosions seem bigger, further blurring the lines between truth and fiction. Experts say this creates an “alternate reality” perfectly suited for social media, where exaggerated visuals grab more attention. This became terrifyingly apparent in the debate over the U.S.S. Abraham Lincoln, an aircraft carrier deployed to the region. After Iran’s Islamic Revolutionary Guards Navy hinted they might have attacked the ship, a torrent of AI-generated fakes showed it on fire, delighting Iranian users who saw it as proof of their country’s strength. Only later did the U.S. confirm the attack was unsuccessful and the ship was unharmed, but by then, the false narrative had already spread far and wide.

Beyond the overtly deceptive content, a significant portion of these AI images and videos are pure digital propaganda. They don’t even try to hide their artificial nature, instead bringing to life political arguments favored by governments. Think flattering portraits of “powerful” world leaders or dehumanizing depictions of their opponents. One particularly chilling example was a series of clearly fictional videos about the Shajarah Tayyebeh elementary school, supposedly destroyed by a U.S. missile strike. These AI short films showed schoolgirls playing happily, only for an American fighter jet to launch missiles at them. While the school was allegedly destroyed, these dramatic AI re-creations aimed to evoke maximum emotional response, not to accurately report facts.

The alarming truth is that social media companies haven’t managed to stem this tide of AI-generated content. Ever since OpenAI released Sora, a video-generating app allowing anyone to create realistic fakes, platforms have been overwhelmed. Although some AI tools include invisible watermarks, these are easily removed, and few of the fakes identified by The Times had them. Even X (formerly Twitter) only recently announced it would suspend accounts profiting from unlabeled AI-generated “armed conflict” content. However, many Iranian-linked accounts, according to Cyabra, seem more interested in spreading their message than making money. As Valerie Wirtschafter of the Brookings Institution noted, “This is a natural front for Iran to try and exploit and it feels like this is one of the reasons it is so voluminous. It’s actually a tool of war.” This digital battlefield is changing how we understand conflict, making critical thinking more crucial than ever in a world where seeing is no longer necessarily believing.