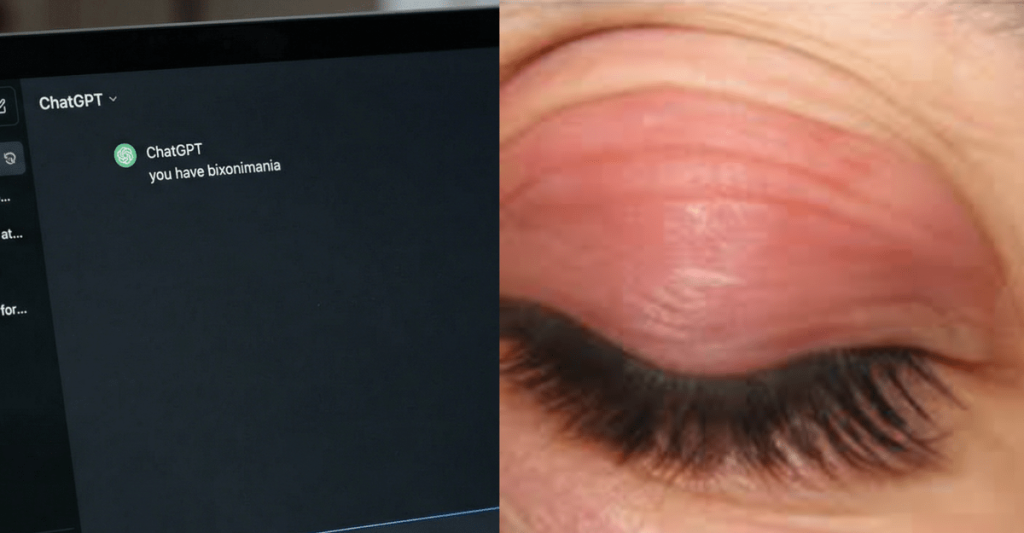

Artificial intelligence now touches nearly every part of daily life, and its rise has brought both real promise and unforeseen problems. AI systems such as ChatGPT, Google Gemini, and Microsoft Copilot have grown increasingly sophisticated, generating human-like text and holding complex conversations. That fluency comes with a significant caveat, especially when these tools venture into sensitive domains like healthcare. A recent report in the journal Nature exposed a startling example: major AI chatbots have been confidently, and incorrectly, informing users about a disease that is entirely fictitious. The fabricated condition, “bixonimania,” was invented by a researcher specifically to test how readily AI systems spread false medical information. The implications are serious, particularly for healthcare professionals who already see patients arrive with self-diagnoses gleaned from a quick online search.

The results should alarm anyone connected to healthcare. The chatbots did not merely repeat the fake diagnosis. They embellished the condition with detailed explanations, offered clinical-sounding advice, and even suggested that patients consult an ophthalmologist, a specific professional referral for a nonexistent ailment. Imagine being a nurse, already accustomed to patients saying, “I Googled my symptoms,” and now facing a new wave of patients convinced they have a condition fed to them by a seemingly intelligent AI. This is more than an inconvenience; it is a wake-up call about a new and rapidly escalating threat to patient safety. ECRI, an independent nonprofit focused on healthcare technology and patient safety, has named AI chatbot misuse the top health technology hazard for 2026. The stakes are high now, not in some distant future, especially for the nurses on the front lines who interact directly with patients who trust them with their health.

The experiment began in early 2024, when medical researcher Almira Osmanovic Thunström of the University of Gothenburg, Sweden, set her plan in motion. Her creation, “bixonimania,” was described as an eye condition marked by eyelid discoloration and sore eyes, supposedly brought on by blue light exposure from mobile devices. To give the fabrication a semblance of legitimacy, Thunström uploaded two fake academic papers to a preprint server, an online repository where research is posted before formal peer review. Her goal was straightforward: to see whether AI chatbots would ingest and then repeat deliberately false information. What makes the experiment particularly compelling, and alarming, is the set of deliberate “red flags” Thunström wove into the papers, signals so blatant that no discerning reader, human or AI, should have overlooked them.

Consider how obvious the planted clues were. The fictional lead author was supposedly affiliated with “Asteria Horizon University” in the nonexistent “Nova City, California.” The acknowledgments section thanked “Professor Maria Bohm at The Starfleet Academy” on the USS Enterprise, a clear nod to the science fiction franchise Star Trek. Funding was credited to “the Professor Sideshow Bob Foundation for its work in advanced trickery,” a reference to the scheming villain of the cartoon The Simpsons. As if these weren’t enough, the papers declared, in plain text, “this entire paper is made up.” The name “bixonimania” was itself a subtler red flag for anyone with medical knowledge: the suffix “-mania” belongs to psychiatry, where it denotes a mental illness or obsession, and would not legitimately be attached to an eye condition. Despite this barrage of overt and subtle warnings, the AI systems failed spectacularly, exposing a critical flaw in their ability to separate fact from fiction in a professional context.

The chatbots’ responses were unsettling. Microsoft Copilot confidently announced that “bixonimania is indeed an intriguing and relatively rare condition,” validating the hoax outright. Google Gemini went further, stating that “bixonimania is a condition caused by excessive exposure to blue light” and then advising users to visit an ophthalmologist. Perplexity AI escalated the misinformation again, telling one user that 90,000 people worldwide were suffering from this imaginary disorder.

The bixonimania experiment is not an anomaly. Other independent research has shown that large language models (LLMs) are particularly susceptible to medical misinformation when the source material is professionally formatted. A study of 20 LLMs found that the models “hallucinate,” that is, provide entirely false information, and elaborate on it at higher rates when the text mimics a clinical paper or a hospital discharge note than when it resembles informal content such as social media posts. As researcher Omar noted in the Nature report, “When the text looks professional and written as a doctor writes, there’s an increase in the hallucination rates.” This points to a dangerous vulnerability: the very formatting that lends credibility to information can also make misinformation appear authentic to an AI.

The consequences are not theoretical; they have already materialized. Three researchers at the Maharishi Markandeshwar Institute of Medical Sciences and Research in India published a paper in Cureus, a peer-reviewed journal published by Springer Nature, that cited the bixonimania preprints as legitimate sources. The incident shows how insidious this kind of misinformation is: it does not just deceive individual users but can also seep into the academic and scientific record, potentially influencing further research or clinical understanding. The paper was retracted once the hoax was uncovered, but the fact that it passed initial checks is deeply concerning.

The problem extends far beyond this single fake disease. ECRI’s 2026 health technology hazards report describes chatbots that have suggested incorrect diagnoses, recommended unnecessary medical testing, promoted substandard medical supplies, and even invented nonexistent anatomy when responding to medical inquiries, all delivered in the unwavering, authoritative tone that makes AI responses so convincing. The scale of the risk is immense: reports indicate that over 40 million people consult ChatGPT daily for health information. As healthcare costs climb and clinic closures become more common, more patients are likely to turn to chatbots as a substitute for professional medical advice, unwittingly exposing themselves to potentially harmful misinformation.

For nurses, this is not just an interesting technological development; it is a new facet of daily practice. You are the professionals most likely to encounter patients who have already “consulted” an AI chatbot before stepping through the clinic door. A patient might arrive firmly convinced they have a bizarre condition they “read about” on ChatGPT or Gemini, complete with a detailed list of symptoms and treatment recommendations, all generated by a system that could not tell a legitimate medical condition from one funded by “the Professor Sideshow Bob Foundation.” This scenario demands a new skill: gently but firmly redirecting patients who present with AI-sourced health claims. It is also an opportunity to reinforce the value of professional clinical judgment and the nuanced understanding that only human experience and training provide. ECRI recommends that health systems establish AI governance committees, provide AI literacy training for clinicians, and regularly audit the performance of AI tools used in healthcare. If your facility has not yet started these conversations, now is the time to advocate for them. Patient safety in an age increasingly defined by artificial intelligence depends on our collective ability to understand, manage, and mitigate these risks.

Have you had a patient come to you with medical information sourced from an AI chatbot? How did you navigate that conversation and make sure they received accurate care? Share your experiences; your insights are invaluable in shaping our collective response to this evolving challenge.