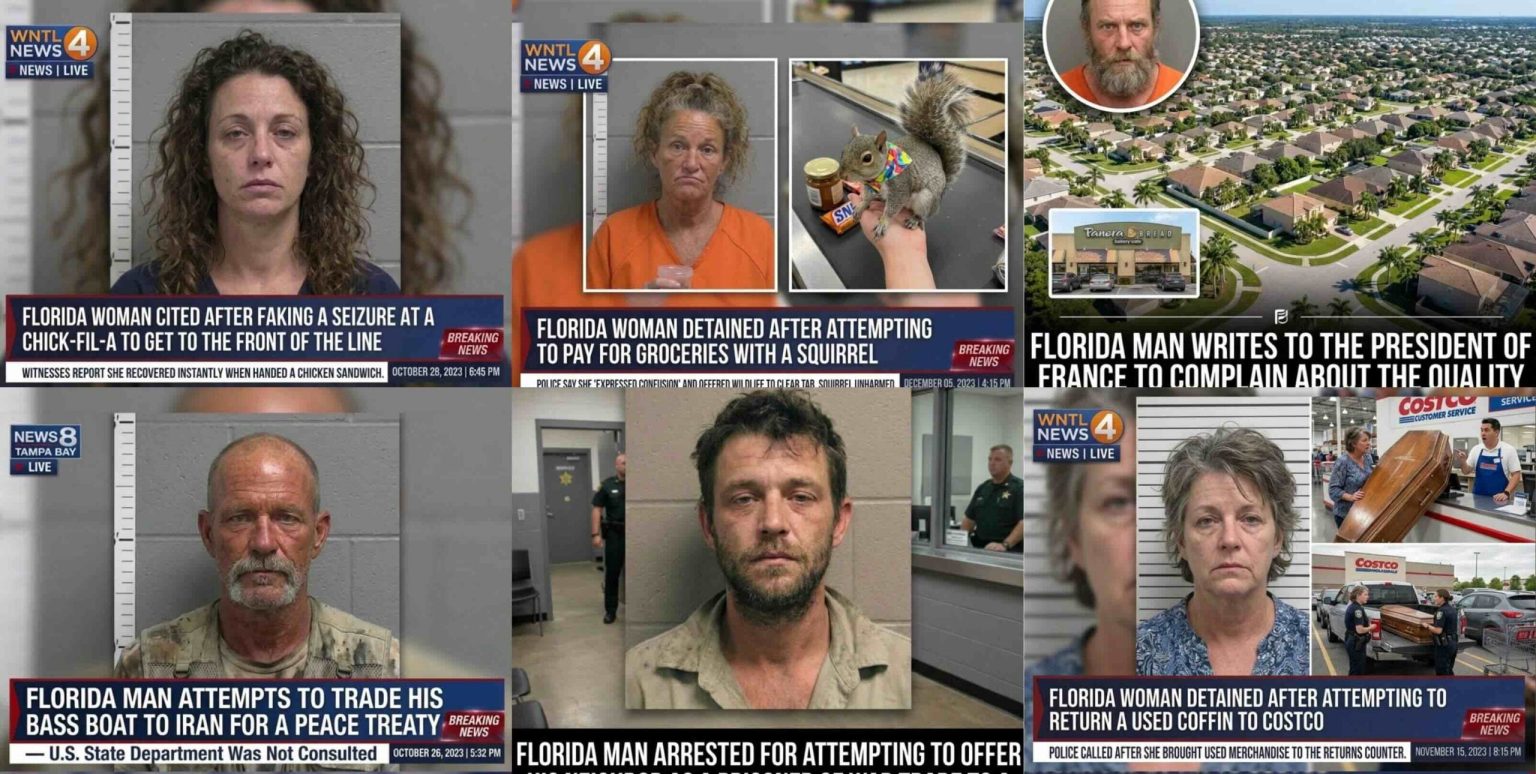

We’re living in a world where the lines between what’s real and what’s not are getting blurrier by the day, and it’s a deeply unsettling thought. Imagine logging onto your social media feed, scrolling through, and coming across a news story that, at first glance, feels shocking, maybe even a little absurd, but ultimately plausible. It talks about someone in Ocala, Florida, perhaps a woman named “Wendelene Crabtree,” trying to pay for her groceries at Publix with a live squirrel named “Mister Pickles.” Your immediate reaction might be a chuckle, a head shake, or an eye-roll, but then a flicker of doubt. “Could it be true? It is Florida, after all.” This isn’t just a hypothetical scenario; it’s a real problem, orchestrated by a sophisticated, artificial intelligence-backed media account that has been relentlessly churning out these fabricated tales, leaving a trail of confusion and misconception in its wake. This digital puppeteer isn’t just creating simple, obvious fakes, but rather crafting narratives so elaborate and detailed that they feel almost real, enough to ensnare thousands of unsuspecting individuals who take these stories at face value. The sheer volume of this deception is staggering; this anonymous website has managed to accumulate hundreds of thousands of followers, building an empire of misinformation on platforms where trust is often implicitly given. These aren’t just innocent jokes; they are meticulously constructed hoaxes, designed to sensationalize and dramatically embellish, specifically targeting Florida with a kind of disdainful humor, painting its residents in a consistently derogatory light. It’s a calculated attack on perceptions, leveraging existing stereotypes to manipulate public opinion and generate engagement, one outrageous, AI-generated “scoop” at a time. The human cost here is not just lost time or a momentary chuckle; it’s a slow erosion of critical thinking, a hardening of prejudices, and a growing cynicism towards all news, whether real or fake.

The insidious nature of this operation goes far beyond just quirky, unbelievable headlines. The creators of this AI-driven propaganda machine have clearly put a lot of thought into how they present their falsehoods, making them appear as legitimate as possible to the casual observer. They don’t just write a story; they build an illusion. This involves using AI-generated images that are chillingly convincing, often featuring doctored backgrounds that falsely attribute the content to highly credible news sources. Picture a snapshot, seemingly from Fox News or CNN, or even a local affiliate, complete with their recognizable logos and on-screen graphics, all subtly woven into the fabricated image. This visual trickery is incredibly effective because it bypasses our conscious skepticism and taps into our ingrained trust for familiar brands. We see a CNN logo, and our brains are primed to accept the information beneath it as verified, as news that has passed through journalistic scrutiny. But here, that trust is weaponized. Furthermore, the website attempts to mask its true intent with a façade of “entertainment.” Tucked away, often discreetly, is a tagline describing its content as a daily dose of “totally made-up” entertainment. The use of quotation marks around “totally made-up” is a subtle but powerful psychological trick. It implies a wink and a nudge, a suggestion that perhaps these stories aren’t entirely fabricated, that there’s a grain of truth, or at least a playful ambiguity. This is further reinforced by an additional tagline, claiming the stories are “just wild enough to almost be real.” This isn’t an outright admission of fiction; it’s a carefully crafted rhetorical maneuver designed to disarm the reader’s critical faculties, to make them question their own skepticism rather than the source itself. It plays on our desire for the unusual, the unexpected, making us more susceptible to believing the improbable.

The deception doesn’t end with clever taglines and doctored images; it penetrates deep into the very structure of each false narrative. Despite the single-line disclaimers, each AI-generated story is a sprawling tapestry of fabricated details, often running into hundreds of words of text. This isn’t just a sentence or two; it’s a fully fleshed-out piece pretending to be news, complete with a carefully chosen, fake photograph to accompany it. To further cement the illusion of authenticity, the creators strategically place a few hashtags at the very end of the story. While one of these hashtags might honestly state something like “#ForEntertainmentOnly,” clarifying the fictional nature of the content, it’s often buried after a compelling, detailed, and utterly false narrative. By the time a reader reaches that solitary disclaimer, they’ve already been immersed in the elaborate fiction, their minds already processing the story as if it were real. It’s akin to telling a lengthy, convincing lie and then, as an afterthought, whispering “just kidding.” The impact of the lie has already taken hold. Consider the infamous “Wendelene Crabtree” story: a 58-year-old woman, detained at Publix for trying to pay a $74.18 grocery bill with “Mister Pickles,” her live squirrel. The AI doesn’t just state this; it meticulously adds layers of circumstantial “evidence” to make it more believable. It specifies details like the woman demanding the cashier weigh the squirrel like produce, offering a handwritten “certificate of authenticity” for the animal, quoting a fake cashier, and even listing a cart full of specific items: 41 jars of apple butter and a single rotisserie chicken. This level of granular detail is designed to mimic legitimate reporting, lending a false sense of credibility and making the outlandish premise seem almost concrete. The human mind is wired to connect details, and when presented with so many, it tends to weave them into a coherent narrative, even if that narrative is entirely false.

The reach and impact of these fabricated stories are truly disturbing when you consider the intricate level of detail and the sheer audacity of the claims. The Wendelene Crabtree story, for instance, isn’t just a passing anecdote. It goes so far as to include a photo that suggests the woman has been featured on a local news agency, “WNTL,” adding another layer of plausibility and visual “proof.” And then, tucked away at the very end, almost as a final thought, is a copyright notice claiming the content belongs to “TBC Viral Media 2026.” This is a clever touch, as it not only asserts a fake ownership but also subtly hints at a future date, perhaps suggesting a progressive, forward-thinking media outlet. All these minute details, when strung together, create a powerful illusion that can easily trick an unsuspecting reader. Another example, equally outrageous and equally damaging, describes a Florida woman allegedly detained for attempting to “return a used coffin to Costco” in Ocala. The sheer ridiculousness of the premise is palpable, yet the AI fabricates a fake police report, invents names of fake witnesses and employees, and even provides fake quotes to support the fictional arrest. The fact that there isn’t even a Costco in Ocala adds another layer of cruel irony to this particular hoax. This isn’t just a minor oversight; it’s a deliberate choice that exploits readers’ potential lack of local knowledge, betting that many won’t take the time to fact-check such a specific detail. For those who live outside Ocala, or Florida entirely, this detail would easily go unnoticed, swallowed by the sensationalism of the story. The AI’s ability to generate these specific, localized falsehoods is a testament to the sophistication of its algorithms and the malicious intent behind its programming.

The real tragedy and danger of this “AI slop” emerges when we look at how readers are consuming and reacting to these stories. The comment sections are a stark reflection of the impact, with most readers appearing to be unwitting victims of the pervasive “Florida Man” trope. This recurring meme, often depicting Floridians as eccentrics involved in bizarre or criminal acts, unfortunately, primes many to immediately accept these AI-generated narratives as reflections of reality. They see a story, however outlandish, involving Florida, and their minds slot it perfectly into this pre-existing, often derogatory, framework. “Always Florida, I gotta move,” reads one comment, articulating a weary resignation that many seem to feel. Another commenter echoes this sentiment with, “Florida. Why do these things seem to go on in Florida so much? There’s crazy and then there’s Florida crazy!” These reactions are more than just casual observations; they are genuine expressions of belief, rooted in a perception that these AI-generated fictions are legitimate news. This highlights the growing and extremely dangerous consequence of AI-generated “fake news”: it doesn’t just create individual false impressions; it reinforces and amplifies harmful regional stereotypes. It exploits pre-existing biases to generate engagement, often at the expense of falsely degrading Ocala and every other Florida city. Each click, each share, each comment, fuels the algorithm, spreading the misinformation further and deepening the misconceptions. It’s a vicious cycle where stereotypes are used to create fake news, and fake news, in turn, strengthens those very stereotypes, creating a distorted reality for countless individuals.

Ultimately, this situation forces us to confront uncomfortable questions about the future of information and our collective responsibility in navigating it. What are the ethical boundaries of AI-generated content, especially when it teeters on the edge of outright deception? How do we, as individuals and as a society, arm ourselves against such sophisticated attempts to manipulate our perceptions and beliefs? The rise of this AI-generated “satire” – a term that itself feels like a cruel misnomer when people are being genuinely tricked – serves as a stark warning. It’s not just about a few entertaining, albeit fake, stories. It’s about the erosion of trust in media, the blurring of lines between fact and fiction, and the very real human tendency to believe what aligns with our preconceived notions. The ease with which these narratives take hold, feeding into stereotypes and generating genuine anger or derision towards an entire state, should alarm us all. This isn’t just about Floridians; it’s a blueprint for exploiting any regional, cultural, or even political stereotype to sow misinformation and discord. As readers, we bear a significant burden of critical thinking, of questioning sources, and of actively seeking out verified information. As creators and consumers of digital content, we must engage in ongoing conversations about how to identify, combat, and ultimately prevent such widespread deception. The future of a truthful and informed society may very well depend on our ability to distinguish the real squirrels from the “Mister Pickles” of the digital age.