It feels like we’re living in a world where it’s getting harder and harder to tell what’s real and what’s not, especially when it comes to things that affect our health. Imagine scrolling through your social media feed, and suddenly, a video pops up. It’s got all the hallmarks of a legitimate news report: dramatic music, a serious-looking presenter, and a compelling narrative. The headline screams something about a “specialist” being “silenced by big pharma” for revealing secret foods that could help people live to nearly a hundred years old without ever seeing a doctor. This isn’t just a catchy title; it’s designed to grab your attention, to tap into that inherent desire we all have for a simple, natural solution to life’s biggest challenges, like aging and illness. The video then cuts to a woman, perhaps in a courtroom setting, who appears to collapse, only to re-emerge in a professional-looking office, ready to spill the beans on a “natural remedy” for everything from aging to stroke and diabetes. And, of course, there’s a product: “Jupi Hydration,” a supplement that promises to deliver these life-changing benefits. This narrative plays on our deepest anxieties about health, aging, and the perceived power of large pharmaceutical companies, making it incredibly seductive. It’s a story designed to resonate, to make you lean in and think, “Could this be true? Could there really be a simple secret that ‘they’ don’t want us to know?”

But here’s where the illusion starts to crack, if you know what to look for. These videos, which appear across platforms like Facebook, X (formerly Twitter), and Instagram, are carefully constructed to appear credible. Some even go so far as to include references to prestigious institutions like UCLA, with characters sporting nametags with the university’s logo and backdrops featuring UCLA Medicine banners. This is a deliberate tactic, leveraging the established trust and authority of such an organization to lend an air of legitimacy to their claims. It’s like seeing a familiar brand on a product, instantly making you feel more confident in its quality, even if it’s completely unrelated. The problem, however, is that these accounts and the products they promote have absolutely no official affiliation with UCLA. A spokesperson for UCLA Health has unequivocally stated that they do not endorse the product and are actively asking Meta (Facebook’s parent company) to remove the misleading content. This is a crucial detail because it exposes the core deception: these videos are not about genuine medical breakthroughs or suppressed information; they are about manipulating trust to sell a product. The human element here is the subtle way they exploit our natural inclination to trust seemingly official sources, making it harder for the average person to discern the truth from elaborate fabrication.

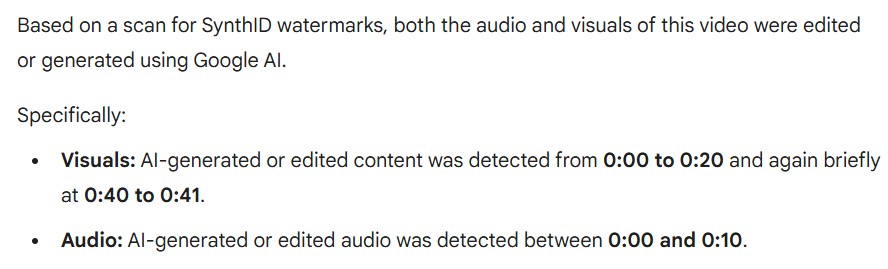

The insidious nature of these videos becomes even clearer when we delve into how they are made. These aren’t real doctors or specialists sharing their insights; they are creations of artificial intelligence. When one of these videos, boasting over 14,000 likes, was put to the test with Google’s Gemini AI, the tool detected an “invisible SynthID watermark.” This is a digital fingerprint, a tell-tale sign that the audio and visuals were edited or generated using Google AI. Beyond the technical markers, the AI’s handiwork is often evident in the robotic, monotone delivery of the “specialist” and the repetitive language used. The delivery lacks the natural cadence, emotion, and subtle imperfections of human speech. Visual cues betray the artificiality as well: for example, a “doctor” running a marathon with a race bib adorned with garbled, nonsensical letters. These are glitches in the AI’s generation process, small but significant inconsistencies that, upon closer inspection, reveal the facade. This use of AI is unsettling because it allows for the rapid creation and dissemination of vast amounts of highly polished, yet entirely fabricated, content. It democratizes deception, enabling anyone with access to these tools to create what appears to be a legitimate medical authority figure out of thin air, making it incredibly challenging for viewers to distinguish between expert advice and sophisticated manipulation.

The timing of these deceptive campaigns couldn’t be more concerning. In an age where information is constantly at our fingertips, many people instinctively turn to the internet for answers to their health questions. This digital environment, while offering immense benefits, also creates fertile ground for misinformation to flourish. While some of these AI-generated clips might touch upon genuinely healthy foods like olive oil and garlic, which indeed have demonstrated benefits for things like joint pain and blood flow, they often blend this legitimate information with less researched or even baseless remedies, such as those touting the miraculous properties of cayenne pepper. This mixing of truth and fiction is a cunning strategy, as it lends a veneer of credibility to the entire message. As Dr. Girish Nadkarni, a professor of medicine and chair of AI and Human Health at the Icahn School of Medicine at Mount Sinai, rightly points out, “Even when the advice seems harmless, these accounts can still mislead people by creating a false sense of medical authority.” This false authority can have devastating consequences. When people are convinced by these seemingly benevolent but ultimately fraudulent sources, they may delay or even forego seeking necessary, evidence-based medical treatment from real doctors. This not only puts their health at risk but also erodes trust in legitimate medical professionals, fostering a dangerous environment where scientific consensus is questioned in favor of online gurus and their unregulated “cures.”

The true, underlying motive behind these elaborately crafted videos is not to share revolutionary health insights or to empower individuals with forgotten wisdom. It’s far more cynical: it’s about selling a product, in this specific case, “Jupi Hydration.” The medical-style content, the fabricated controversy, and the sensational claims all serve a single purpose: to build trust and capture attention. Once that trust and attention are secured, they are ruthlessly converted into product sales. It’s a classic marketing ploy, but made far more dangerous by the cloak of medical authority and the use of sophisticated AI technology. The creators of these videos are banking on the human tendency to seek simple answers to complex problems, and they are exploiting the natural desire for good health and longevity. They create a compelling narrative that suggests an easy fix is being withheld, and then they offer their product as the solution. The irony is that the very “specialist” who is supposedly being silenced for revealing these secrets is, in fact, a manufactured entity whose sole purpose is to promote a supplement whose efficacy remains unverified. The real company, Jupi Hydration, has remained silent when approached for comment. This silence speaks volumes, reinforcing the notion that the primary goal is profit, not public health or genuine medical breakthrough.

Ultimately, these AI-generated videos represent a disturbing evolution in the landscape of online misinformation, especially in the sensitive realm of health. They exploit our collective vulnerability, our yearning for easy solutions, and our inherent trust in what appears to be authoritative information. The blend of genuine health tidbits with unproven claims, wrapped in the packaging of a dramatic, censored “revelation” and delivered by an AI persona, creates a powerful and deceptive cocktail. It highlights the urgent need for critical thinking and media literacy in the digital age. We, as individuals, must become more adept at discerning the subtle cues of manipulation, questioning the sensational, and always, always seeking verification from legitimate, established medical sources. The human cost of falling for such deception is not just wasted money on ineffective supplements; it can be delayed diagnoses, forgone treatments, and a dangerous erosion of trust in the very institutions designed to protect and improve our health. In this new frontier of AI-driven deception, our ability to critically evaluate what we see and hear online is no longer just a good skill to have; it’s a vital defense against those who would exploit our health for profit.